Model selection

Model selection is the task of selecting a statistical model from a set of candidate models, given data. In the simplest cases, a pre-existing set of data is considered. However, the task can also involve the design of experiments such that the data collected is well-suited to the problem of model selection. Given candidate models of similar predictive or explanatory power, the simplest model is most likely to be the best choice (Occam's razor).

Konishi & Kitagawa (2008, p. 75) state, "The majority of the problems in statistical inference can be considered to be problems related to statistical modeling". Relatedly, Cox (2006, p. 197) has said, "How [the] translation from subject-matter problem to statistical model is done is often the most critical part of an analysis".

Model selection may also refer to the problem of selecting a few representative models from a large set of computational models for the purpose of decision making or optimization under uncertainty.[1]

Introduction

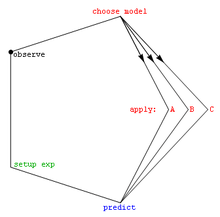

In its most basic forms, model selection is one of the fundamental tasks of scientific inquiry. Determining the principle that explains a series of observations is often linked directly to a mathematical model predicting those observations. For example, when Galileo performed his inclined plane experiments, he demonstrated that the motion of the balls fitted the parabola predicted by his model.

Of the countless possible mechanisms and processes that could have produced the data, how can one even begin to choose the best model? The mathematical approach commonly taken decides among a set of candidate models; this set must be chosen by the researcher. Often simple models such as polynomials are used, at least initially. Burnham & Anderson (2002) emphasize throughout their book the importance of choosing models based on sound scientific principles, such as understanding of the phenomenological processes or mechanisms (e.g., chemical reactions) underlying the data.

Once the set of candidate models has been chosen, the statistical analysis allows us to select the best of these models. What is meant by "best" is controversial. A good model selection technique will balance goodness of fit with simplicity. More complex models will be better able to adapt their shape to fit the data (for example, a fifth-order polynomial can exactly fit six points), but the additional parameters may not represent anything useful. (Perhaps those six points are really just randomly distributed about a straight line.) Goodness of fit is generally determined using a likelihood ratio approach, or an approximation of this, leading to a chi-squared test. The complexity is generally measured by counting the number of parameters in the model.
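The six-point example above can be made concrete with a small sketch. The data values below are made up for illustration (points scattered about the line y = 2x + 1), and the helper names `fit_line` and `interpolate` are hypothetical. The sketch fits a two-parameter straight line by least squares and compares it with the unique polynomial of degree at most five through all six points, evaluated here in Lagrange form.

```python
# Six hypothetical points scattered about the line y = 2x + 1 (made-up data).
xs = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
ys = [1.3, 2.6, 5.4, 6.8, 9.5, 10.7]


def fit_line(xs, ys):
    """Ordinary least-squares straight line; returns (intercept, slope)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def interpolate(xs, ys, x):
    """Evaluate at x the polynomial of degree <= len(xs) - 1 that passes
    through every (xs[i], ys[i]) pair, using the Lagrange form."""
    total = 0.0
    for i, (xi, yi) in enumerate(zip(xs, ys)):
        basis = 1.0
        for j, xj in enumerate(xs):
            if j != i:
                basis *= (x - xj) / (xi - xj)
        total += yi * basis
    return total


intercept, slope = fit_line(xs, ys)

# Residual sum of squares on the six observed points for each model.
rss_line = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
rss_quintic = sum((y - interpolate(xs, ys, x)) ** 2 for x, y in zip(xs, ys))

print(f"line:    2 parameters, RSS = {rss_line:.4f}")
print(f"quintic: 6 parameters, RSS = {rss_quintic:.4f}")

# Predictions one step beyond the observed range.
x_new = 6.0
print(f"line at x = 6:    {intercept + slope * x_new:.2f}")
print(f"quintic at x = 6: {interpolate(xs, ys, x_new):.2f}")
```

The six-parameter polynomial reproduces every observation, so its residual sum of squares is zero, while the straight line leaves a small residual. Comparing the two predictions at x = 6 gives a sense of how much the extra parameters are following noise rather than the underlying trend.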

Model selection techniques can be considered as estimators of some physical quantity, such as the probability of the model producing the given data. The bias and variance are both important measures of the quality of this estimator; efficiency is also often considered.

A standard example of model selection is that of curve fitting, where, given a set of points and other background knowledge (e.g., that the points are i.i.d. samples), we must select a curve that describes the function that generated the points.
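A minimal sketch of such a curve-fitting selection follows, under several assumptions that are not from the text above: the data are simulated from a quadratic with Gaussian noise, the candidate set is polynomials of degree 0 to 5, and candidates are scored with the Gaussian least-squares forms of the Akaike and Bayesian information criteria, AIC = n ln(RSS/n) + 2k and BIC = n ln(RSS/n) + k ln n, with additive constants common to all candidates dropped. The helpers `solve`, `fit_polynomial` and `rss` are hypothetical and use only the standard library; a numerical library would normally be used for the fit.

```python
import math
import random


def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def fit_polynomial(xs, ys, degree):
    """Least-squares coefficients (lowest power first) via the normal equations."""
    size = degree + 1
    gram = [[sum(x ** (i + j) for x in xs) for j in range(size)] for i in range(size)]
    rhs = [sum(y * x ** i for x, y in zip(xs, ys)) for i in range(size)]
    return solve(gram, rhs)


def rss(xs, ys, coeffs):
    """Residual sum of squares of the fitted polynomial on the data."""
    def predict(x):
        return sum(c * x ** k for k, c in enumerate(coeffs))
    return sum((y - predict(x)) ** 2 for x, y in zip(xs, ys))


# Simulated data (an assumption for illustration): quadratic trend plus noise.
rng = random.Random(0)
n = 30
xs = [-1.0 + 2.0 * i / (n - 1) for i in range(n)]
ys = [1.0 + 2.0 * x - 3.0 * x ** 2 + rng.gauss(0.0, 0.3) for x in xs]

results = {}  # degree -> (RSS, AIC, BIC)
for degree in range(6):
    r = rss(xs, ys, fit_polynomial(xs, ys, degree))
    k = degree + 2  # polynomial coefficients plus the noise variance
    fit_term = n * math.log(r / n)
    results[degree] = (r, fit_term + 2 * k, fit_term + k * math.log(n))

for degree, (r, aic, bic) in results.items():
    print(f"degree {degree}: RSS = {r:8.3f}  AIC = {aic:8.2f}  BIC = {bic:8.2f}")

best_aic = min(results, key=lambda d: results[d][1])
best_bic = min(results, key=lambda d: results[d][2])
print("degree chosen by AIC:", best_aic)
print("degree chosen by BIC:", best_bic)
```

The residual sum of squares can only decrease as the degree grows, so goodness of fit alone would always favour the most complex candidate. Both criteria add a penalty that grows with the parameter count k, and for n ≥ 8 the BIC penalty per parameter (ln n) exceeds that of AIC (2).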

Methods to assist in choosing the set of candidate models

- Data transformation (statistics)

- Exploratory data analysis

- Model specification

- Scientific method

Criteria

Below is a list of criteria for model selection. The most commonly used criteria are (i) the Akaike information criterion and (ii) the Bayes factor and/or the Bayesian information criterion (which to some extent approximates the Bayes factor).

- Akaike information criterion (AIC), a measure of the goodness of fit of an estimated statistical model

- Bayes factor

- Bayesian information criterion (BIC), also known as the Schwarz information criterion, a statistical criterion for model selection

- Cross-validation

- Deviance information criterion (DIC), another Bayesian-oriented model selection criterion

- False discovery rate

- Focused information criterion (FIC), a selection criterion sorting statistical models by their effectiveness for a given focus parameter

- Hannan–Quinn information criterion, an alternative to the Akaike and Bayesian criteria

- Kashyap information criterion (KIC), an alternative to AIC and BIC that uses the Fisher information matrix

- Likelihood-ratio test

- Mallows's Cp

- Minimum description length

- Minimum message length (MML)

- PRESS statistic, also known as the PRESS criterion

- Structural risk minimization

- Stepwise regression

- Watanabe–Akaike information criterion (WAIC), also called the widely applicable information criterion

- Extended Bayesian Information Criterion (EBIC), an extension of the ordinary Bayesian information criterion (BIC) for models with large parameter spaces

- Extended Fisher Information Criterion (EFIC), a model selection criterion for linear regression models

Among these criteria, cross-validation is typically the most accurate, and computationally the most expensive, for supervised learning problems.
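As an illustration of cross-validation as a selection criterion, the sketch below applies leave-one-out cross-validation to two candidate models, a constant and a straight line. The data values are made up (roughly y = 2x + 1 plus small perturbations), and the function names `fit_constant`, `fit_line` and `loo_cv_error` are hypothetical.

```python
# Hypothetical observations, roughly y = 2x + 1 plus small perturbations.
xs = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0]
ys = [1.2, 2.7, 5.4, 6.6, 9.3, 10.8, 13.1, 15.4, 16.7, 19.2]


def fit_constant(xs, ys):
    """Candidate 1: predict the training mean everywhere."""
    mean_y = sum(ys) / len(ys)
    return lambda x: mean_y


def fit_line(xs, ys):
    """Candidate 2: ordinary least-squares straight line."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return lambda x: intercept + slope * x


def loo_cv_error(fit, xs, ys):
    """Mean squared prediction error under leave-one-out cross-validation.

    Each point is held out in turn, the model is refitted on the remaining
    points, and the held-out point is predicted from that refit.
    """
    errors = []
    for i in range(len(xs)):
        train_x = xs[:i] + xs[i + 1:]
        train_y = ys[:i] + ys[i + 1:]
        predict = fit(train_x, train_y)
        errors.append((ys[i] - predict(xs[i])) ** 2)
    return sum(errors) / len(errors)


candidates = {"constant": fit_constant, "line": fit_line}
cv_errors = {name: loo_cv_error(fit, xs, ys) for name, fit in candidates.items()}
best = min(cv_errors, key=cv_errors.get)

for name, err in cv_errors.items():
    print(f"{name}: leave-one-out mean squared error = {err:.3f}")
print("selected model:", best)
```

Each candidate is refitted once per held-out point, which is the source of the computational cost mentioned above: with n observations and m candidates, this scheme performs n × m fits.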

Burnham & Anderson (2002, §6.3) say the following:

There is a variety of model selection methods. However, from the point of view of statistical performance of a method, and intended context of its use, there are only two distinct classes of methods: These have been labeled efficient and consistent. .... Under the frequentist paradigm for model selection one generally has three main approaches: (I) optimization of some selection criteria, (II) tests of hypotheses, and (III) ad hoc methods.

See also

- All models are wrong

- Analysis of competing hypotheses

- Automated machine learning (AutoML)

- Bias-variance dilemma

- Feature selection

- Freedman's paradox

- Grid search

- Identifiability analysis

- Log-linear analysis

- Model identification

- Occam's razor

- Optimal design

- Parameter identification problem

- Scientific modelling

- Statistical model validation

- Stein's paradox

Notes

- Shirangi, Mehrdad G.; Durlofsky, Louis J. (2016). "A general method to select representative models for decision making and optimization under uncertainty". Computers & Geosciences. 96: 109–123. Bibcode:2016CG.....96..109S. doi:10.1016/j.cageo.2016.08.002.

References

- Aho, K.; Derryberry, D.; Peterson, T. (2014), "Model selection for ecologists: the worldviews of AIC and BIC", Ecology, 95 (3): 631–636, doi:10.1890/13-1452.1, PMID 24804445

- Akaike, H. (1994), "Implications of informational point of view on the development of statistical science", in Bozdogan, H. (ed.), Proceedings of the First US/JAPAN Conference on The Frontiers of Statistical Modeling: An Informational Approach—Volume 3, Kluwer Academic Publishers, pp. 27–38

- Anderson, D.R. (2008), Model Based Inference in the Life Sciences, Springer, ISBN 9780387740751

- Ando, T. (2010), Bayesian Model Selection and Statistical Modeling, CRC Press, ISBN 9781439836156

- Breiman, L. (2001), "Statistical modeling: the two cultures", Statistical Science, 16: 199–231, doi:10.1214/ss/1009213726

- Burnham, K.P.; Anderson, D.R. (2002), Model Selection and Multimodel Inference: A Practical Information-Theoretic Approach (2nd ed.), Springer-Verlag, ISBN 0-387-95364-7 [this has over 38000 citations on Google Scholar]

- Chamberlin, T.C. (1890), "The method of multiple working hypotheses", Science, 15 (366): 92–6, Bibcode:1890Sci....15R..92., doi:10.1126/science.ns-15.366.92, PMID 17782687 (reprinted 1965, Science 148: 754–759 doi:10.1126/science.148.3671.754)

- Claeskens, G. (2016), "Statistical model choice" (PDF), Annual Review of Statistics and Its Application, 3 (1): 233–256, Bibcode:2016AnRSA...3..233C, doi:10.1146/annurev-statistics-041715-033413

- Claeskens, G.; Hjort, N.L. (2008), Model Selection and Model Averaging, Cambridge University Press, ISBN 9781139471800

- Cox, D.R. (2006), Principles of Statistical Inference, Cambridge University Press

- Kashyap, R.L. (1982), "Optimal choice of AR and MA parts in autoregressive moving average models", IEEE Transactions on Pattern Analysis and Machine Intelligence, IEEE, PAMI-4 (2): 99–104, doi:10.1109/TPAMI.1982.4767213

- Konishi, S.; Kitagawa, G. (2008), Information Criteria and Statistical Modeling, Springer, Bibcode:2007icsm.book.....K, ISBN 9780387718866

- Lahiri, P. (2001), Model Selection, Institute of Mathematical Statistics

- Leeb, H.; Pötscher, B. M. (2009), "Model selection", in Anderson, T. G. (ed.), Handbook of Financial Time Series, Springer, pp. 889–925, doi:10.1007/978-3-540-71297-8_39, ISBN 978-3-540-71296-1

- Lukacs, P. M.; Thompson, W. L.; Kendall, W. L.; Gould, W. R.; Doherty, P. F. Jr.; Burnham, K. P.; Anderson, D. R. (2007), "Concerns regarding a call for pluralism of information theory and hypothesis testing", Journal of Applied Ecology, 44 (2): 456–460, doi:10.1111/j.1365-2664.2006.01267.x

- McQuarrie, Allan D. R.; Tsai, Chih-Ling (1998), Regression and Time Series Model Selection, Singapore: World Scientific, ISBN 981-02-3242-X

- Massart, P. (2007), Concentration Inequalities and Model Selection, Springer

- Massart, P. (2014), "A non-asymptotic walk in probability and statistics", in Lin, Xihong (ed.), Past, Present, and Future of Statistical Science, Chapman & Hall, pp. 309–321

- Navarro, D. J. (2019), "Between the Devil and the Deep Blue Sea: Tensions between scientific judgement and statistical model selection", Computational Brain & Behavior, 2: 28–34, doi:10.1007/s42113-018-0019-z

- Resende, Paulo Angelo Alves; Dorea, Chang Chung Yu (2016), "Model identification using the Efficient Determination Criterion", Journal of Multivariate Analysis, 150: 229–244, arXiv:1409.7441, doi:10.1016/j.jmva.2016.06.002

- Shmueli, G. (2010), "To explain or to predict?", Statistical Science, 25 (3): 289–310, arXiv:1101.0891, doi:10.1214/10-STS330, MR 2791669

- Wit, E.; van den Heuvel, E.; Romeijn, J.-W. (2012), "'All models are wrong...': an introduction to model uncertainty" (PDF), Statistica Neerlandica, 66 (3): 217–236, doi:10.1111/j.1467-9574.2012.00530.x

- Wit, E.; McCullagh, P. (2001), Viana, M. A. G.; Richards, D. St. P. (eds.), "The extendibility of statistical models", Algebraic Methods in Statistics and Probability, pp. 327–340

- Wójtowicz, Anna; Bigaj, Tomasz (2016), "Justification, confirmation, and the problem of mutually exclusive hypotheses", in Kuźniar, Adrian; Odrowąż-Sypniewska, Joanna (eds.), Uncovering Facts and Values, Brill Publishers, pp. 122–143, doi:10.1163/9789004312654_009, ISBN 9789004312654

- Owrang, Arash; Jansson, Magnus (2018), "A Model Selection Criterion for High-Dimensional Linear Regression", IEEE Transactions on Signal Processing, 66: 3436–3446, doi:10.1109/TSP.2018.2821628, ISSN 1941-0476