Remote sensing

Remote sensing is the acquisition of information about an object or phenomenon without making physical contact with it, in contrast to on-site observation. The term is applied especially to acquiring information about the Earth. Remote sensing is used in numerous fields, including geography, land surveying and most Earth science disciplines (for example, hydrology, ecology, meteorology, oceanography, glaciology, geology); it also has military, intelligence, commercial, economic, planning, and humanitarian applications.

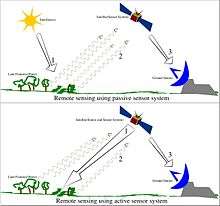

In current usage, the term "remote sensing" generally refers to the use of satellite- or aircraft-based sensor technologies to detect and classify objects on Earth, including on the surface and in the atmosphere and oceans, based on propagated signals (e.g. electromagnetic radiation). It may be split into "active" remote sensing (when a signal is emitted by a satellite or aircraft towards the object and its reflection is detected by the sensor) and "passive" remote sensing (when the reflection of sunlight is detected by the sensor).[1][2][3][4][5]

Overview

Passive sensors gather radiation that is emitted or reflected by the object or surrounding areas. Reflected sunlight is the most common source of radiation measured by passive sensors. Examples of passive remote sensors include film photography, infrared, charge-coupled devices, and radiometers. Active collection, on the other hand, emits energy in order to scan objects and areas; a sensor then detects and measures the radiation that is reflected or backscattered from the target. RADAR and LiDAR are examples of active remote sensing in which the time delay between emission and return is measured, establishing the location, speed and direction of an object.
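The time-delay principle used by active sensors can be illustrated with a short calculation. The following is a minimal sketch, assuming the signal travels at the vacuum speed of light and ignoring atmospheric delay; the function names are illustrative only. The Doppler relation for radial speed is an added assumption about how speed is commonly obtained, since the paragraph above mentions only the time delay.

```python
# Speed of light in vacuum, in metres per second (exact SI value).
SPEED_OF_LIGHT = 299_792_458.0


def range_from_delay(round_trip_seconds: float) -> float:
    """Distance to the target in metres, given the round-trip time of a pulse.

    The pulse travels to the target and back, so the one-way distance
    is half of the total path length.
    """
    return SPEED_OF_LIGHT * round_trip_seconds / 2.0


def radial_speed_from_doppler(shift_hz: float, carrier_hz: float) -> float:
    """Radial speed of a target in m/s from the two-way Doppler shift.

    Uses the non-relativistic approximation v = c * shift / (2 * f0),
    which holds when the target moves much more slowly than light.
    A positive shift corresponds to an approaching target.
    """
    return SPEED_OF_LIGHT * shift_hz / (2.0 * carrier_hz)


if __name__ == "__main__":
    # A pulse that returns 4 milliseconds after emission.
    print(range_from_delay(4e-3), "m")
    # A hypothetical 10 GHz radar observing a 2 kHz Doppler shift.
    print(radial_speed_from_doppler(2_000.0, 10e9), "m/s")
```

A single delay gives only the distance along the beam; location and direction additionally require knowledge of where the sensor was pointing.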

Remote sensing makes it possible to collect data on dangerous or inaccessible areas. Remote sensing applications include monitoring deforestation in areas such as the Amazon Basin, glacial features in Arctic and Antarctic regions, and depth sounding of coastal and ocean depths. Military collection during the Cold War made use of stand-off collection of data about dangerous border areas. Remote sensing also replaces costly and slow data collection on the ground, ensuring in the process that areas or objects are not disturbed.

Orbital platforms collect and transmit data from different parts of the electromagnetic spectrum, which, in conjunction with larger-scale aerial or ground-based sensing and analysis, provide researchers with enough information to monitor trends such as El Niño and other natural long- and short-term phenomena. Other uses include different areas of the earth sciences such as natural resource management, agricultural fields such as land usage and conservation,[6][7] oil spill detection and monitoring,[8] and national security, including overhead, ground-based and stand-off collection on border areas.[9]

Types of data acquisition techniques

Multispectral collection and analysis rests on the fact that examined areas or objects reflect or emit radiation that stands out from that of surrounding areas. For a summary of major remote sensing satellite systems see the overview table.

Applications of remote sensing

- Conventional radar is mostly associated with aerial traffic control, early warning, and certain large-scale meteorological data. Doppler radar is used by local law enforcement to monitor speed limits and in enhanced meteorological collection, such as wind speed and direction within weather systems, in addition to precipitation location and intensity. Other targets of active collection include plasmas in the ionosphere. Interferometric synthetic aperture radar is used to produce precise digital elevation models of large-scale terrain (see RADARSAT, TerraSAR-X, Magellan).

- Laser and radar altimeters on satellites have provided a wide range of data. By measuring the bulges of water caused by gravity, they map features on the seafloor to a resolution of a mile or so. By measuring the height and wavelength of ocean waves, the altimeters measure wind speeds and direction, and surface ocean currents and directions.

- Ultrasound (acoustic) and radar tide gauges measure sea level, tides and wave direction at coastal and offshore locations.

- Light detection and ranging (LIDAR) is well known from weapon ranging and the laser-illuminated homing of projectiles. LIDAR is used to detect and measure the concentration of various chemicals in the atmosphere, while airborne LIDAR can be used to measure heights of objects and features on the ground more accurately than with radar technology. Vegetation remote sensing is a principal application of LIDAR.

- Radiometers and photometers are the most common instruments in use, collecting reflected and emitted radiation in a wide range of frequencies. The most common are visible and infrared sensors, followed by microwave, gamma-ray and, rarely, ultraviolet. They may also be used to detect the emission spectra of various chemicals, providing data on chemical concentrations in the atmosphere.

- Radiometers are also used at night, because artificial light emissions are a key signature of human activity.[10] Applications include remote sensing of population, GDP, and damage to infrastructure from war or disasters.

- Spectropolarimetric imaging has been reported to be useful for target tracking purposes by researchers at the U.S. Army Research Laboratory. They determined that manmade items possess polarimetric signatures that are not found in natural objects. These conclusions were drawn from imaging military trucks, like the Humvee, and trailers with their acousto-optic tunable filter dual hyperspectral and spectropolarimetric VNIR Spectropolarimetric Imager.[11][12]

- Stereographic pairs of aerial photographs have often been used to make topographic maps; imagery and terrain analysts in trafficability and highway departments use them to assess potential routes, and they are also used for modelling terrestrial habitat features.[13][14][15]

- Simultaneous multi-spectral platforms such as Landsat have been in use since the 1970s. These thematic mappers take images in multiple wavelengths of electromagnetic radiation (multi-spectral) and are usually found on Earth observation satellites, including (for example) the Landsat program or the IKONOS satellite. Maps of land cover and land use from thematic mapping can be used to prospect for minerals, detect or monitor land usage, detect invasive vegetation and deforestation, and examine the health of indigenous plants and crops, including entire farming regions or forests.[4][1] Prominent scientists using remote sensing for this purpose include Janet Franklin and Ruth DeFries. Landsat images are used by regulatory agencies such as KYDOW to indicate water quality parameters including Secchi depth, chlorophyll a density and total phosphorus content. Weather satellites are used in meteorology and climatology.

- Hyperspectral imaging produces an image where each pixel has full spectral information, obtained by imaging narrow spectral bands over a contiguous spectral range. Hyperspectral imagers are used in various applications including mineralogy, biology, defence, and environmental measurements.

- In efforts to combat desertification, remote sensing allows researchers to follow up and monitor risk areas in the long term, to determine desertification factors, to support decision-makers in defining relevant measures of environmental management, and to assess their impacts.[16]

Geodetic

- Geodetic remote sensing can be gravimetric or geometric. Overhead gravity data collection was first used in aerial submarine detection. This data revealed minute perturbations in the Earth's gravitational field that may be used to determine changes in the mass distribution of the Earth, which in turn may be used for geophysical studies, as in GRACE. Geometric remote sensing includes position and deformation imaging using InSAR, LIDAR, etc.[17]

Acoustic and near-acoustic

- Sonar: passive sonar, listening for the sound made by another object (a vessel, a whale etc.); active sonar, emitting pulses of sounds and listening for echoes, used for detecting, ranging and measurements of underwater objects and terrain.

- Seismograms taken at different locations can locate and measure earthquakes (after they occur) by comparing the relative intensity and precise timings.

- Ultrasound: ultrasound sensors emit high-frequency pulses and listen for echoes; they are used for detecting water waves and water level, as in tide gauges or towing tanks.

To coordinate a series of large-scale observations, most sensing systems depend on the following: platform location and the orientation of the sensor. High-end instruments now often use positional information from satellite navigation systems. The rotation and orientation are often provided within a degree or two by electronic compasses. Compasses can measure not just azimuth (i.e. degrees to magnetic north), but also altitude (degrees above the horizon), since the magnetic field curves into the Earth at different angles at different latitudes. More exact orientations require gyroscopic-aided orientation, periodically realigned by different methods including navigation from stars or known benchmarks.

Data characteristics

The quality of remote sensing data is characterised by its spatial, spectral, radiometric and temporal resolutions.

- Spatial resolution

- The size of a pixel that is recorded in a raster image – typically pixels may correspond to square areas ranging in side length from 1 to 1,000 metres (3.3 to 3,280.8 ft).

- Spectral resolution

- The wavelength width of the different frequency bands recorded – usually, this is related to the number of frequency bands recorded by the platform. Current Landsat collection comprises seven bands, including several in the infrared spectrum, ranging from a spectral resolution of 0.7 to 2.1 μm. The Hyperion sensor on Earth Observing-1 resolves 220 bands from 0.4 to 2.5 μm, with a spectral resolution of 0.10 to 0.11 μm per band.

- Radiometric resolution

- The number of different intensities of radiation the sensor is able to distinguish. Typically, this ranges from 8 to 14 bits, corresponding to 256 levels of the gray scale and up to 16,384 intensities or "shades" of colour, in each band. It also depends on the instrument noise.

- Temporal resolution

- The frequency of flyovers by the satellite or plane; it is only relevant in time-series studies or those requiring an averaged or mosaic image, as in deforestation monitoring. This was first used by the intelligence community, where repeated coverage revealed changes in infrastructure, the deployment of units or the modification/introduction of equipment. Cloud cover over a given area or object makes it necessary to repeat the collection of said location.
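The figures quoted for radiometric and spatial resolution follow from simple arithmetic, sketched below. The function names are illustrative, and the pixel count assumes square, non-overlapping pixels, which is a simplification.

```python
import math


def intensity_levels(bits: int) -> int:
    """Number of distinct intensities a sensor with the given bit depth can record."""
    return 2 ** bits


def pixels_to_cover(area_km2: float, pixel_side_m: float) -> int:
    """Number of square pixels needed to cover an area, rounded up.

    Assumes square pixels that tile the area without overlap.
    """
    area_m2 = area_km2 * 1_000_000.0
    return math.ceil(area_m2 / (pixel_side_m * pixel_side_m))


if __name__ == "__main__":
    for bits in (8, 14):
        print(bits, "bits:", intensity_levels(bits), "levels")
    # One square kilometre at the two ends of the 1 to 1,000 metre range.
    print(pixels_to_cover(1.0, 1.0), "pixels at 1 m")
    print(pixels_to_cover(1.0, 1000.0), "pixel at 1,000 m")
```

An 8-bit sensor therefore distinguishes 256 levels and a 14-bit sensor 16,384, matching the values given above; the usable number of levels is lower when instrument noise is significant.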

Data processing

In order to create sensor-based maps, most remote sensing systems need to extrapolate sensor data in relation to a reference point, including distances between known points on the ground. How this is done depends on the type of sensor used. For example, in conventional photographs, distances are accurate in the center of the image, with the distortion of measurements increasing the farther you get from the center. Another factor is that the platen against which the film is pressed can cause severe errors when photographs are used to measure ground distances. The step in which this problem is resolved is called georeferencing; it involves computer-aided matching of points in the image (typically 30 or more points per image) to an established benchmark, from which the correction is extrapolated, "warping" the image to produce accurate spatial data. As of the early 1990s, most satellite images are sold fully georeferenced.
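The point-matching step of georeferencing can be sketched as a least-squares fit. The example below assumes the simplest useful model, an affine transform from pixel (column, row) to ground (x, y) coordinates; production software often uses higher-order polynomial or sensor-specific models, and the function names and coordinates here are hypothetical.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]
Affine = Tuple[List[float], List[float]]  # ([a, b, c], [d, e, f])


def _solve_3x3(matrix: List[List[float]], rhs: List[float]) -> List[float]:
    """Solve a 3x3 linear system by Gaussian elimination with partial pivoting."""
    aug = [row[:] + [value] for row, value in zip(matrix, rhs)]
    n = 3
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("control points are collinear; cannot fit a transform")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    solution = [0.0] * n
    for r in reversed(range(n)):
        known = sum(aug[r][c] * solution[c] for c in range(r + 1, n))
        solution[r] = (aug[r][n] - known) / aug[r][r]
    return solution


def fit_affine(pixels: Sequence[Point], ground: Sequence[Point]) -> Affine:
    """Least-squares affine transform mapping pixel (col, row) to ground (x, y).

    Solves the normal equations (A^T A) p = A^T b once per ground axis,
    where each row of A is [col, row, 1].
    """
    if len(pixels) != len(ground) or len(pixels) < 3:
        raise ValueError("need at least three matched control points")
    rows = [(col, row, 1.0) for col, row in pixels]
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    coeffs = []
    for axis in range(2):
        atb = [sum(r[i] * g[axis] for r, g in zip(rows, ground)) for i in range(3)]
        coeffs.append(_solve_3x3(ata, atb))
    return coeffs[0], coeffs[1]


def apply_affine(transform: Affine, pixel: Point) -> Point:
    """Map one pixel coordinate to ground coordinates."""
    (a, b, c), (d, e, f) = transform
    col, row = pixel
    return (a * col + b * row + c, d * col + e * row + f)


if __name__ == "__main__":
    # Four hypothetical control points on a 30 m grid, north-up image.
    pixel_points = [(0, 0), (100, 0), (0, 100), (100, 100)]
    ground_points = [(500000, 4000000), (503000, 4000000),
                     (500000, 3997000), (503000, 3997000)]
    transform = fit_affine(pixel_points, ground_points)
    print(apply_affine(transform, (50, 50)))
```

Using more control points than the minimum of three, as the paragraph above suggests, lets the least-squares fit average out errors in individual matches.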

In addition, images may need to be radiometrically and atmospherically corrected.

- Radiometric correction

- Allows avoidance of radiometric errors and distortions. The illumination of objects on the Earth's surface is uneven because of different properties of the relief. This factor is taken into account in the method of radiometric distortion correction.[18] Radiometric correction gives a scale to the pixel values, e.g. the monochromatic scale of 0 to 255 will be converted to actual radiance values.

- Topographic correction (also called terrain correction)

- In rugged mountains, the effective illumination of pixels varies considerably as a result of terrain. In a remote sensing image, a pixel on the shady slope receives weak illumination and has a low radiance value, whereas a pixel on the sunny slope receives strong illumination and has a high radiance value. For the same object, the pixel radiance value on the shady slope will therefore differ from that on the sunny slope. Additionally, different objects may have similar radiance values. These ambiguities seriously affect the accuracy of information extraction from remote sensing images in mountainous areas and are a main obstacle to the further application of such images. The purpose of topographic correction is to eliminate this effect, recovering the true reflectivity or radiance of objects in horizontal conditions. It is a prerequisite for quantitative remote sensing applications.

- Atmospheric correction

- Elimination of atmospheric haze by rescaling each frequency band so that its minimum value (usually realised in water bodies) corresponds to a pixel value of 0. The digitizing of data also makes it possible to manipulate the data by changing gray-scale values.
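Two of the corrections listed above reduce to per-pixel arithmetic and can be sketched briefly. The example assumes a linear sensor response for the radiometric step; the gain and offset are hypothetical placeholders for values that would come from a sensor's calibration data. The haze step implements only the minimum-value rescaling described above, not a physical atmospheric model.

```python
from typing import List

Band = List[List[float]]  # one spectral band as rows of pixel values


def dn_to_radiance(band: Band, gain: float, offset: float) -> Band:
    """Convert raw digital numbers (e.g. 0 to 255) to radiance.

    Assumes a linear response: radiance = gain * DN + offset.
    """
    return [[gain * dn + offset for dn in row] for row in band]


def subtract_dark_object(band: Band) -> Band:
    """Shift a band so that its darkest pixel becomes 0.

    The darkest pixel (often over deep water) is taken to contain only
    haze, so its value is subtracted from every pixel in the band.
    """
    darkest = min(min(row) for row in band)
    return [[value - darkest for value in row] for row in band]


if __name__ == "__main__":
    raw = [[12, 40], [15, 12]]
    print(subtract_dark_object(raw))
    # Hypothetical calibration coefficients, for illustration only.
    print(dn_to_radiance(raw, gain=0.5, offset=1.0))
```

Each band is corrected separately because haze affects shorter wavelengths more strongly than longer ones.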

Interpretation is the critical process of making sense of the data. The first application was aerial photographic collection, which used the following process: spatial measurement through the use of a light table in both conventional single or stereographic coverage; added skills such as the use of photogrammetry; the use of photomosaics; repeat coverage; and making use of objects' known dimensions in order to detect modifications. Image analysis is the recently developed automated computer-aided application, which is in increasing use.

Object-Based Image Analysis (OBIA) is a sub-discipline of GIScience devoted to partitioning remote sensing (RS) imagery into meaningful image-objects, and assessing their characteristics through spatial, spectral and temporal scale.

Old data from remote sensing is often valuable because it may provide the only long-term data for a large extent of geography. At the same time, the data is often complex to interpret, and bulky to store. Modern systems tend to store the data digitally, often with lossless compression. The difficulty with this approach is that the data is fragile, the format may be archaic, and the data may be easy to falsify. One of the best systems for archiving data series is as computer-generated machine-readable ultrafiche, usually in typefonts such as OCR-B, or as digitized half-tone images. Ultrafiches survive well in standard libraries, with lifetimes of several centuries. They can be created, copied, filed and retrieved by automated systems. They are about as compact as archival magnetic media, and yet can be read by human beings with minimal, standardized equipment.

Generally speaking, remote sensing works on the principle of the inverse problem: while the object or phenomenon of interest (the state) may not be directly measured, there exists some other variable that can be detected and measured (the observation) which may be related to the object of interest through a calculation. The common analogy given to describe this is trying to determine the type of animal from its footprints. For example, while it is impossible to directly measure temperatures in the upper atmosphere, it is possible to measure the spectral emissions from a known chemical species (such as carbon dioxide) in that region. The frequency of the emissions may then be related via thermodynamics to the temperature in that region.
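The inverse-problem idea can be made concrete with a simplified case. The sketch below assumes the emitting layer behaves as a blackbody, so that Planck's law links the observed spectral radiance at one wavelength to a temperature; inverting that law yields the so-called brightness temperature. Real atmospheric retrievals are considerably more involved, and the function names and the 15 μm wavelength are illustrative choices.

```python
import math

PLANCK = 6.62607015e-34          # Planck constant, J s
BOLTZMANN = 1.380649e-23         # Boltzmann constant, J/K
SPEED_OF_LIGHT = 299_792_458.0   # m/s


def planck_radiance(wavelength_m: float, temperature_k: float) -> float:
    """Forward model: blackbody spectral radiance in W m^-2 sr^-1 m^-1."""
    prefactor = 2.0 * PLANCK * SPEED_OF_LIGHT ** 2 / wavelength_m ** 5
    exponent = PLANCK * SPEED_OF_LIGHT / (wavelength_m * BOLTZMANN * temperature_k)
    return prefactor / math.expm1(exponent)


def brightness_temperature(wavelength_m: float, radiance: float) -> float:
    """Inverse model: temperature of a blackbody that would emit this radiance."""
    prefactor = 2.0 * PLANCK * SPEED_OF_LIGHT ** 2 / wavelength_m ** 5
    scale = PLANCK * SPEED_OF_LIGHT / (wavelength_m * BOLTZMANN)
    return scale / math.log1p(prefactor / radiance)


if __name__ == "__main__":
    wavelength = 15e-6  # metres; an infrared wavelength chosen for illustration
    observed = planck_radiance(wavelength, 220.0)   # simulate an observation
    print(brightness_temperature(wavelength, observed))  # recovers about 220 K
```

Here the radiance plays the role of the observation and the temperature that of the state: the forward model is known, and the measurement is interpreted by running it backwards.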

Data processing levels

To facilitate the discussion of data processing in practice, several processing "levels" were first defined in 1986 by NASA as part of its Earth Observing System[19] and steadily adopted since then, both internally at NASA (e.g.,[20]) and elsewhere (e.g.,[21]); these definitions are:

| Level | Description |
|---|---|
| 0 | Reconstructed, unprocessed instrument and payload data at full resolution, with any and all communications artifacts (e.g., synchronization frames, communications headers, duplicate data) removed. |
| 1a | Reconstructed, unprocessed instrument data at full resolution, time-referenced, and annotated with ancillary information, including radiometric and geometric calibration coefficients and georeferencing parameters (e.g., platform ephemeris) computed and appended but not applied to the Level 0 data (or if applied, in a manner that Level 0 is fully recoverable from Level 1a data). |
| 1b | Level 1a data that have been processed to sensor units (e.g., radar backscatter cross section, brightness temperature, etc.); not all instruments have Level 1b data; Level 0 data is not recoverable from Level 1b data. |
| 2 | Derived geophysical variables (e.g., ocean wave height, soil moisture, ice concentration) at the same resolution and location as Level 1 source data. |
| 3 | Variables mapped on uniform spacetime grid scales, usually with some completeness and consistency (e.g., missing points interpolated, complete regions mosaicked together from multiple orbits, etc.). |
| 4 | Model output or results from analyses of lower level data (i.e., variables that were not measured by the instruments but instead are derived from these measurements). |

A Level 1 data record is the most fundamental (i.e., highest reversible level) data record that has significant scientific utility, and is the foundation upon which all subsequent data sets are produced. Level 2 is the first level that is directly usable for most scientific applications; its value is much greater than the lower levels. Level 2 data sets tend to be less voluminous than Level 1 data because they have been reduced temporally, spatially, or spectrally. Level 3 data sets are generally smaller than lower level data sets and thus can be dealt with without incurring a great deal of data handling overhead. These data tend to be more useful for many applications. The regular spatial and temporal organization of Level 3 datasets makes it feasible to readily combine data from different sources.
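The step from Level 2 to Level 3 can be illustrated by binning scattered samples onto a regular grid. The following is a minimal sketch that averages all samples falling into each latitude-longitude cell; it omits the interpolation of missing points and the mosaicking of orbits mentioned in the table, and the sample layout and function name are assumptions made for the example.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Sample = Tuple[float, float, float]  # (latitude_deg, longitude_deg, value)
Cell = Tuple[int, int]               # (latitude index, longitude index)


def grid_mean(samples: Iterable[Sample], cell_deg: float) -> Dict[Cell, float]:
    """Average samples within each cell of a uniform latitude-longitude grid.

    A cell index is the floor of the coordinate divided by the cell size,
    so cell (10, 20) with cell_deg=1.0 spans 10 to 11 degrees latitude
    and 20 to 21 degrees longitude.
    """
    sums: Dict[Cell, float] = defaultdict(float)
    counts: Dict[Cell, int] = defaultdict(int)
    for lat, lon, value in samples:
        cell = (math.floor(lat / cell_deg), math.floor(lon / cell_deg))
        sums[cell] += value
        counts[cell] += 1
    return {cell: sums[cell] / counts[cell] for cell in sums}


if __name__ == "__main__":
    # Hypothetical Level 2 retrievals along a swath.
    swath = [(10.2, 20.1, 1.0), (10.8, 20.9, 3.0), (11.5, 20.5, 5.0)]
    print(grid_mean(swath, cell_deg=1.0))
```

Because every product gridded this way shares the same cell indices, values from different sensors or dates can be compared cell by cell, which is the ease of combination noted above.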

While these processing levels are particularly suitable for typical satellite data processing pipelines, other data level vocabularies have been defined and may be appropriate for more heterogeneous workflows.

History

The modern discipline of remote sensing arose with the development of flight. The balloonist G. Tournachon (alias Nadar) made photographs of Paris from his balloon in 1858.[22] Messenger pigeons, kites, rockets and unmanned balloons were also used for early images. With the exception of balloons, these first, individual images were not particularly useful for map making or for scientific purposes.

Systematic aerial photography was developed for military surveillance and reconnaissance purposes beginning in World War I[23] and reaching a climax during the Cold War with the use of modified combat aircraft such as the P-51, P-38, RB-66 and the F-4C, or specifically designed collection platforms such as the U2/TR-1, SR-71, A-5 and the OV-1 series both in overhead and stand-off collection.[24] A more recent development is that of increasingly smaller sensor pods such as those used by law enforcement and the military, in both manned and unmanned platforms. The advantage of this approach is that this requires minimal modification to a given airframe. Later imaging technologies would include infrared, conventional, Doppler and synthetic aperture radar.[25]

The development of artificial satellites in the latter half of the 20th century allowed remote sensing to progress to a global scale as of the end of the Cold War.[26] Instrumentation aboard various Earth observing and weather satellites such as Landsat and Nimbus, and more recent missions such as RADARSAT and UARS, provided global measurements of various data for civil, research, and military purposes. Space probes to other planets have also provided the opportunity to conduct remote sensing studies in extraterrestrial environments: synthetic aperture radar aboard the Magellan spacecraft provided detailed topographic maps of Venus, while instruments aboard SOHO allowed studies to be performed on the Sun and the solar wind, to name just a few examples.[27][28]

More recent developments began in the 1960s and 1970s with the development of image processing of satellite imagery. Several research groups in Silicon Valley, including NASA Ames Research Center, GTE, and ESL Inc., developed Fourier transform techniques leading to the first notable enhancement of imagery data. In 1999 the first commercial satellite (IKONOS) collecting very high resolution imagery was launched.[29]

Training and education

Remote sensing has a growing relevance in the modern information society. It represents a key technology as part of the aerospace industry and bears increasing economic relevance: new sensors, e.g. TerraSAR-X and RapidEye, are developed constantly and the demand for skilled labour is increasing steadily. Furthermore, remote sensing strongly influences everyday life, ranging from weather forecasts to reports on climate change or natural disasters. As an example, 80% of German students use the services of Google Earth; in 2006 alone the software was downloaded 100 million times. But studies have shown that only a fraction of them know much about the data they are working with.[30] There exists a huge knowledge gap between the application and the understanding of satellite images. Remote sensing plays only a tangential role in schools, regardless of the political claims to strengthen the support for teaching on the subject.[31] A lot of the computer software explicitly developed for school lessons has not yet been implemented due to its complexity. As a result, the subject is either not integrated into the curriculum at all or does not go beyond the interpretation of analogue images. In fact, the subject of remote sensing requires a consolidation of physics and mathematics as well as competences in the fields of media and methods, apart from the mere visual interpretation of satellite images.

Many teachers have great interest in the subject of remote sensing and are motivated to integrate it into teaching, provided that the curriculum is considered. In many cases, this encouragement fails because of confusing information.[32] In order to integrate remote sensing in a sustainable manner, organizations like the EGU or Digital Earth[33] encourage the development of learning modules and learning portals. Examples include FIS – Remote Sensing in School Lessons,[34] Geospektiv,[35] Ychange,[36] and Spatial Discovery,[37] which promote media and method qualifications as well as independent learning.

Software

Remote sensing data are processed and analyzed with computer software, known as a remote sensing application. A large number of proprietary and open source applications exist to process remote sensing data. Remote sensing software packages include:

- ERDAS IMAGINE from Hexagon Geospatial (separated from Intergraph SG&I)

- ENVI from Harris Geospatial Solutions

- PCI Geomatica

- TNTmips from MicroImages

- IDRISI from Clark Labs

- eCognition from Trimble

- RemoteView from Overwatch Textron Systems

- Dragon/ips, one of the oldest remote sensing packages still available, which is in some cases free

Open source remote sensing software includes:

- Opticks

- Orfeo toolbox

- Sentinel Application Platform (SNAP) from the European Space Agency (ESA)

- Others mixing remote sensing and GIS capabilities are: GRASS GIS, ILWIS, QGIS, and TerraLook.

According to NOAA-sponsored research by Global Marketing Insights, Inc., the most used applications among Asian academic groups involved in remote sensing are as follows: ERDAS 36% (ERDAS IMAGINE 25% & ERMapper 11%); ESRI 30%; ITT Visual Information Solutions ENVI 17%; MapInfo 17%.

Among Western academic respondents, the figures are as follows: ESRI 39%, ERDAS IMAGINE 27%, MapInfo 9%, and AutoDesk 7%.

In education, those who want to go beyond simply looking at satellite image print-outs use either general remote sensing software (e.g. QGIS), Google Earth, StoryMaps, or a software or web app developed specifically for education (e.g. desktop: LeoWorks, online: BLIF).

Satellites

See also

- Aerial photography

- Airborne Real-time Cueing Hyperspectral Enhanced Reconnaissance

- Archaeological imagery

- Cartography

- CLidar

- Coastal management

- Crateology

- Full spectral imaging

- Geography

- Geographic information system (GIS)

- GIS and hydrology

- Geoinformatics

- Geophysical survey

- Global Positioning System (GPS)

- Hyperspectral

- IEEE Geoscience and Remote Sensing Society

- Imagery analysis

- Imaging science

- Land cover

- Liquid crystal tunable filter

- List of Earth observation satellites

- Mobile mapping

- Multispectral pattern recognition

- National Center for Remote Sensing, Air and Space Law

- National LIDAR Dataset

- Normalized difference water index

- Orthophoto

- Pictometry

- Radiometry

- Remote monitoring and control

- Remote sensing (archaeology)

- Remote sensing satellite and data overview

- Satellite imagery

- Sonar

- Space probe

- TopoFlight

- Vector Map

References

- Ran, Lingyan; Zhang, Yanning; Wei, Wei; Zhang, Qilin (23 October 2017). "A Hyperspectral Image Classification Framework with Spatial Pixel Pair Features". Sensors. 17 (10): 2421. doi:10.3390/s17102421. PMC 5677443. PMID 29065535.

- Schowengerdt, Robert A. (2007). Remote sensing: models and methods for image processing (3rd ed.). Academic Press. p. 2. ISBN 978-0-12-369407-2.

- Schott, John Robert (2007). Remote sensing: the image chain approach (2nd ed.). Oxford University Press. p. 1. ISBN 978-0-19-517817-3.

- Guo, Huadong; Huang, Qingni; Li, Xinwu; Sun, Zhongchang; Zhang, Ying (2013). "Spatiotemporal analysis of urban environment based on the vegetation–impervious surface–soil model" (PDF). Journal of Applied Remote Sensing. 8: 084597. Bibcode:2014JARS....8.4597G. doi:10.1117/1.JRS.8.084597.

- Liu, Jian Guo & Mason, Philippa J. (2009). Essential Image Processing for GIS and Remote Sensing. Wiley-Blackwell. p. 4. ISBN 978-0-470-51032-2.

- "Saving the monkeys". SPIE Professional. Retrieved 1 January 2016.

- Howard, A.; et al. (19 August 2015). "Remote sensing and habitat mapping for bearded capuchin monkeys (Sapajus libidinosus): landscapes for the use of stone tools". Journal of Applied Remote Sensing. 9 (1): 096020. doi:10.1117/1.JRS.9.096020.

- C. Bayindir; J. D. Frost; C. F. Barnes (January 2018). "Assessment and enhancement of SAR noncoherent change detection of sea-surface oil spills". IEEE J. Ocean. Eng. 43 (1): 211–220. doi:10.1109/JOE.2017.2714818.

- "Archived copy". Archived from the original on 29 September 2006. Retrieved 18 February 2009.

- Levin, Noam; Kyba, Christopher C.M.; Zhang, Qingling; Sánchez de Miguel, Alejandro; Román, Miguel O.; Li, Xi; Portnov, Boris A.; Molthan, Andrew L.; Jechow, Andreas; Miller, Steven D.; Wang, Zhuosen; Shrestha, Ranjay M.; Elvidge, Christopher D. (February 2020). "Remote sensing of night lights: A review and an outlook for the future". Remote Sensing of Environment. 237: 111443. Bibcode:2020RSEnv.237k1443L. doi:10.1016/j.rse.2019.111443.

- Goldberg, A.; Stann, B.; Gupta, N. (July 2003). "Multispectral, Hyperspectral, and Three-Dimensional Imaging Research at the U.S. Army Research Laboratory" (PDF). Proceedings of the International Conference on International Fusion [6th]. 1: 499–506.

- Makki, Ihab; Younes, Rafic; Francis, Clovis; Bianchi, Tiziano; Zucchetti, Massimo (1 February 2017). "A survey of landmine detection using hyperspectral imaging". ISPRS Journal of Photogrammetry and Remote Sensing. 124: 40–53. Bibcode:2017JPRS..124...40M. doi:10.1016/j.isprsjprs.2016.12.009. ISSN 0924-2716.

- Mills, J.P.; et al. (1997). "Photogrammetry from Archived Digital Imagery for Seal Monitoring". The Photogrammetric Record. 15 (89): 715–724. doi:10.1111/0031-868X.00080.

- Twiss, S.D.; et al. (2001). "Topographic spatial characterisation of grey seal Halichoerus grypus breeding habitat at a sub-seal size spatial grain". Ecography. 24 (3): 257–266. doi:10.1111/j.1600-0587.2001.tb00198.x.

- Stewart, J.E.; et al. (2014). "Finescale ecological niche modeling provides evidence that lactating gray seals (Halichoerus grypus) prefer access to fresh water in order to drink" (PDF). Marine Mammal Science. 30 (4): 1456–1472. doi:10.1111/mms.12126.

- Begni G. Escadafal R. Fontannaz D. and Hong-Nga Nguyen A.-T. (2005). Remote sensing: a tool to monitor and assess desertification. Les dossiers thématiques du CSFD. Issue 2. 44 pp.

- Geodetic Imaging

- Grigoriev A.N. (2015). "Method of radiometric distortion correction of multispectral data for the earth remote sensing". Scientific and Technical Journal of Information Technologies, Mechanics and Optics. 15 (4): 595–602. doi:10.17586/2226-1494-2015-15-4-595-602.

- NASA (1986), Report of the EOS data panel, Earth Observing System, Data and Information System, Data Panel Report, Vol. IIa., NASA Technical Memorandum 87777, June 1986, 62 pp. Available at http://hdl.handle.net/2060/19860021622

- C. L. Parkinson, A. Ward, M. D. King (Eds.) Earth Science Reference Handbook – A Guide to NASA’s Earth Science Program and Earth Observing Satellite Missions, National Aeronautics and Space Administration Washington, D. C. Available at http://eospso.gsfc.nasa.gov/ftp_docs/2006ReferenceHandbook.pdf Archived 15 April 2010 at the Wayback Machine

- GRAS-SAF (2009), Product User Manual, GRAS Satellite Application Facility, Version 1.2.1, 31 March 2009. Available at http://www.grassaf.org/general-documents/products/grassaf_pum_v121.pdf

- Maksel, Rebecca. "Flight of the Giant". Air & Space Magazine. Retrieved 19 February 2019.

- Wakefield, Alan, Head of photographs at IWM (4 April 2014). "A bird's-eye view of the battlefield: aerial photography". Daily Telegraph. ISSN 0307-1235. Retrieved 19 February 2019.

- "Air Force Magazine". www.airforcemag.com. Retrieved 19 February 2019.

- "Military Imaging and Surveillance Technology (MIST)". www.darpa.mil. Retrieved 19 February 2019.

- "The Indian Society pf International Law - Newsletter: VOL. 15, No. 4, October - December 2016". doi:10.1163/2210-7975_hrd-9920-2016004.

- "In Depth | Magellan". Solar System Exploration: NASA Science. Retrieved 19 February 2019.

- Garner, Rob (15 April 2015). "SOHO - Solar and Heliospheric Observatory". NASA. Retrieved 19 February 2019.

- Colen, Jerry (8 April 2015). "Ames Research Center Overview". NASA. Retrieved 19 February 2019.

- Ditter, R., Haspel, M., Jahn, M., Kollar, I., Siegmund, A., Viehrig, K., Volz, D., Siegmund, A. (2012) Geospatial technologies in school – theoretical concept and practical implementation in K-12 schools. In: International Journal of Data Mining, Modelling and Management (IJDMMM): FutureGIS: Riding the Wave of a Growing Geospatial Technology Literate Society; Vol. X

- Stork, E.J., Sakamoto, S.O., and Cowan, R.M. (1999) "The integration of science explorations through the use of earth images in middle school curriculum", Proc. IEEE Trans. Geosci. Remote Sensing 37, 1801–1817

- Bednarz, S.W. and Whisenant, S.E. (2000) "Mission geography: linking national geography standards, innovative technologies and NASA", Proc. IGARSS, Honolulu, USA, 2780–2782 8

- Digital Earth

- FIS – Remote Sensing in School Lessons

- geospektiv

- YCHANGE

- Landmap – Spatial Discovery

Further reading

- Campbell, J. B. (2002). Introduction to remote sensing (3rd ed.). The Guilford Press. ISBN 978-1-57230-640-0.

- Jensen, J. R. (2007). Remote sensing of the environment: an Earth resource perspective (2nd ed.). Prentice Hall. ISBN 978-0-13-188950-7.

- Jensen, J. R. (2005). Digital Image Processing: a Remote Sensing Perspective (3rd ed.). Prentice Hall.

- Lentile, Leigh B.; Holden, Zachary A.; Smith, Alistair M. S.; Falkowski, Michael J.; Hudak, Andrew T.; Morgan, Penelope; Lewis, Sarah A.; Gessler, Paul E.; Benson, Nate C. (2006). "Remote sensing techniques to assess active fire characteristics and post-fire effects". International Journal of Wildland Fire. 3 (15): 319–345. doi:10.1071/WF05097.

- Lillesand, T. M.; R. W. Kiefer; J. W. Chipman (2003). Remote sensing and image interpretation (5th ed.). Wiley. ISBN 978-0-471-15227-9.

- Richards, J. A.; X. Jia (2006). Remote sensing digital image analysis: an introduction (4th ed.). Springer. ISBN 978-3-540-25128-6.

- US Army FM series.

- US Army military intelligence museum, FT Huachuca, AZ

- Datla, R.U.; Rice, J.P.; Lykke, K.R.; Johnson, B.C.; Butler, J.J.; Xiong, X. (March–April 2011). "Best practice guidelines for pre-launch characterization and calibration of instruments for passive optical remote sensing". Journal of Research of the National Institute of Standards and Technology. 116 (2): 612–646. doi:10.6028/jres.116.009. PMC 4550341. PMID 26989588.

- Begni G., Escadafal R., Fontannaz D. and Hong-Nga Nguyen A.-T. (2005). Remote sensing: a tool to monitor and assess desertification. Les dossiers thématiques du CSFD. Issue 2. 44 pp.

- KUENZER, C. ZHANG, J., TETZLAFF, A., and S. DECH, 2013: Thermal Infrared Remote Sensing of Surface and underground Coal Fires. In (eds.) Kuenzer, C. and S. Dech 2013: Thermal Infrared Remote Sensing – Sensors, Methods, Applications. Remote Sensing and Digital Image Processing Series, Volume 17, 572 pp., ISBN 978-94-007-6638-9, pp. 429–451

- Kuenzer, C. and S. Dech 2013: Thermal Infrared Remote Sensing – Sensors, Methods, Applications. Remote Sensing and Digital Image Processing Series, Volume 17, 572 pp., ISBN 978-94-007-6638-9

- Lasaponara, R. and Masini N. 2012: Satellite Remote Sensing - A new tool for Archaeology. Remote Sensing and Digital Image Processing Series, Volume 16, 364 pp., ISBN 978-90-481-8801-7.

- Dupuis, C.; Lejeune, P.; Michez, A.; Fayolle, A. How Can Remote Sensing Help Monitor Tropical Moist Forest Degradation?—A Systematic Review. Remote Sens. 2020, 12, 1087. https://www.mdpi.com/2072-4292/12/7/1087

External links

- Remote Sensing at Curlie