Phoneme

In phonology and linguistics, a phoneme /ˈfoʊniːm/ is a unit of sound that distinguishes one word from another in a particular language.

For example, in most dialects of English, with the notable exception of the West Midlands and the north-west of England,[1] the sound patterns /sɪn/ (sin) and /sɪŋ/ (sing) are two separate words that are distinguished by the substitution of one phoneme, /n/, for another phoneme, /ŋ/. Two words like this that differ in meaning through the contrast of a single phoneme form a minimal pair. If, in another language, any two sequences differing only by pronunciation of the final sounds [n] or [ŋ] are perceived as being the same in meaning, then these two sounds are interpreted as variants of a single phoneme in that language.

Phonemes that are established by the use of minimal pairs, such as tap vs tab or pat vs bat, are written between slashes: /p/, /b/. To show pronunciation, linguists use square brackets: [pʰ] (indicating an aspirated p in pat).

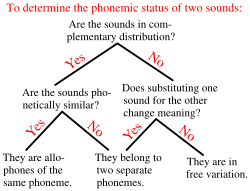

Within linguistics, there are differing views as to exactly what phonemes are and how a given language should be analyzed in phonemic (or phonematic) terms. However, a phoneme is generally regarded as an abstraction of a set (or equivalence class) of speech sounds (phones) that are perceived as equivalent to each other in a given language. For example, the English k sounds in the words kill and skill are not identical (as described below), but they are distributional variants of a single phoneme /k/. Speech sounds that differ but do not create a meaningful change in the word are known as allophones of the same phoneme. Allophonic variation may be conditioned, in which case a certain phoneme is realized as a certain allophone in particular phonological environments, or it may otherwise be free, and may vary by speaker or by dialect. Therefore, phonemes are often considered to constitute an abstract underlying representation for segments of words, while speech sounds make up the corresponding phonetic realization, or the surface form.

Notation

Phonemes are conventionally placed between slashes in transcription, whereas speech sounds (phones) are placed between square brackets. Thus, /pʊʃ/ represents a sequence of three phonemes, /p/, /ʊ/, /ʃ/ (the word push in Standard English), and [pʰʊʃ] represents the phonetic sequence of sounds [pʰ] (aspirated p), [ʊ], [ʃ] (the usual pronunciation of push). This should not be confused with the similar convention of the use of angle brackets to enclose the units of orthography, graphemes. For example, ⟨f⟩ represents the written letter (grapheme) f.

The symbols used for particular phonemes are often taken from the International Phonetic Alphabet (IPA), the same set of symbols most commonly used for phones. (For computer-typing purposes, systems such as X-SAMPA exist to represent IPA symbols using only ASCII characters.) However, descriptions of particular languages may use different conventional symbols to represent the phonemes of those languages. For languages whose writing systems employ the phonemic principle, ordinary letters may be used to denote phonemes, although this approach is often hampered by the complexity of the relationship between orthography and pronunciation (see § Correspondence between letters and phonemes below).
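As an illustration of the ASCII-only idea, the sketch below converts a handful of IPA symbols to their X-SAMPA equivalents. The table covers only the symbols needed for the examples in this article and is an assumption of the sketch, not a complete X-SAMPA implementation; the function name `ipa_to_xsampa` is hypothetical.

```python
# Partial IPA -> X-SAMPA table (a few symbols only; not the full standard).
IPA_TO_XSAMPA = {
    "ʃ": "S",   # voiceless postalveolar fricative
    "ʒ": "Z",   # voiced postalveolar fricative
    "ŋ": "N",   # velar nasal
    "ʊ": "U",   # near-close near-back rounded vowel
    "ɪ": "I",   # near-close near-front unrounded vowel
    "ɛ": "E",   # open-mid front unrounded vowel
    "ə": "@",   # schwa
    "ʰ": "_h",  # aspiration diacritic
}


def ipa_to_xsampa(ipa: str) -> str:
    """Replace each IPA character by its X-SAMPA string; ASCII passes through."""
    out = []
    for ch in ipa:
        if ch in IPA_TO_XSAMPA:
            out.append(IPA_TO_XSAMPA[ch])
        elif ch.isascii():
            out.append(ch)
        else:
            raise ValueError(f"no X-SAMPA mapping for {ch!r} in this sketch")
    return "".join(out)


print(ipa_to_xsampa("pʊʃ"))   # pUS
print(ipa_to_xsampa("pʰʊʃ"))  # p_hUS
```

Slashes and square brackets are ASCII, so a transcription such as /pʊʃ/ keeps its delimiters.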

Assignment of speech sounds to phonemes

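A phoneme is a sound or a group of different sounds perceived to have the same function by speakers of the language or dialect in question. An example is the English phoneme /k/, which occurs in words such as cat, kit, scat, skit. Although most native speakers do not notice this, in most English dialects, the "c/k" sounds in these words are not identical: in cat and kit the sound is aspirated, [kʰ], but in scat and skit it is unaspirated, [k]. The words therefore contain different speech sounds (phones). Substituting one for the other would not change the meaning of the word: an aspirated [kʰ] in skit might sound odd, but the word would still be recognized.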

The above shows that in English, [k] and [kʰ] are allophones of a single phoneme /k/. In some languages, however, [kʰ] and [k] are perceived by native speakers as different sounds, and substituting one for the other can change the meaning of a word. In those languages, therefore, the two sounds represent different phonemes. For example, in Icelandic, [kʰ] is the first sound of kátur, meaning "cheerful", but [k] is the first sound of gátur, meaning "riddles". Icelandic, therefore, has two separate phonemes /kʰ/ and /k/.
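Conditioned allophony can be modeled as a rule mapping phonemes to phones. The sketch below uses a deliberately simplified rule, which is an assumption and not a full account of English aspiration (that also depends on stress and syllable position): English /k/ is realized as [kʰ] word-initially and as [k] elsewhere. In Icelandic, by contrast, /kʰ/ and /k/ are separate phonemes, so no such rule could derive one from the other. The function name `realize_english_k` is hypothetical.

```python
def realize_english_k(phonemes: list[str]) -> list[str]:
    """Return phones for a phonemic string, applying only the /k/ rule.

    Simplified rule (assumption): /k/ is [kʰ] word-initially and [k] elsewhere.
    """
    return [
        ("kʰ" if i == 0 else "k") if seg == "k" else seg
        for i, seg in enumerate(phonemes)
    ]


print(realize_english_k(["k", "ɪ", "t"]))       # ['kʰ', 'ɪ', 't']
print(realize_english_k(["s", "k", "ɪ", "t"]))  # ['s', 'k', 'ɪ', 't']
```

Because the choice of phone is predictable from position, an English phonemic transcription needs only the one symbol /k/.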

Minimal pairs

A pair of words like kátur and gátur (above) that differ only in one phone is called a minimal pair for the two alternative phones in question (in this case, [kʰ] and [k]). The existence of minimal pairs is a common test to decide whether two phones represent different phonemes or are allophones of the same phoneme.
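The minimal-pair test is mechanical enough to sketch in code. The hypothetical helper `minimal_pairs` below takes a toy lexicon of words already segmented into phones and reports every pair of equal-length words that differ in exactly one position, together with the contrasting phones. The transcriptions are simplified assumptions; real analyses need careful phonetic transcription.

```python
from itertools import combinations


def minimal_pairs(lexicon: dict[str, tuple[str, ...]]):
    """Yield (word1, word2, phone1, phone2) for words differing in exactly one phone."""
    for (w1, p1), (w2, p2) in combinations(lexicon.items(), 2):
        if len(p1) != len(p2):
            continue
        diffs = [(a, b) for a, b in zip(p1, p2) if a != b]
        if len(diffs) == 1:
            yield (w1, w2, *diffs[0])


# Toy lexicon with simplified transcriptions (stress omitted).
lexicon = {
    "tip": ("t", "ɪ", "p"),
    "dip": ("d", "ɪ", "p"),
    "sin": ("s", "ɪ", "n"),
    "sing": ("s", "ɪ", "ŋ"),
    "pressure": ("p", "r", "ɛ", "ʃ", "ə", "r"),
    "pleasure": ("p", "l", "ɛ", "ʒ", "ə", "r"),
}

for pair in minimal_pairs(lexicon):
    print(pair)
```

Only tip/dip and sin/sing are reported; pressure and pleasure differ in two positions, so the strict test rejects them.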

To take another example, the minimal pair tip and dip illustrates that in English, [t] and [d] belong to separate phonemes, /t/ and /d/; since both words have different meanings, English-speakers must be conscious of the distinction between the two sounds.

In other languages, however, including Korean, both sounds [t] and [d] occur, but no such minimal pair exists. The lack of minimal pairs distinguishing [t] and [d] in Korean provides evidence that they are allophones of a single phoneme /t/. The word /tata/ is pronounced [tada], for example. That is, when they hear this word, Korean-speakers perceive the same sound in both the beginning and middle of the word, but English-speakers perceive different sounds in these two locations.

Signed languages, such as American Sign Language (ASL), also have minimal pairs, differing only in (exactly) one of the signs' parameters: handshape, movement, location, palm orientation, and nonmanual signal or marker. A minimal pair may exist in the signed language if the basic sign stays the same, but one of the parameters changes.[2]

However, the absence of minimal pairs for a given pair of phones does not always mean that they belong to the same phoneme: they may be so dissimilar phonetically that it is unlikely for speakers to perceive them as the same sound. For example, English has no minimal pair for the sounds [h] (as in hat) and [ŋ] (as in bang), and the fact that they can be shown to be in complementary distribution could be used to argue for their being allophones of the same phoneme. However, they are so dissimilar phonetically that they are considered separate phonemes.[3]

Phonologists have sometimes had recourse to "near minimal pairs" to show that speakers of the language perceive two sounds as significantly different even if no exact minimal pair exists in the lexicon. It is virtually impossible to find a minimal pair to distinguish English /ʃ/ from /ʒ/, yet it seems uncontroversial to claim that the two consonants are distinct phonemes. The two words 'pressure' /ˈprɛʃər/ and 'pleasure' /ˈplɛʒər/ can serve as a near minimal pair.[4]

Suprasegmental phonemes

Besides segmental phonemes such as vowels and consonants, there are also suprasegmental features of pronunciation (such as tone and stress, syllable boundaries and other forms of juncture, nasalization and vowel harmony), which, in many languages, can change the meaning of words and so are phonemic.

Phonemic stress is encountered in languages such as English. For example, the word invite stressed on the second syllable is a verb, but when stressed on the first syllable (without changing any of the individual sounds), it becomes a noun. The position of the stress in the word affects the meaning, so a full phonemic specification (providing enough detail to enable the word to be pronounced unambiguously) would include indication of the position of the stress: /ɪnˈvaɪt/ for the verb, /ˈɪnvaɪt/ for the noun. In other languages, such as French, word stress cannot have this function (its position is generally predictable) and is therefore not phonemic (and is not usually indicated in dictionaries).

Phonemic tones are found in languages such as Mandarin Chinese, in which a given syllable can have five different tonal pronunciations:

| Character | 媽 | 麻 | 馬 | 罵 | 嗎 |
|---|---|---|---|---|---|
| Pinyin | mā | má | mǎ | mà | ma |
| Meaning | mother | hemp | horse | scold | question particle |

Here, the character 媽 (pronounced mā, high level pitch) means "mother"; 麻 (má, rising pitch) means "hemp"; 馬 (mǎ, falling then rising) means "horse"; 罵 (mà, falling) means "scold", and 嗎 (ma, neutral tone) is an interrogative particle. The tone "phonemes" in such languages are sometimes called tonemes. Languages such as English do not have phonemic tone, although they use intonation for functions such as emphasis and attitude.
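Because Pinyin marks tone with diacritics, the segmental syllable and the toneme can be separated mechanically. The sketch below uses Python's standard `unicodedata` module to decompose each syllable (NFD normalization) and map the combining mark to a tone number. Numbering the neutral tone 5 is a common convention but an assumption here, the function name `split_tone` is hypothetical, and it handles only single syllables with at most one tone mark.

```python
import unicodedata

# Combining diacritics used by Pinyin, mapped to tone numbers.
TONE_MARKS = {
    "\u0304": 1,  # macron: high level (mā)
    "\u0301": 2,  # acute: rising (má)
    "\u030c": 3,  # caron: falling then rising (mǎ)
    "\u0300": 4,  # grave: falling (mà)
}


def split_tone(syllable: str) -> tuple[str, int]:
    """Return (toneless syllable, tone number); 5 stands for the neutral tone."""
    tone = 5
    base = []
    for ch in unicodedata.normalize("NFD", syllable):
        if ch in TONE_MARKS:
            tone = TONE_MARKS[ch]
        else:
            base.append(ch)
    # Recompose any remaining marks (e.g. the diaeresis of ü).
    return unicodedata.normalize("NFC", "".join(base)), tone


for s in ["mā", "má", "mǎ", "mà", "ma"]:
    print(s, split_tone(s))
```

All five syllables come out with the same segmental string "ma" and differ only in the tone number, which is what makes tone phonemic in Mandarin.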

Distribution of allophones

When a phoneme has more than one allophone, the one actually heard at a given occurrence of that phoneme may be dependent on the phonetic environment (surrounding sounds) – allophones which normally cannot appear in the same environment are said to be in complementary distribution. In other cases the choice of allophone may be dependent on the individual speaker or other unpredictable factors – such allophones are said to be in free variation.
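Complementary distribution can be checked by collecting the environments in which each phone occurs and testing whether the two sets overlap. The sketch below defines an environment simply as the pair (previous segment, next segment), with `#` marking a word boundary; that definition, the helper names, and the toy transcriptions are assumptions for illustration. As the discussion of [h] and [ŋ] shows, non-overlap is evidence for allophony, not proof.

```python
from collections import defaultdict

Word = tuple[str, ...]


def environments(words: list[Word]) -> dict[str, set[tuple[str, str]]]:
    """Map each phone to the set of (left, right) neighbours it occurs between."""
    envs: dict[str, set[tuple[str, str]]] = defaultdict(set)
    for word in words:
        padded = ("#", *word, "#")
        for i in range(1, len(padded) - 1):
            envs[padded[i]].add((padded[i - 1], padded[i + 1]))
    return envs


def complementary(envs, a: str, b: str) -> bool:
    """True if phones a and b never occur in the same environment."""
    return envs[a].isdisjoint(envs[b])


# Toy phonetic data for English [kʰ] and [k] (simplified).
data = [("kʰ", "ɪ", "t"), ("kʰ", "æ", "t"), ("s", "k", "ɪ", "t"), ("s", "k", "æ", "t")]
envs = environments(data)
print(complementary(envs, "kʰ", "k"))  # True
```

Running the same check on tip and dip returns False, because [t] and [d] share the environment (#, ɪ).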

Background and related ideas

The term phonème (from Ancient Greek φώνημα phōnēma, "sound made, utterance, thing spoken, speech, language"[5]) was reportedly first used by A. Dufriche-Desgenettes in 1873, but it referred only to a speech sound. The term phoneme as an abstraction was developed by the Polish linguist Jan Niecisław Baudouin de Courtenay and his student Mikołaj Kruszewski during 1875–1895.[6] The term used by these two was fonema, the basic unit of what they called psychophonetics. Daniel Jones became the first linguist in the western world to use the term phoneme in its current sense, employing the word in his article "The phonetic structure of the Sechuana Language".[7] The concept of the phoneme was then elaborated in the works of Nikolai Trubetzkoy and others of the Prague School (during the years 1926–1935), and in those of structuralists like Ferdinand de Saussure, Edward Sapir, and Leonard Bloomfield. Some structuralists (though not Sapir) rejected the idea of a cognitive or psycholinguistic function for the phoneme.[8][9]

Later, it was used and redefined in generative linguistics, most famously by Noam Chomsky and Morris Halle,[10] and remains central to many accounts of the development of modern phonology. As a theoretical concept or model, though, it has been supplemented and even replaced by others.[11]

Some linguists (such as Roman Jakobson and Morris Halle) proposed that phonemes may be further decomposable into features, such features being the true minimal constituents of language.[12] Features overlap each other in time, as do suprasegmental phonemes in oral language and many phonemes in sign languages. Features could be characterized in different ways: Jakobson and colleagues defined them in acoustic terms,[13] Chomsky and Halle used a predominantly articulatory basis, though retaining some acoustic features, while Ladefoged's system[14] is a purely articulatory system apart from the use of the acoustic term 'sibilant'.
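The idea of phonemes as bundles of features can be sketched with a small feature table. The feature names and values below ([voice], [nasal], place) are a simplified assumption and do not reproduce the systems of Jakobson, of Chomsky and Halle, or of Ladefoged; the hypothetical helpers show that /p/ and /b/ differ in a single feature and that a feature specification picks out a natural class.

```python
# Simplified feature bundles for a few English consonants (illustrative only).
FEATURES = {
    "p": {"voice": False, "nasal": False, "place": "labial"},
    "b": {"voice": True, "nasal": False, "place": "labial"},
    "t": {"voice": False, "nasal": False, "place": "alveolar"},
    "d": {"voice": True, "nasal": False, "place": "alveolar"},
    "k": {"voice": False, "nasal": False, "place": "velar"},
    "m": {"voice": True, "nasal": True, "place": "labial"},
    "n": {"voice": True, "nasal": True, "place": "alveolar"},
    "ŋ": {"voice": True, "nasal": True, "place": "velar"},
}


def feature_difference(a: str, b: str) -> set[str]:
    """Names of the features on which phonemes a and b disagree."""
    return {f for f in FEATURES[a] if FEATURES[a][f] != FEATURES[b][f]}


def natural_class(**spec) -> set[str]:
    """All phonemes whose features match every value given in spec."""
    return {p for p, fs in FEATURES.items() if all(fs[k] == v for k, v in spec.items())}


print(feature_difference("p", "b"))       # {'voice'}
print(sorted(natural_class(nasal=True)))  # ['m', 'n', 'ŋ']
```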

In the description of some languages, the term chroneme has been used to indicate contrastive length or duration of phonemes. In languages in which tones are phonemic, the tone phonemes may be called tonemes. Though not all scholars working on such languages use these terms, they are by no means obsolete.

By analogy with the phoneme, linguists have proposed other sorts of underlying objects, giving them names with the suffix -eme, such as morpheme and grapheme. These are sometimes called emic units. The latter term was first used by Kenneth Pike, who also generalized the concepts of emic and etic description (from phonemic and phonetic respectively) to applications outside linguistics.[15]

Restrictions on occurrence

Languages do not generally allow words or syllables to be built of any arbitrary sequences of phonemes; there are phonotactic restrictions on which sequences of phonemes are possible and in which environments certain phonemes can occur. Phonemes that are significantly limited by such restrictions may be called restricted phonemes.

In English, examples of such restrictions include:

- /ŋ/, as in sing, occurs only at the end of a syllable, never at the beginning (in many other languages, such as Māori, Swahili, Tagalog, and Thai, /ŋ/ can appear word-initially).

- /h/ occurs only before vowels and at the beginning of a syllable, never at the end (a few languages, such as Arabic and Romanian, allow /h/ syllable-finally).

- In non-rhotic dialects, /ɹ/ can only occur immediately before a vowel, never before a consonant.

- /w/ and /j/ occur only before a vowel, never at the end of a syllable (except in interpretations where a word like boy is analyzed as /bɔj/).
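Restrictions like these can be expressed as checks over syllable positions. The sketch below encodes only the first two restrictions listed above, for a syllable given as an (onset, nucleus, coda) tuple of phonemes; the representation and the helper name `violations` are assumptions, and a real phonotactic grammar of English would need many more constraints.

```python
Syllable = tuple[tuple[str, ...], str, tuple[str, ...]]  # (onset, nucleus, coda)


def violations(syllable: Syllable) -> list[str]:
    """Return descriptions of the restrictions this syllable breaks."""
    onset, _nucleus, coda = syllable
    problems = []
    if "ŋ" in onset:
        problems.append("/ŋ/ in onset")
    if "h" in coda:
        problems.append("/h/ in coda")
    return problems


print(violations((("s",), "ɪ", ("ŋ",))))  # []  (sing is well formed)
print(violations((("ŋ",), "ɪ", ("s",))))  # ['/ŋ/ in onset']
```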

Some phonotactic restrictions can alternatively be analyzed as cases of neutralization. See Neutralization and archiphonemes below, particularly the example of the occurrence of the three English nasals before stops.

Biuniqueness

Biuniqueness is a requirement of classic structuralist phonemics. It means that a given phone, wherever it occurs, must unambiguously be assigned to one and only one phoneme. In other words, the mapping between phones and phonemes is required to be many-to-one rather than many-to-many. The notion of biuniqueness was controversial among some pre-generative linguists and was prominently challenged by Morris Halle and Noam Chomsky in the late 1950s and early 1960s.

An example of the problems arising from the biuniqueness requirement is provided by the phenomenon of flapping in North American English. This may cause either /t/ or /d/ (in the appropriate environments) to be realized with the phone [ɾ] (an alveolar flap). For example, the same flap sound may be heard in the words hitting and bidding, although it is clearly intended to realize the phoneme /t/ in the first word and /d/ in the second. This appears to contradict biuniqueness.
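The conflict can be made concrete by writing the realization rules as a mapping from each phoneme to the phones it may surface as, then inverting that mapping. Biuniqueness requires every phone to come from exactly one phoneme. The sketch below uses simplified, assumed North American data and a hypothetical helper name to find the phones that break this requirement.

```python
from collections import defaultdict

# Phoneme -> phones it may surface as (simplified North American English).
REALIZATIONS = {
    "t": {"tʰ", "t", "ɾ"},
    "d": {"d", "ɾ"},
}


def non_biunique_phones(realizations: dict[str, set[str]]) -> dict[str, set[str]]:
    """Return each phone that can realize more than one phoneme, with its sources."""
    sources: dict[str, set[str]] = defaultdict(set)
    for phoneme, phones in realizations.items():
        for phone in phones:
            sources[phone].add(phoneme)
    return {ph: src for ph, src in sources.items() if len(src) > 1}


print(non_biunique_phones(REALIZATIONS))  # the flap ɾ maps back to both t and d
```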

For further discussion of such cases, see the next section.

Neutralization and archiphonemes

Phonemes that are contrastive in certain environments may not be contrastive in all environments. In the environments where they do not contrast, the contrast is said to be neutralized. In these positions it may become less clear which phoneme a given phone represents. Absolute neutralization is a phenomenon in which a segment of the underlying representation is not realized in any of its phonetic representations (surface forms). The term was introduced by Paul Kiparsky (1968), and contrasts with contextual neutralization where some phonemes are not contrastive in certain environments.[16] Some phonologists prefer not to specify a unique phoneme in such cases, since to do so would mean providing redundant or even arbitrary information – instead they use the technique of underspecification. An archiphoneme is an object sometimes used to represent an underspecified phoneme.

An example of neutralization is provided by the Russian vowels /a/ and /o/. These phonemes contrast in stressed syllables, but in unstressed syllables the contrast is lost, since both are reduced to the same sound, usually [ə] (for details, see vowel reduction in Russian). In order to assign such an instance of [ə] to one of the phonemes /a/ and /o/, it is necessary to consider morphological factors (such as which of the vowels occurs in other forms of the words, or which inflectional pattern is followed). In some cases even this may not provide an unambiguous answer. A description using the approach of underspecification would not attempt to assign [ə] to a specific phoneme in some or all of these cases, although it might be assigned to an archiphoneme, written something like //A//, which reflects the two neutralized phonemes in this position.
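The loss of contrast can be sketched as a function from underlying to surface forms. Following the simplification in the text, the hypothetical rule below reduces every unstressed /a/ or /o/ to [ə]; actual Russian reduction distinguishes pretonic positions and is more complex. The stress index and the toy forms /soma/ and /sama/ (stressed on the final vowel) are assumptions for illustration. Two different underlying vowels yield one surface vowel, so the surface form alone cannot show which phoneme is present.

```python
def reduce_vowels(segments: list[str], stressed: int) -> list[str]:
    """Reduce unstressed /a/ and /o/ to [ə]; `stressed` indexes the stressed vowel."""
    return [
        "ə" if seg in {"a", "o"} and i != stressed else seg
        for i, seg in enumerate(segments)
    ]


form_o = reduce_vowels(["s", "o", "m", "a"], stressed=3)
form_a = reduce_vowels(["s", "a", "m", "a"], stressed=3)
print(form_o, form_a)    # ['s', 'ə', 'm', 'a'] ['s', 'ə', 'm', 'a']
print(form_o == form_a)  # True
```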

A somewhat different example is found in English, with the three nasal phonemes /m, n, ŋ/. In word-final position these all contrast, as shown by the minimal triplet sum /sʌm/, sun /sʌn/, sung /sʌŋ/. However, before a stop such as /p, t, k/ (provided there is no morpheme boundary between them), only one of the nasals is possible in any given position: /m/ before /p/, /n/ before /t/ or /d/, and /ŋ/ before /k/, as in limp, lint, link (/lɪmp/, /lɪnt/, /lɪŋk/). The nasals are therefore not contrastive in these environments, and according to some theorists this makes it inappropriate to assign the nasal phones heard here to any one of the phonemes (even though, in this case, the phonetic evidence is unambiguous). Instead they may analyze these phones as belonging to a single archiphoneme, written something like //N//, and state the underlying representations of limp, lint, link to be //lɪNp//, //lɪNt//, //lɪNk//.
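The archiphonemic analysis amounts to replacing any nasal that stands before a stop with a symbol unspecified for place. The sketch below applies that rewrite to phonemic transcriptions given as lists of segments. The segment lists and the helper name `to_archiphonemes` are assumptions, and the function ignores the morpheme-boundary condition mentioned in the text.

```python
NASALS = {"m", "n", "ŋ"}
STOPS = {"p", "b", "t", "d", "k", "ɡ"}


def to_archiphonemes(segments: list[str]) -> list[str]:
    """Replace a nasal immediately before a stop with the archiphoneme N."""
    out = list(segments)
    for i in range(len(segments) - 1):
        if segments[i] in NASALS and segments[i + 1] in STOPS:
            out[i] = "N"
    return out


for word in (["l", "ɪ", "m", "p"], ["l", "ɪ", "n", "t"], ["l", "ɪ", "ŋ", "k"], ["s", "ʌ", "m"]):
    print("".join(to_archiphonemes(word)))
# lɪNp, lɪNt, lɪNk, sʌm
```

Word-final nasals, as in sum, are left alone because they remain contrastive in that position.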

This latter type of analysis is often associated with Nikolai Trubetzkoy of the Prague school. Archiphonemes are often notated with a capital letter within double virgules or pipes, as with the examples //A// and //N// given above. Other ways the second of these has been notated include |m-n-ŋ|, {m, n, ŋ} and //n*//.

Another example from English, but this time involving complete phonetic convergence as in the Russian example, is the flapping of /t/ and /d/ in some American English (described above under Biuniqueness). Here the words betting and bedding might both be pronounced [ˈbɛɾɪŋ]. Under the generative grammar theory of linguistics, if a speaker applies such flapping consistently, morphological evidence (the pronunciation of the related forms bet and bed, for example) would reveal which phoneme the flap represents, once it is known which morpheme is being used.[17] However, other theorists would prefer not to make such a determination, and simply assign the flap in both cases to a single archiphoneme, written (for example) //D//.
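The generative argument can be sketched as a lookup: given a flapped surface form and the morpheme it contains, the unflapped related form (bet, bed) reveals the underlying stop. The tiny stem dictionary and the helper name are hypothetical, and the sketch assumes the stem is aligned with the start of the surface form. The archiphonemic alternative would simply write //D// and skip the lookup.

```python
# Underlying stems, as revealed by the unsuffixed words bet and bed.
STEMS = {"bet": ["b", "ɛ", "t"], "bed": ["b", "ɛ", "d"]}


def recover_flap(surface: list[str], stem: str) -> list[str]:
    """Replace each flap [ɾ] with the stop at the same position in the stem.

    Assumes the stem occupies the first positions of the surface form.
    """
    underlying = STEMS[stem]
    return [
        underlying[i] if seg == "ɾ" and i < len(underlying) else seg
        for i, seg in enumerate(surface)
    ]


flapped = ["b", "ɛ", "ɾ", "ɪ", "ŋ"]  # [ˈbɛɾɪŋ], stress omitted
print(recover_flap(flapped, "bet"))  # ['b', 'ɛ', 't', 'ɪ', 'ŋ']
print(recover_flap(flapped, "bed"))  # ['b', 'ɛ', 'd', 'ɪ', 'ŋ']
```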

Further mergers in English are plosives after /s/, where /p, t, k/ conflate with /b, d, ɡ/, as suggested by the alternative spellings sketti and sghetti. That is, there is no particular reason to transcribe spin as /ˈspɪn/ rather than as /ˈsbɪn/, other than its historical development, and it might be less ambiguously transcribed //ˈsBɪn//.

Morphophonemes

A morphophoneme is a theoretical unit at a deeper level of abstraction than traditional phonemes, and is taken to be a unit from which morphemes are built up. A morphophoneme within a morpheme can be expressed in different ways in different allomorphs of that morpheme (according to morphophonological rules). For example, the English plural morpheme -s appearing in words such as cats and dogs can be considered to be a single morphophoneme, which might be transcribed (for example) //z// or |z|, and which is realized as phonemically /s/ after most voiceless consonants (as in cats) and as /z/ in other cases (as in dogs).
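A morphophonological rule of this kind can be sketched as a function that chooses the realization of //z// from the stem's final phoneme. The paragraph above mentions only /s/ and /z/; the sketch also includes the /ɪz/ form used after sibilants (as in buses), which is standard for English but goes beyond this paragraph. The phoneme sets are simplified assumptions and the function name `plural_suffix` is hypothetical.

```python
SIBILANTS = {"s", "z", "ʃ", "ʒ", "tʃ", "dʒ"}
VOICELESS = {"p", "t", "k", "f", "θ"}  # voiceless non-sibilants


def plural_suffix(stem: list[str]) -> str:
    """Choose the phonemic form of the plural morphophoneme //z//."""
    last = stem[-1]
    if last in SIBILANTS:
        return "ɪz"
    if last in VOICELESS:
        return "s"
    return "z"  # voiced consonants and vowels


print(plural_suffix(["k", "æ", "t"]))  # s   (cats)
print(plural_suffix(["d", "ɒ", "ɡ"]))  # z   (dogs)
print(plural_suffix(["b", "ʌ", "s"]))  # ɪz  (buses)
```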

Numbers of phonemes in different languages

All known languages use only a small subset of the many possible sounds that the human speech organs can produce, and, because of allophony, the number of distinct phonemes will generally be smaller than the number of identifiably different sounds. Different languages vary considerably in the number of phonemes they have in their systems (although apparent variation may sometimes result from the different approaches taken by the linguists doing the analysis). The total phonemic inventory in languages varies from as few as 11 in Rotokas and Pirahã to as many as 141 in !Xũ.[18]

The number of phonemically distinct vowels can be as low as two, as in Ubykh and Arrernte. At the other extreme, the Bantu language Ngwe has 14 vowel qualities, 12 of which may occur long or short, making 26 oral vowels, plus six nasalized vowels, long and short, making a total of 38 vowels; while !Xóõ achieves 31 pure vowels, not counting its additional variation by vowel length, by varying the phonation. As regards consonant phonemes, Puinave and the Papuan language Tauade each have just seven, and Rotokas has only six. !Xóõ, on the other hand, has somewhere around 77, and Ubykh 81. The English language uses a rather large set of 13 to 21 vowel phonemes, including diphthongs, although its 22 to 26 consonants are close to average.

Some languages, such as French, have no phonemic tone or stress, while Cantonese and several of the Kam–Sui languages have nine tones, and one of the Kru languages, Wobé, has been claimed to have 14,[19] though this is disputed.[20]

The most common vowel system consists of the five vowels /i/, /e/, /a/, /o/, /u/. The most common consonants are /p/, /t/, /k/, /m/, /n/.[21] Relatively few languages lack any of these consonants, although it does happen: for example, Arabic lacks /p/, standard Hawaiian lacks /t/, Mohawk and Tlingit lack /p/ and /m/, Hupa lacks both /p/ and a simple /k/, colloquial Samoan lacks /t/ and /n/, while Rotokas and Quileute lack /m/ and /n/.

The non-uniqueness of phonemic solutions

During the development of phoneme theory in the mid-20th century phonologists were concerned not only with the procedures and principles involved in producing a phonemic analysis of the sounds of a given language, but also with the reality or uniqueness of the phonemic solution. Some writers took the position expressed by Kenneth Pike: "There is only one accurate phonemic analysis for a given set of data",[22] while others believed that different analyses, equally valid, could be made for the same data. Yuen Ren Chao (1934), in his article "The non-uniqueness of phonemic solutions of phonetic systems"[23] stated "given the sounds of a language, there are usually more than one possible way of reducing them to a set of phonemes, and these different systems or solutions are not simply correct or incorrect, but may be regarded only as being good or bad for various purposes". The linguist F.W. Householder referred to this argument within linguistics as "God's Truth vs. hocus-pocus".[24] Different analyses of the English vowel system may be used to illustrate this. The article English phonology states that "English has a particularly large number of vowel phonemes" and that "there are 20 vowel phonemes in Received Pronunciation, 14–16 in General American and 20–21 in Australian English"; the present article (§ Numbers of phonemes in different languages) says that "the English language uses a rather large set of 13 to 21 vowel phonemes". Although these figures are often quoted as a scientific fact, they actually reflect just one of many possible analyses, and later in the English Phonology article an alternative analysis is suggested in which some diphthongs and long vowels may be interpreted as comprising a short vowel linked to either /j/ or /w/. 
The transcription system for British English (RP) devised by the phonetician Geoff Lindsey and used in the CUBE pronunciation dictionary also treats diphthongs as composed of a vowel plus /j/ or /w/.[25] The fullest exposition of this approach is found in Trager and Smith (1951), where all long vowels and diphthongs ("complex nuclei") are made up of a short vowel combined with either /j/, /w/ or /h/ (plus /r/ for rhotic accents), each thus comprising two phonemes: they wrote "The conclusion is inescapable that the complex nuclei consist each of two phonemes, one of the short vowels followed by one of three glides".[26] The transcription for the vowel normally transcribed /aɪ/ would instead be /aj/, /aʊ/ would be /aw/ and /ɑː/ would be /ah/. The consequence of this approach is that English could theoretically have only seven vowel phonemes, which might be symbolized /i/, /e/, /a/, /o/, /u/, /ʌ/ and /ə/, or even six if schwa were treated as an allophone of /ʌ/ or of other short vowels, a figure that would put English much closer to the average number of vowel phonemes in other languages.[27]
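The reanalysis is essentially a rewriting of transcriptions, which can be sketched as a substitution table. Only the three correspondences given in the text (/aɪ/ to /aj/, /aʊ/ to /aw/, /ɑː/ to /ah/) are included; a full conversion in the style of Trager and Smith would need many more, and the function name is hypothetical. Counting distinct vowel symbols before and after shows how the inventory shrinks once the glides are counted as separate phonemes.

```python
# Only the three correspondences mentioned in the text.
TRAGER_SMITH = {"aɪ": "aj", "aʊ": "aw", "ɑː": "ah"}


def to_trager_smith(nuclei: list[str]) -> list[str]:
    """Rewrite each vowel nucleus in Trager and Smith's style where a rule exists."""
    return [TRAGER_SMITH.get(v, v) for v in nuclei]


standard = ["aɪ", "aʊ", "ɑː", "a"]
rewritten = to_trager_smith(standard)
print(rewritten)  # ['aj', 'aw', 'ah', 'a']

# With these inputs the first character is the vowel and any second one a glide.
vowels_before = set(standard)
vowels_after = {n[0] for n in rewritten}
print(len(vowels_before), len(vowels_after))  # 4 1
```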

In the same period there was disagreement about the correct basis for a phonemic analysis. The structuralist position was that the analysis should be made purely on the basis of the sound elements and their distribution, with no reference to extraneous factors such as grammar, morphology or the intuitions of the native speaker; this position is strongly associated with Leonard Bloomfield.[28] Zellig Harris claimed that it is possible to discover the phonemes of a language purely by examining the distribution of phonetic segments.[29] Referring to mentalistic definitions of the phoneme, Twaddell (1935) stated "Such a definition is invalid because (1) we have no right to guess about the linguistic workings of an inaccessible 'mind', and (2) we can secure no advantage from such guesses. The linguistic processes of the 'mind' as such are quite simply unobservable; and introspection about linguistic processes is notoriously a fire in a wooden stove."[30] This approach was opposed to that of Edward Sapir, who gave an important role to native speakers' intuitions about where a particular sound or groups of sounds fitted into a pattern. Using English [ŋ] as an example, Sapir argued that, despite the superficial appearance that this sound belongs to a group of nasal consonants, "no naive English-speaking person can be made to feel in his bones that it belongs to a single series with /m/ and /n/. ... It still feels like ŋg".[31] The theory of generative phonology which emerged in the 1960s explicitly rejected the Structuralist approach to phonology and favoured the mentalistic or cognitive view of Sapir.[32][33]

Correspondence between letters and phonemes

Phonemes are considered to be the basis for alphabetic writing systems. In such systems the written symbols (graphemes) represent, in principle, the phonemes of the language being written. This is most obviously the case when the alphabet was invented with a particular language in mind; for example, the Latin alphabet was devised for Classical Latin, and therefore the Latin of that period enjoyed a near one-to-one correspondence between phonemes and graphemes in most cases, though the devisers of the alphabet chose not to represent the phonemic effect of vowel length. However, because changes in the spoken language are often not accompanied by changes in the established orthography (as well as other reasons, including dialect differences, the effects of morphophonology on orthography, and the use of foreign spellings for some loanwords), the correspondence between spelling and pronunciation in a given language may be highly distorted; this is the case with English, for example.

The correspondence between symbols and phonemes in alphabetic writing systems is not necessarily one-to-one. A phoneme might be represented by a combination of two or more letters (digraph, trigraph, etc.), like ⟨sh⟩ in English or ⟨sch⟩ in German (both representing the phoneme /ʃ/). Conversely, a single letter may represent two phonemes, as in English ⟨x⟩ representing /ɡz/ or /ks/. There may also exist spelling/pronunciation rules (such as those for the pronunciation of ⟨c⟩ in Italian) that further complicate the correspondence of letters to phonemes, although they need not affect the ability to predict the pronunciation from the spelling and vice versa, provided the rules are known.
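Digraphs and letters like ⟨x⟩ mean that a spelling must be segmented before letters can be matched to phonemes. One common approach is greedy longest-match segmentation over a table of graphemes. The toy table below (a few English correspondences that ignore context-dependent rules and the /ɡz/ reading of ⟨x⟩) and the function name are assumptions, not a real English spelling-to-sound system.

```python
# Toy English grapheme -> phoneme table; space-separated values are phoneme sequences.
GRAPHEMES = {
    "sh": "ʃ", "ch": "tʃ", "th": "θ", "ng": "ŋ",
    "x": "k s",
    "a": "æ", "e": "ɛ", "i": "ɪ", "o": "ɒ", "u": "ʌ",
    "b": "b", "d": "d", "f": "f", "k": "k", "l": "l", "m": "m",
    "n": "n", "p": "p", "s": "s", "t": "t",
}
LONGEST = max(len(g) for g in GRAPHEMES)


def to_phonemes(word: str) -> list[str]:
    """Segment a spelling greedily (longest grapheme first) and map it to phonemes."""
    result, i = [], 0
    while i < len(word):
        for size in range(min(LONGEST, len(word) - i), 0, -1):
            chunk = word[i:i + size]
            if chunk in GRAPHEMES:
                result.extend(GRAPHEMES[chunk].split())
                i += size
                break
        else:
            raise ValueError(f"no grapheme matches at {word[i:]!r}")
    return result


print(to_phonemes("ship"))  # ['ʃ', 'ɪ', 'p']
print(to_phonemes("box"))   # ['b', 'ɒ', 'k', 's']
```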

In sign languages

Sign language phonemes are bundles of articulation features. Stokoe was the first scholar to describe the phonemic system of ASL. He identified the bundles tab (elements of location, from Latin tabula), dez (the handshape, from designator), and sig (the motion, from signation). Some researchers also discern ori (orientation), facial expression or mouthing. Just as in spoken languages, features combine to form phonemes, and sign languages have minimal pairs that differ in only one phoneme. For instance, the ASL signs for father and mother differ minimally with respect to location while handshape and movement are identical; location is thus contrastive.
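The same minimal-pair logic applies to signs described by parameters. In the sketch below each sign is a record of Stokoe-style parameters. The parameter values for FATHER and MOTHER (the same open handshape and tapping movement, at the forehead versus the chin) are a simplified description assumed for illustration, and `contrast` is a hypothetical helper.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Sign:
    gloss: str
    handshape: str  # dez
    location: str   # tab
    movement: str   # sig


def contrast(a: Sign, b: Sign) -> list[str]:
    """Names of the parameters (other than the gloss) on which two signs differ."""
    return [
        f.name for f in fields(Sign)
        if f.name != "gloss" and getattr(a, f.name) != getattr(b, f.name)
    ]


father = Sign("FATHER", handshape="5", location="forehead", movement="tap")
mother = Sign("MOTHER", handshape="5", location="chin", movement="tap")
print(contrast(father, mother))  # ['location']
```

A result containing exactly one parameter name identifies a minimal pair.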

Stokoe's terminology and notation system are no longer used by researchers to describe the phonemes of sign languages; William Stokoe's research, while still considered seminal, has been found not to characterize American Sign Language or other sign languages sufficiently.[34] For instance, non-manual features are not included in Stokoe's classification. More sophisticated models of sign language phonology have since been proposed by Brentari,[35] Sandler,[36] and van der Kooij.[37]

Chereme

Cherology and chereme (from Ancient Greek: χείρ "hand") are synonyms of phonology and phoneme previously used in the study of sign languages. A chereme, as the basic unit of signed communication, is functionally and psychologically equivalent to the phonemes of oral languages, and has been replaced by that term in the academic literature. Cherology, as the study of cheremes in language, is thus equivalent to phonology. The terms are no longer in use. Instead, the terms phonology and phoneme (or distinctive feature) are used to stress the linguistic similarities between signed and spoken languages.[38]

The terms were coined in 1960 by William Stokoe[39] at Gallaudet University to describe sign languages as true and full languages. Once a controversial idea, the position is now universally accepted in linguistics. Stokoe's terminology, however, has been largely abandoned.[40]

See also

- Alphabetic principle

- Alternation (linguistics)

- Complementary distribution

- Diaphoneme

- Diphone

- Emic and etic

- Free variation

- Initial-stress-derived noun

- International Phonetic Alphabet

- Minimal pair

- Morphophonology

- Phone

- Phonemic orthography

- Phonology

- Phonological change

- Phonotactics

- Sphoṭa

- Toneme

- Triphone

- Viseme

Notes

- Wells, John (1982). Accents of English. Cambridge University Press. p. 179.

- Handspeak. "Minimal pairs in sign language phonology". www.handspeak.com. Archived from the original on 14 February 2017. Retrieved 13 February 2017.

- Wells 1982, p. 44.

- Wells 1982, p. 48.

- Liddell, H.G.; Scott, R. (1940). A Greek-English Lexicon. Revised and augmented throughout by Sir Henry Stuart Jones, with the assistance of Roderick McKenzie. Oxford: Clarendon Press.

- Jones 1957.

- Jones, Daniel (1917). "The phonetic structure of the Sechuana language". Transactions of the Philological Society 1917–20: 99–106.

- Twaddell 1935.

- Harris 1951.

- Chomsky & Halle 1968.

- Clark & Yallop 1995, chpt. 11.

- Jakobson & Halle 1968.

- Jakobson, Fant & Halle 1952.

- Ladefoged 2006, pp. 268–276.

- Pike 1967.

- Kiparsky, Paul (1968). "Linguistic universals and linguistic change". In Bach, E.; Harms, R.T. (eds.). Universals in Linguistic Theory. New York: Holt, Rinehart and Winston. pp. 170–202.

- Dinnsen, Daniel (1985). "A Re-Examination of Phonological Neutralization". Journal of Linguistics. 21 (2): 265–79. doi:10.1017/s0022226700010276. JSTOR 4175789.

- Crystal 2010, p. 173.

- Bearth, Thomas; Link, Christa (1980). "The tone puzzle of Wobe". Studies in African Linguistics. 11 (2): 147–207.

- Singler, John Victor (1984). "On the underlying representation of contour tones in Wobe". Studies in African Linguistics. 15 (1): 59–75.

- Moran, Steven; McCloy, Daniel; Wright, Richard, eds. (2014). "PHOIBLE Online". Leipzig: Max Planck Institute for Evolutionary Anthropology. Retrieved 5 January 2019.

- Pike, K.L. (1947). Phonemics. University of Michigan Press. p. 64.

- Chao, Yuen Ren (1934). "The non-uniqueness of phonemic solutions of phonetic systems". Academia Sinica. IV.4: 363–97.

- Householder, F.W. (1952). "Review of Methods in structural linguistics by Zellig S. Harris". International Journal of American Linguistics. 18: 260–8. doi:10.1086/464181.

- Lindsey, Geoff. "The CUBE searchable dictionary". English Speech Services. Archived from the original on 31 December 2017. Retrieved 31 December 2017.

- Trager, G.; Smith, H. (1951). An Outline of English Structure. American Council of Learned Societies. p. 20. Retrieved 30 December 2017.

- Roach, Peter (2009). English Phonetics and Phonology (4th ed.). Cambridge University Press. pp. 99–100. ISBN 978-0-521-71740-3.

- Bloomfield, Leonard (1933). Language. Henry Holt.

- Harris, Zellig (1951). Methods in Structural Linguistics. University of Chicago Press. p. 5.

- Twaddell, W.F. (1935). "On defining the phoneme". Language. 11 (1): 5–62. doi:10.2307/522070. JSTOR 522070.

- Sapir, Edward (1925). "Sound patterns in language". Language. 1 (2): 37–51. doi:10.2307/409004. JSTOR 409004.

- Chomsky, Noam (1964). Current Issues in Linguistic Theory. Mouton.

- Chomsky, Noam; Halle, Morris (1968). The Sound Pattern of English. Harper and Row.

- Valli, Clayton; Lucas, Ceil (2000). Linguistics of American Sign Language: An Introduction (3rd ed.). Washington, D.C.: Gallaudet University Press. ISBN 9781563680977. OCLC 57352333.

- Brentari, Diane (1998). A prosodic model of sign language phonology. MIT Press.

- Sandler, Wendy (1989). Phonological representation of the sign: linearity and nonlinearity in American Sign Language. Foris.

- Kooij, Els van der (2002). Phonological categories in Sign Language of the Netherlands. The role of phonetic implementation and iconicity. PhD dissertation, Leiden University.

- Bross, Fabian (2015). "Chereme". In Hall, T.A.; Pompino-Marschall, B. (eds.). Dictionaries of Linguistics and Communication Science (Wörterbücher zur Sprach- und Kommunikationswissenschaft, WSK). Volume: Phonetics and Phonology. Berlin, New York: Mouton de Gruyter.

- Stokoe, William C. (1960). Sign Language Structure: An Outline of the Visual Communication Systems of the American Deaf. Studies in Linguistics: Occasional Papers (No. 8). Buffalo: Dept. of Anthropology and Linguistics, University of Buffalo.

- Seegmiller (2006). "Stokoe, William (1919–2000)". In Encyclopedia of Language and Linguistics (2nd ed.).

Bibliography

- Chomsky, N.; Halle, M. (1968), The Sound Pattern of English, Harper and Row, OCLC 317361

- Clark, J.; Yallop, C. (1995), An Introduction to Phonetics and Phonology (2 ed.), Blackwell, ISBN 978-0-631-19452-1

- Crystal, D. (1997), The Cambridge Encyclopedia of Language (2 ed.), Cambridge, ISBN 978-0-521-55967-6

- Crystal, D. (2010), The Cambridge Encyclopedia of Language (3 ed.), Cambridge, ISBN 978-0-521-73650-3

- Gimson, A.C. (2008), Cruttenden, A. (ed.), The Pronunciation of English (7 ed.), Hodder, ISBN 978-0-340-95877-3

- Harris, Z. (1951), Methods in Structural Linguistics, University of Chicago Press, OCLC 2232282

- Jakobson, R.; Fant, G.; Halle, M. (1952), Preliminaries to Speech Analysis, MIT, OCLC 6492928

- Jakobson, R.; Halle, M. (1968), Phonology in Relation to Phonetics, in Malmberg, B. (ed) Manual of Phonetics, North-Holland, OCLC 13223685

- Jones, Daniel (1957), The History and Meaning of the Term 'Phoneme', Le Maître Phonétique, supplement (reprinted in E. Fudge (ed) Phonology, Penguin), JSTOR 44705495, OCLC 4550377

- Ladefoged, P. (2006), A Course in Phonetics (5 ed.), Thomson, ISBN 978-1-4282-3126-9

- Pike, K.L. (1967), Language in Relation to a Unified Theory of Human Behavior, Mouton, OCLC 308042

- Swadesh, M. (1934), "The Phonemic Principle", Language, 10 (2): 117–129, doi:10.2307/409603, JSTOR 409603

- Twaddell, W.F. (1935), On Defining the Phoneme, Linguistic Society of America (reprinted in Joos, M. Readings in Linguistics, 1957), OCLC 1657452

- Wells, J.C. (1982), Accents of English, Cambridge, ISBN 0-521-29719-2