Backdoor (computing)

A backdoor is a typically covert method of bypassing normal authentication or encryption in a computer, product, embedded device (e.g. a home router), or its embodiment (e.g. part of a cryptosystem, algorithm, chipset, or even a "homunculus computer", a tiny computer-within-a-computer such as that found in Intel's AMT technology).[1][2] Backdoors are most often used for securing remote access to a computer, or obtaining access to plaintext in cryptographic systems. From there, a backdoor may be used to gain access to privileged information such as passwords, to corrupt or delete data on hard drives, or to transfer information within ad hoc networks.

A backdoor may take the form of a hidden part of a program,[3] a separate program (e.g. Back Orifice may subvert the system through a rootkit), code in the firmware of the hardware,[4] or parts of an operating system such as Windows.[5][6][7] Trojan horses can be used to create vulnerabilities in a device. A Trojan horse may appear to be an entirely legitimate program, but when executed, it triggers an activity that may install a backdoor.[8] Although some are secretly installed, other backdoors are deliberate and widely known. These kinds of backdoors have "legitimate" uses such as providing the manufacturer with a way to restore user passwords.

Many systems that store information in the cloud lack adequate security measures. If many systems are connected within the cloud, hackers can gain access to all other platforms through the most vulnerable system.[9]

Default passwords (or other default credentials) can function as backdoors if they are not changed by the user. Some debugging features can also act as backdoors if they are not removed in the release version.[10]

In 1993, the United States government attempted to deploy an encryption system, the Clipper chip, with an explicit backdoor for law enforcement and national security access. The chip was unsuccessful.[11]

Overview

The threat of backdoors surfaced when multiuser and networked operating systems became widely adopted. Petersen and Turn discussed computer subversion in a paper published in the proceedings of the 1967 AFIPS Conference.[12] They noted a class of active infiltration attacks that use "trapdoor" entry points into the system to bypass security facilities and permit direct access to data. The use of the word trapdoor here clearly coincides with more recent definitions of a backdoor. However, since the advent of public key cryptography the term trapdoor has acquired a different meaning (see trapdoor function), and thus the term "backdoor" is now preferred for this sense. More generally, such security breaches were discussed at length in a RAND Corporation task force report published under ARPA sponsorship by J.P. Anderson and D.J. Edwards in 1970.[13]

A backdoor in a login system might take the form of a hard-coded user and password combination which gives access to the system. An example of this sort of backdoor was used as a plot device in the 1983 film WarGames, in which the architect of the "WOPR" computer system had inserted a hard-coded password which gave the user access to the system, and to undocumented parts of the system (in particular, a video game-like simulation mode and direct interaction with the artificial intelligence).
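As a minimal sketch of what such a hard-coded credential can look like, the Python fragment below adds a second acceptance path to an otherwise ordinary password check. The account table, the names `BACKDOOR_USER` and `BACKDOOR_PASSWORD`, and their values are all invented for illustration and are not taken from any real system.

```python
import hmac

# Hypothetical account database; a real system would store salted hashes.
USERS = {"alice": "correct horse"}

# Hypothetical hard-coded credentials, invented for this sketch.
BACKDOOR_USER = "maint"
BACKDOOR_PASSWORD = "open-sesame"


def _same(a: str, b: str) -> bool:
    """Compare two strings in constant time."""
    return hmac.compare_digest(a.encode(), b.encode())


def login(user: str, password: str) -> bool:
    """Return True if the credentials are accepted."""
    # The backdoor: this branch never consults the account database,
    # so it keeps working whatever the administrator configures.
    if _same(user, BACKDOOR_USER) and _same(password, BACKDOOR_PASSWORD):
        return True
    stored = USERS.get(user)
    return stored is not None and _same(password, stored)
```

Because the extra branch lives in the program rather than in the account data, removing or auditing user accounts does not close it; only inspecting the code (or the compiled binary) reveals it.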

Although the prevalence of backdoors in systems using proprietary software (software whose source code is not publicly available) is not widely acknowledged, such backdoors are nevertheless frequently exposed. Programmers have even succeeded in secretly installing large amounts of benign code as Easter eggs in programs, although such cases may involve official forbearance, if not actual permission.

A countermeasure against backdoors is free software, whose source code can be examined for potential backdoors, which usually makes it harder to "hide" a backdoor there. Combined with reproducible builds, one can also verify that a provided binary corresponds to the publicly available source code.

Politics and attribution

There are a number of cloak-and-dagger considerations that come into play when apportioning responsibility.

Covert backdoors sometimes masquerade as inadvertent defects (bugs) for reasons of plausible deniability. In some cases, these might begin life as actual bugs (inadvertent errors), which, once discovered, are deliberately left unfixed and undisclosed, whether by a rogue employee for personal advantage or with C-level executive awareness and oversight.

It is also possible for an entirely above-board corporation's technology base to be covertly and untraceably tainted by external agents (hackers), though this level of sophistication is thought to exist mainly at the level of nation state actors. For example, if a photomask obtained from a photomask supplier differs in a few gates from its photomask specification, a chip manufacturer would be hard-pressed to detect this if the chip were otherwise functionally silent; a covert rootkit running in the photomask etching equipment could introduce this discrepancy without the knowledge of the photomask manufacturer as well, and by such means one backdoor potentially leads to another. (This hypothetical scenario is essentially a silicon version of the undetectable compiler backdoor, discussed below.)

In general terms, the long dependency chains of the modern, highly specialized technological economy, with their innumerable human-controlled process points, make it difficult to pinpoint responsibility conclusively when a covert backdoor is unveiled.

Even direct admissions of responsibility must be scrutinized carefully if the confessing party is beholden to other powerful interests.

Examples

Worms

Many computer worms, such as Sobig and Mydoom, install a backdoor on the affected computer (generally a PC on broadband running Microsoft Windows and Microsoft Outlook). Such backdoors appear to be installed so that spammers can send junk e-mail from the infected machines. Others, such as the Sony/BMG rootkit, placed secretly on millions of music CDs through late 2005, are intended as DRM measures—and, in that case, as data-gathering agents, since both surreptitious programs they installed routinely contacted central servers.

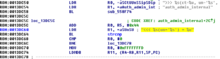

A sophisticated attempt to plant a backdoor in the Linux kernel, exposed in November 2003, added a small and subtle code change by subverting the revision control system.[14] In this case, a two-line change appeared to check the root access permissions of a caller to the sys_wait4 function, but because it used the assignment operator = instead of the equality check ==, it actually granted root permissions to the caller. This difference is easily overlooked, and could even be interpreted as an accidental typographical error rather than an intentional attack.[15]
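The kind of slip described above can be flagged mechanically. The following Python sketch is a rough heuristic, not a real static analyser: it scans C source text for `if (...)` conditions that contain a lone `=`. The `SUSPECT` string reproduces the two-line change as it is commonly quoted in reports of the incident; the function names and the regular expressions are assumptions made for this example, and compound assignments such as `+=` or conditions inside comments and strings are not handled.

```python
import re

# A lone "=" that is not part of ==, !=, <= or >=.
LONE_ASSIGN = re.compile(r"(?<![=!<>])=(?!=)")
IF_OPEN = re.compile(r"\bif\s*\(")


def if_conditions(c_source: str):
    """Yield the text between the outer parentheses of each `if (...)`."""
    for match in IF_OPEN.finditer(c_source):
        depth, i = 1, match.end()
        # Walk forward until the parenthesis opened by `if (` is closed.
        while i < len(c_source) and depth > 0:
            depth += {"(": 1, ")": -1}.get(c_source[i], 0)
            i += 1
        yield c_source[match.end():i - 1]


def suspicious_conditions(c_source: str) -> list:
    """Return the `if` conditions that contain an assignment."""
    return [c for c in if_conditions(c_source) if LONE_ASSIGN.search(c)]


# The change as commonly quoted: "current->uid = 0" assigns instead of comparing.
SUSPECT = (
    "if ((options == (__WCLONE|__WALL)) && (current->uid = 0))\n"
    "    retval = -EINVAL;"
)
# The same condition written as a genuine comparison.
HARMLESS = SUSPECT.replace("current->uid = 0", "current->uid == 0")
```

Calling `suspicious_conditions(SUSPECT)` returns the offending condition, while `suspicious_conditions(HARMLESS)` returns an empty list. Modern compilers offer comparable warnings for assignments used as truth values.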

In January 2014, a backdoor was discovered in certain Samsung Android products, like the Galaxy devices. The Samsung proprietary Android versions are fitted with a backdoor that provides remote access to the data stored on the device. In particular, the Samsung Android software that is in charge of handling the communications with the modem, using the Samsung IPC protocol, implements a class of requests known as remote file server (RFS) commands, that allows the backdoor operator to perform via modem remote I/O operations on the device hard disk or other storage. As the modem is running Samsung proprietary Android software, it is likely that it offers over-the-air remote control that could then be used to issue the RFS commands and thus to access the file system on the device.[16]

Object code backdoors

Harder-to-detect backdoors involve modifying object code rather than source code – object code is much harder to inspect, as it is designed to be machine-readable, not human-readable. These backdoors can be inserted either directly in the on-disk object code, or at some point during compilation, assembly, linking, or loading – in the latter case the backdoor never appears on disk, only in memory. Object code backdoors are difficult to detect by inspection of the object code, but are easily detected by simply checking for changes (differences), notably in length or in checksum, and in some cases can be detected or analyzed by disassembling the object code. Further, object code backdoors can be removed (assuming source code is available) by simply recompiling from source on a trusted system.
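The checksum comparison mentioned above can be sketched in a few lines of Python using the standard `hashlib` module. The helper names `sha256_of` and `is_unmodified` are invented for this example, and the sketch assumes that a known-good digest was recorded earlier on a trusted system and that the checking tool itself has not been tampered with.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 digest of a file as a hex string."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        # Read in chunks so large binaries need not fit in memory.
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_unmodified(path: Path, known_good_sha256: str) -> bool:
    """Compare a file's digest with a digest recorded on a trusted system."""
    return sha256_of(path) == known_good_sha256.lower()
```

A changed digest shows only that the binary differs from the recorded one; it does not say what was changed or why.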

Thus for such backdoors to avoid detection, all extant copies of a binary must be subverted, and any validation checksums must also be compromised, and source must be unavailable, to prevent recompilation. Alternatively, these other tools (length checks, diff, checksumming, disassemblers) can themselves be compromised to conceal the backdoor, for example detecting that the subverted binary is being checksummed and returning the expected value, not the actual value. To conceal these further subversions, the tools must also conceal the changes in themselves – for example, a subverted checksummer must also detect if it is checksumming itself (or other subverted tools) and return false values. This leads to extensive changes in the system and tools being needed to conceal a single change.

Because object code can be regenerated by recompiling (reassembling, relinking) the original source code, making a persistent object code backdoor (without modifying source code) requires subverting the compiler itself – so that when it detects that it is compiling the program under attack it inserts the backdoor – or alternatively the assembler, linker, or loader. As this requires subverting the compiler, this in turn can be fixed by recompiling the compiler, removing the backdoor insertion code. This defense can in turn be subverted by putting a source meta-backdoor in the compiler, so that when it detects that it is compiling itself it then inserts this meta-backdoor generator, together with the original backdoor generator for the original program under attack. After this is done, the source meta-backdoor can be removed, and the compiler recompiled from original source with the compromised compiler executable: the backdoor has been bootstrapped. This attack dates to Karger & Schell (1974), and was popularized in Thompson's 1984 article, entitled "Reflections on Trusting Trust";[17] it is hence colloquially known as the "Trusting Trust" attack. See compiler backdoors, below, for details. Analogous attacks can target lower levels of the system, such as the operating system, and can be inserted during the system booting process; these are also mentioned in Karger & Schell (1974), and now exist in the form of boot sector viruses.[18]

Asymmetric backdoors

A traditional backdoor is a symmetric backdoor: anyone that finds the backdoor can in turn use it. The notion of an asymmetric backdoor was introduced by Adam Young and Moti Yung in the Proceedings of Advances in Cryptology: Crypto '96. An asymmetric backdoor can only be used by the attacker who plants it, even if the full implementation of the backdoor becomes public (e.g., via publishing, being discovered and disclosed by reverse engineering, etc.). Also, it is computationally intractable to detect the presence of an asymmetric backdoor under black-box queries. This class of attacks has been termed kleptography; they can be carried out in software, hardware (for example, smartcards), or a combination of the two. The theory of asymmetric backdoors is part of a larger field now called cryptovirology. Notably, the NSA inserted a kleptographic backdoor into the Dual EC DRBG standard.[4][19][20]

There exists an experimental asymmetric backdoor in RSA key generation. This OpenSSL RSA backdoor, designed by Young and Yung, utilizes a twisted pair of elliptic curves, and has been made available.[21]

Compiler backdoors

A sophisticated form of black box backdoor is a compiler backdoor, where not only is a compiler subverted (to insert a backdoor in some other program, such as a login program), but it is further modified to detect when it is compiling itself and then insert both the backdoor insertion code (targeting the other program) and the code-modifying self-compilation logic, much as retroviruses infect their host. This can be done by modifying the source code, and the resulting compromised compiler (object code) can compile the original (unmodified) source code and insert itself: the exploit has been bootstrapped.

This attack was originally presented in Karger & Schell (1974, p. 52, section 3.4.5: "Trap Door Insertion"), a United States Air Force security analysis of Multics, which described such an attack on a PL/I compiler and called it a "compiler trap door". The authors also mention a variant in which the system initialization code, being complex and poorly understood, is modified to insert a backdoor during booting; they called this an "initialization trapdoor", and it is now known as a boot sector virus.[18]

This attack was then actually implemented and popularized by Ken Thompson, in his Turing Award acceptance speech in 1983 (published 1984), "Reflections on Trusting Trust",[17] which points out that trust is relative, and the only software one can truly trust is code where every step of the bootstrapping has been inspected. This backdoor mechanism is based on the fact that people only review source (human-written) code, and not compiled machine code (object code). A program called a compiler is used to create the second from the first, and the compiler is usually trusted to do an honest job.

Thompson's paper describes a modified version of the Unix C compiler that would:

- Put an invisible backdoor in the Unix login command when it noticed that the login program was being compiled, and as a twist

- Also add this feature undetectably to future compiler versions upon their compilation as well.

Because the compiler itself was a compiled program, users would be extremely unlikely to notice the machine code instructions that performed these tasks. (Because of the second task, the compiler's source code would appear "clean".) What's worse, in Thompson's proof of concept implementation, the subverted compiler also subverted the analysis program (the disassembler), so that anyone who examined the binaries in the usual way would not actually see the real code that was running, but something else instead.
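The two behaviours listed above can be illustrated with a deliberately tiny model in Python. This is not Thompson's code: "sources" and "binaries" are plain strings, `run_compiler` stands in for executing a compiler binary, and every name and marker string here is invented for the sketch.

```python
# Toy sources (all hypothetical).
CLEAN_COMPILER_SRC = "compiler v1"
LOGIN_SRC = "login: accept only the stored password"

# Markers standing in for injected machine code.
BACKDOOR = " | also accept a master password"
TROJAN = " | trojan: patch login, re-insert self"

# A compiler binary honestly built from the clean compiler source.
HONEST_BINARY = f"BIN[{CLEAN_COMPILER_SRC}]"


def run_compiler(compiler_binary: str, source: str) -> str:
    """Model running `compiler_binary` on `source`; return the output binary."""
    output = f"BIN[{source}]"  # honest translation
    if TROJAN in compiler_binary:
        if source.startswith("login"):
            # Task 1: backdoor the login program.
            output = f"BIN[{source}{BACKDOOR}]"
        elif source.startswith("compiler"):
            # Task 2: re-insert the trojan into any compiler being built.
            output = f"BIN[{source}{TROJAN}]"
    return output


# Step 1: the attacker compiles a trojaned compiler source once.
evil_binary = run_compiler(HONEST_BINARY, CLEAN_COMPILER_SRC + TROJAN)
# Step 2: the trojaned source is discarded; only clean source is ever seen again.
rebuilt = run_compiler(evil_binary, CLEAN_COMPILER_SRC)
# Step 3: the rebuilt compiler still backdoors login.
login_binary = run_compiler(rebuilt, LOGIN_SRC)
```

In this model `rebuilt` is identical to `evil_binary` even though it was built from clean source, and `login_binary` contains the `BACKDOOR` marker although `LOGIN_SRC` does not, which is the sense in which the source code "appears clean".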

An updated analysis of the original exploit is given in Karger & Schell (2002, Section 3.2.4: Compiler trap doors), and a historical overview and survey of the literature is given in Wheeler (2009, Section 2: Background and related work).

Occurrences

Thompson's version was, officially, never released into the wild. It is believed, however, that a version was distributed to BBN and at least one use of the backdoor was recorded.[22] There are scattered anecdotal reports of such backdoors in subsequent years.

In August 2009, an attack of this kind was discovered by Sophos labs. The W32/Induc-A virus infected the program compiler for Delphi, a Windows programming language. The virus introduced its own code to the compilation of new Delphi programs, allowing it to infect and propagate to many systems, without the knowledge of the software programmer. An attack that propagates by building its own Trojan horse can be especially hard to discover. It is believed that the Induc-A virus had been propagating for at least a year before it was discovered.[23]

Countermeasures

Once a system has been compromised with a backdoor or Trojan horse, such as the Trusting Trust compiler, it is very hard for the "rightful" user to regain control of the system – typically one should rebuild a clean system and transfer data (but not executables) over. However, several practical weaknesses in the Trusting Trust scheme have been suggested. For example, a sufficiently motivated user could painstakingly review the machine code of the untrusted compiler before using it. As mentioned above, there are ways to hide the Trojan horse, such as subverting the disassembler; but there are ways to counter that defense, too, such as writing your own disassembler from scratch.

A generic method to counter trusting trust attacks is called Diverse Double-Compiling (DDC). The method requires a different compiler and the source code of the compiler-under-test. That source, compiled with both compilers, results in two different stage-1 compilers, which however should have the same behavior. Thus the same source compiled with both stage-1 compilers must then result in two identical stage-2 compilers. A formal proof is given that the latter comparison guarantees that the purported source code and executable of the compiler-under-test correspond, under some assumptions. This method was applied by its author to verify that the C compiler of the GCC suite (v. 3.0.4) contained no trojan, using icc (v. 11.0) as the different compiler.[24]
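The comparison described above can be sketched with a toy model in Python. It follows the two-stage description in this section rather than the full procedure and proof in Wheeler's dissertation, and it rests on strong simplifying assumptions: binaries are strings, compilation is deterministic, and a trojan is just a marker that a subverted compiler appends when it compiles a compiler. The binary format and all names are invented for this example.

```python
TROJAN = "+trojan"


def run_compiler(compiler_binary: str, source: str) -> str:
    """Model running a toy compiler binary on `source`.

    A toy binary has the form "<style of its producer>:<source it was
    built from>", optionally followed by TROJAN.
    """
    _, built_from = compiler_binary.split(":", 1)
    trojaned = built_from.endswith(TROJAN)
    if trojaned:
        built_from = built_from[: -len(TROJAN)]
    # A binary's behaviour (its code generation style) is fixed by its source.
    style = built_from.split("style=", 1)[1]
    output = f"{style}:{source}"
    if trojaned and source.startswith("compiler"):
        output += TROJAN  # the trojan propagates into compilers it builds
    return output


def ddc_matches(trusted_binary: str, binary_under_test: str, source: str) -> bool:
    """Return True if both stage-2 compilers are identical."""
    stage1_trusted = run_compiler(trusted_binary, source)
    stage1_tested = run_compiler(binary_under_test, source)
    stage2_trusted = run_compiler(stage1_trusted, source)
    stage2_tested = run_compiler(stage1_tested, source)
    return stage2_trusted == stage2_tested


SOURCE_A = "compiler style=A"            # source of the compiler under test
TRUSTED = "X:compiler style=T"           # a different, trusted compiler
CLEAN_A = "A:compiler style=A"           # honest binary of compiler A
TROJANED_A = "A:compiler style=A" + TROJAN
```

Here `ddc_matches(TRUSTED, CLEAN_A, SOURCE_A)` is true and `ddc_matches(TRUSTED, TROJANED_A, SOURCE_A)` is false. The two stage-1 binaries differ in either case, because they were emitted by compilers with different code generation; only the stage-2 comparison is meaningful.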

In practice such verifications are not done by end users, except in extreme circumstances of intrusion detection and analysis, due to the rarity of such sophisticated attacks, and because programs are typically distributed in binary form. Removing backdoors (including compiler backdoors) is typically done by simply rebuilding a clean system. However, the sophisticated verifications are of interest to operating system vendors, to ensure that they are not distributing a compromised system, and in high-security settings, where such attacks are a realistic concern.

List of known backdoors

- Back Orifice was created in 1998 by hackers from Cult of the Dead Cow group as a remote administration tool. It allowed Windows computers to be remotely controlled over a network and parodied the name of Microsoft's BackOffice.

- The Dual EC DRBG cryptographically secure pseudorandom number generator was revealed in 2013 to possibly have a kleptographic backdoor deliberately inserted by NSA, who also had the private key to the backdoor.[4][20]

- Several backdoors in unlicensed copies of WordPress plug-ins were discovered in March 2014.[25] They were inserted as obfuscated JavaScript code and silently created, for example, an admin account in the website database. A similar scheme was later exposed in a Joomla plugin.[26]

- Borland Interbase versions 4.0 through 6.0 had a hard-coded backdoor, put there by the developers. The server code contains a compiled-in backdoor account (username: politically, password: correct), which could be accessed over a network connection; a user logging in with this backdoor account could take full control over all Interbase databases. The backdoor was detected in 2001 and a patch was released.[27][28]

- A backdoor was inserted in 2008 into Juniper Networks' ScreenOS firmware, versions 6.2.0r15 to 6.2.0r18 and 6.3.0r12 to 6.3.0r20,[29] that gives any user administrative access when a special master password is used.[30]

- Several backdoors were discovered in C-DATA Optical Line Termination (OLT) devices.[31] Researchers released the findings without notifying C-DATA because they believed the backdoors had been intentionally placed by the vendor.[32]

See also

- Backdoor:Win32.Hupigon

- Backdoor.Win32.Seed

- Hardware backdoor

- Titanium (malware)

- Michigan Collegiate Cyber Defense Network 2020, Backdoor left in blue-team systems

References

- Eckersley, Peter; Portnoy, Erica (8 May 2017). "Intel's Management Engine is a security hazard, and users need a way to disable it". www.eff.org. EFF. Retrieved 15 May 2017.

- Hoffman, Chris. "Intel Management Engine, Explained: The Tiny Computer Inside Your CPU". How-To Geek. Retrieved July 13, 2018.

- Chris Wysopal, Chris Eng. "Static Detection of Application Backdoors" (PDF). Veracode. Retrieved 2015-03-14.

- "How a Crypto 'Backdoor' Pitted the Tech World Against the NSA". Wired. 2013-09-24. Retrieved 5 April 2018.

- Ashok, India (21 June 2017). "Hackers using NSA malware DoublePulsar to infect Windows PCs with Monero mining Trojan". International Business Times UK. Retrieved 1 July 2017.

- "Microsoft Back Doors". GNU Operating System. Retrieved 1 July 2017.

- "NSA backdoor detected on >55,000 Windows boxes can now be remotely removed". Ars Technica. 2017-04-25. Retrieved 1 July 2017.

- "Backdoors and Trojan Horses: By the Internet Security Systems' X-Force". Information Security Technical Report. 6 (4): 31–57. 2001-12-01. doi:10.1016/S1363-4127(01)00405-8. ISSN 1363-4127.

- Linthicum, David. "Caution! The cloud's backdoor is your datacenter". InfoWorld. Retrieved 2018-11-29.

- "Bogus story: no Chinese backdoor in military chip". blog.erratasec.com. Retrieved 5 April 2018.

- https://www.eff.org/deeplinks/2015/04/clipper-chips-birthday-looking-back-22-years-key-escrow-failures Clipper a failure.

- H.E. Petersen, R. Turn. "System Implications of Information Privacy". Proceedings of the AFIPS Spring Joint Computer Conference, vol. 30, pages 291–300. AFIPS Press: 1967.

- Security Controls for Computer Systems, Technical Report R-609, WH Ware, ed, Feb 1970, RAND Corp.

- Larry McVoy (November 5, 2003) Linux-Kernel Archive: Re: BK2CVS problem Archived 2003-11-27 at the Wayback Machine. ussg.iu.edu

- Thwarted Linux backdoor hints at smarter hacks; Kevin Poulsen; SecurityFocus, 6 November 2003.

- "SamsungGalaxyBackdoor - Replicant". redmine.replicant.us. Retrieved 5 April 2018.

- Thompson, Ken (August 1984). "Reflections on Trusting Trust" (PDF). Communications of the ACM. 27 (8): 761–763. doi:10.1145/358198.358210.

- Karger & Schell 2002.

- "The strange connection between the NSA and an Ontario tech firm". Retrieved 5 April 2018 – via The Globe and Mail.

- Perlroth, Nicole; Larson, Jeff; Shane, Scott (5 September 2013). "N.S.A. Able to Foil Basic Safeguards of Privacy on Web". The New York Times. Retrieved 5 April 2018.

- "Malicious Cryptography: Cryptovirology and Kleptography". www.cryptovirology.com. Retrieved 5 April 2018.

- Jargon File entry for "backdoor" at catb.org, describes Thompson compiler hack

- Compile-a-virus — W32/Induc-A Sophos labs on the discovery of the Induc-A virus

- Wheeler 2009.

- "Unmasking "Free" Premium WordPress Plugins". Sucuri Blog. 2014-03-26. Retrieved 3 March 2015.

- Sinegubko, Denis (2014-04-23). "Joomla Plugin Constructor Backdoor". Sucuri. Retrieved 13 March 2015.

- "Vulnerability Note VU#247371". Vulnerability Note Database. Retrieved 13 March 2015.

- "Interbase Server Contains Compiled-in Back Door Account". CERT. Retrieved 13 March 2015.

- "Researchers confirm backdoor password in Juniper firewall code". Ars Technica. 2015-12-21. Retrieved 2016-01-16.

- "Zagrożenia tygodnia 2015-W52 - Spece.IT". Spece.IT (in Polish). 2015-12-23. Retrieved 2016-01-16.

- https://pierrekim.github.io/blog/2020-07-07-cdata-olt-0day-vulnerabilities.html

- https://www.zdnet.com/article/backdoor-accounts-discovered-in-29-ftth-devices-from-chinese-vendor-c-data/

Further reading

- Karger, Paul A.; Schell, Roger R. (June 1974). Multics Security Evaluation: Vulnerability Analysis (PDF). Vol II.

- Karger, Paul A.; Schell, Roger R. (September 18, 2002). Thirty Years Later: Lessons from the Multics Security Evaluation (PDF). Computer Security Applications Conference, 2002. Proceedings. 18th Annual. IEEE. pp. 119–126. doi:10.1109/CSAC.2002.1176285. Retrieved 2014-11-08.

- Wheeler, David A. (7 December 2009). Fully Countering Trusting Trust through Diverse Double-Compiling (Ph.D.). Fairfax, VA: George Mason University. Retrieved 2014-11-09.

External links

- Finding and Removing Backdoors

- Three Archaic Backdoor Trojan Programs That Still Serve Great Pranks

- Backdoors removal — List of backdoors and their removal instructions.

- FAQ Farm's Backdoors FAQ: wiki question and answer forum

- List of backdoors and Removal