Dynamic time warping

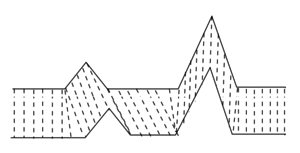

In time series analysis, dynamic time warping (DTW) is an algorithm for measuring similarity between two temporal sequences that may vary in speed. For instance, similarities in walking could be detected using DTW, even if one person was walking faster than the other, or if there were accelerations and decelerations during the course of an observation. DTW has been applied to temporal sequences of video, audio, and graphics data; indeed, any data that can be turned into a linear sequence can be analyzed with DTW. A well-known application has been automatic speech recognition, to cope with different speaking speeds. Other applications include speaker recognition and online signature recognition. It can also be used in partial shape matching applications.

In general, DTW is a method that calculates an optimal match between two given sequences (e.g. time series) with certain restrictions and rules:

- Every index from the first sequence must be matched with one or more indices from the other sequence, and vice versa

- The first index from the first sequence must be matched with the first index from the other sequence (but it does not have to be its only match)

- The last index from the first sequence must be matched with the last index from the other sequence (but it does not have to be its only match)

- The mapping of the indices from the first sequence to indices from the other sequence must be monotonically increasing, and vice versa, i.e. if j > i are indices from the first sequence, then there must not be two indices l > k in the other sequence, such that index i is matched with index l and index j is matched with index k, and vice versa

The optimal match is the match that satisfies all the restrictions and rules and has the minimal cost, where the cost is computed as the sum, over all matched pairs of indices, of the absolute differences between their values.

The sequences are "warped" non-linearly in the time dimension to determine a measure of their similarity independent of certain non-linear variations in the time dimension. This sequence alignment method is often used in time series classification. Although DTW measures a distance-like quantity between two given sequences, it doesn't guarantee the triangle inequality to hold.
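
A small numeric check illustrates the failure of the triangle inequality. The sketch below assumes the absolute-difference cost described above; the helper name `dtw` and the three example sequences are chosen here purely for illustration.

```python
import math

def dtw(s, t):
    """DTW cost with an absolute-difference local cost (illustrative helper)."""
    n, m = len(s), len(t)
    D = [[math.inf] * (m + 1) for _ in range(n + 1)]
    D[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = abs(s[i - 1] - t[j - 1])
            D[i][j] = cost + min(D[i - 1][j], D[i][j - 1], D[i - 1][j - 1])
    return D[n][m]

# Three short example sequences (hypothetical data).
a, b, c = [0], [1, 2], [2, 3, 3]
d_ab, d_bc, d_ac = dtw(a, b), dtw(b, c), dtw(a, c)
print(d_ab, d_bc, d_ac)          # 3.0 3.0 8.0
print(d_ac <= d_ab + d_bc)       # False: going "via b" is cheaper than a to c directly
```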

In addition to a similarity measure between the two sequences, a so-called "warping path" is produced; by warping according to this path, the two signals may be aligned in time. The signal with an original set of points X(original), Y(original) is transformed to X(warped), Y(warped). This finds applications in genetic sequence and audio synchronisation. In a related technique, sequences of varying speed may be averaged; see the average sequence section.
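
One common way to obtain a warping path is to keep the full cumulative-cost table and backtrack from the last cell to the first. The following is a minimal sketch under that assumption, using the absolute-difference cost; the function name `dtw_path` and the tie-breaking rule (prefer the diagonal step) are choices made for this example.

```python
import math

def dtw_path(s, t):
    """Return (cost, path) for two non-empty sequences.

    path is a list of 0-based (i, j) pairs, from (0, 0) to (len(s)-1, len(t)-1).
    """
    n, m = len(s), len(t)
    D = [[math.inf] * (m + 1) for _ in range(n + 1)]
    D[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = abs(s[i - 1] - t[j - 1])
            D[i][j] = cost + min(D[i - 1][j], D[i][j - 1], D[i - 1][j - 1])

    # Backtrack: repeatedly move to the cheapest of the three predecessors.
    i, j, path = n, m, []
    while i > 0 and j > 0:
        path.append((i - 1, j - 1))
        candidates = [(D[i - 1][j - 1], i - 1, j - 1),  # diagonal (preferred on ties)
                      (D[i - 1][j], i - 1, j),
                      (D[i][j - 1], i, j - 1)]
        _, i, j = min(candidates, key=lambda c: c[0])
    path.reverse()
    return D[n][m], path

cost, path = dtw_path([1, 2, 3, 4], [1, 1, 2, 3, 4])
print(cost)   # 0.0
print(path)   # [(0, 0), (0, 1), (1, 2), (2, 3), (3, 4)]
```

Reading the two sequences along `path` gives the time-aligned (warped) versions of both signals.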

This is conceptually very similar to the Needleman–Wunsch algorithm.

Implementation

This example illustrates the implementation of the dynamic time warping algorithm when the two sequences s and t are strings of discrete symbols. For two symbols x and y, d(x, y) is a distance between the symbols, e.g. d(x, y) = |x - y|.

    int DTWDistance(s: array [1..n], t: array [1..m]) {
        DTW := array [0..n, 0..m]

        for i := 0 to n
            for j := 0 to m
                DTW[i, j] := infinity
        DTW[0, 0] := 0

        for i := 1 to n
            for j := 1 to m
                cost := d(s[i], t[j])
                DTW[i, j] := cost + minimum(DTW[i-1, j  ],    // insertion
                                            DTW[i  , j-1],    // deletion
                                            DTW[i-1, j-1])    // match

        return DTW[n, m]
    }

where DTW[i, j] is the distance between s[1:i] and t[1:j] with the best alignment.
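
For readers who prefer runnable code, the pseudocode above can be transcribed into Python roughly as follows. This is a sketch, not a reference implementation: the function name `dtw_distance` and the default distance `abs(x - y)` are assumptions of this example, and indices are shifted because Python sequences are 0-based.

```python
import math

def dtw_distance(s, t, d=lambda x, y: abs(x - y)):
    """DTW distance between sequences s and t under the symbol distance d."""
    n, m = len(s), len(t)
    # dtw[i][j] holds the best-alignment cost between s[:i] and t[:j].
    dtw = [[math.inf] * (m + 1) for _ in range(n + 1)]
    dtw[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = d(s[i - 1], t[j - 1])
            dtw[i][j] = cost + min(dtw[i - 1][j],      # insertion
                                   dtw[i][j - 1],      # deletion
                                   dtw[i - 1][j - 1])  # match
    return dtw[n][m]

# Numeric sequences: the repeated 2 is absorbed by the warping.
print(dtw_distance([1, 2, 3], [1, 2, 2, 3]))

# Strings of discrete symbols with a 0/1 mismatch distance.
print(dtw_distance("aab", "ab", d=lambda x, y: 0 if x == y else 1))
```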

We sometimes want to add a locality constraint. That is, we require that if s[i] is matched with t[j], then |i - j| is no larger than w, a window parameter.

We can easily modify the above algorithm to add a locality constraint. However, the modification works only if |n - m| is no larger than w, i.e. the end point is within the window length from the diagonal. To make the algorithm work, the window parameter w must be adapted so that |n - m| ≤ w (see the line marked with (*) in the code).

    int DTWDistance(s: array [1..n], t: array [1..m], w: int) {
        DTW := array [0..n, 0..m]

        w := max(w, abs(n-m)) // adapt window size (*)

        for i := 0 to n
            for j := 0 to m
                DTW[i, j] := infinity
        DTW[0, 0] := 0
        for i := 1 to n
            for j := max(1, i-w) to min(m, i+w)
                DTW[i, j] := 0

        for i := 1 to n
            for j := max(1, i-w) to min(m, i+w)
                cost := d(s[i], t[j])
                DTW[i, j] := cost + minimum(DTW[i-1, j  ],    // insertion
                                            DTW[i  , j-1],    // deletion
                                            DTW[i-1, j-1])    // match

        return DTW[n, m]
    }
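
The windowed variant can be sketched in Python in the same way. The function name `dtw_distance_window` and the absolute-difference cost are assumptions of this example; cells outside the window simply stay at infinity, so the intermediate zero-initialisation pass of the pseudocode is not needed here.

```python
import math

def dtw_distance_window(s, t, w):
    """DTW distance where s[i] may only be matched with t[j] if |i - j| <= w."""
    n, m = len(s), len(t)
    w = max(w, abs(n - m))  # adapt window size so that cell (n, m) is reachable
    dtw = [[math.inf] * (m + 1) for _ in range(n + 1)]
    dtw[0][0] = 0.0
    for i in range(1, n + 1):
        # Only cells inside the band around the diagonal are evaluated.
        for j in range(max(1, i - w), min(m, i + w) + 1):
            cost = abs(s[i - 1] - t[j - 1])
            dtw[i][j] = cost + min(dtw[i - 1][j],      # insertion
                                   dtw[i][j - 1],      # deletion
                                   dtw[i - 1][j - 1])  # match
    return dtw[n][m]

s, t = [1, 1, 1, 5], [1, 5, 5, 5]
for w in (0, 1, 3):
    print(w, dtw_distance_window(s, t, w))   # 8.0, then 4.0, then 0.0
```

A narrow window forces the alignment to stay close to the diagonal, so the distance can only grow (or stay equal) as w shrinks.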

Warping properties

The DTW algorithm produces a discrete matching between existing elements of one series and those of another. In other words, it does not allow time-scaling of segments within the sequence. Other methods allow continuous warping. For example, Correlation Optimized Warping (COW) divides the sequence into uniform segments that are scaled in time using linear interpolation, to produce the best matching warping. The segment scaling can create new elements, by time-scaling segments either down or up, and thus produces a more sensitive warping than DTW's discrete matching of raw elements.

Complexity

The time complexity of the DTW algorithm is O(NM), where N and M are the lengths of the two input sequences. Assuming that N ≥ M, the time complexity can be said to be O(N^2). The same is true for space complexity. The 50-year-old quadratic time bound was recently broken: an algorithm due to Gold and Sharir enables computing DTW in subquadratic time and space.[2]
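
The quadratic space figure applies when the full table is kept, for example to recover the warping path. If only the distance is needed, each row of the table depends only on the row before it, so two rows are enough. The sketch below illustrates this; the function name `dtw_distance_two_rows` and the absolute-difference cost are assumptions of this example.

```python
import math

def dtw_distance_two_rows(s, t):
    """DTW distance using O(len(t)) extra memory instead of the full table."""
    n, m = len(s), len(t)
    # prev plays the role of row i-1 of the table; row 0 is [0, inf, inf, ...].
    prev = [0.0] + [math.inf] * m
    for i in range(1, n + 1):
        curr = [math.inf] * (m + 1)
        for j in range(1, m + 1):
            cost = abs(s[i - 1] - t[j - 1])
            curr[j] = cost + min(prev[j], curr[j - 1], prev[j - 1])
        prev = curr
    return prev[m]

print(dtw_distance_two_rows([1, 1, 1, 5], [1, 5, 5, 5]))
```

The running time is unchanged; only the memory footprint is reduced, at the price of no longer being able to backtrack a path.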

Fast computation

Fast techniques for computing DTW include PrunedDTW,[3] SparseDTW,[4] FastDTW,[5] and MultiscaleDTW.[6][7] A common task, retrieval of similar time series, can be accelerated by using lower bounds such as LB_Keogh[8] or LB_Improved.[9] In a survey, Wang et al. reported slightly better results with the LB_Improved lower bound than the LB_Keogh bound, and found that other techniques were inefficient.[10]
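
To give an idea of how such a lower bound works, the sketch below computes an LB_Keogh-style bound for two equal-length sequences under a window w. LB_Keogh is usually stated with squared differences; this example instead adapts it to the absolute-difference cost used in this article, which is an assumption of the sketch, as are the function names `lb_keogh` and `dtw_window`.

```python
import math

def dtw_window(s, t, w):
    """Window-constrained DTW with absolute-difference cost (for comparison)."""
    n, m = len(s), len(t)
    w = max(w, abs(n - m))
    D = [[math.inf] * (m + 1) for _ in range(n + 1)]
    D[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(max(1, i - w), min(m, i + w) + 1):
            cost = abs(s[i - 1] - t[j - 1])
            D[i][j] = cost + min(D[i - 1][j], D[i][j - 1], D[i - 1][j - 1])
    return D[n][m]

def lb_keogh(q, c, w):
    """Cheap lower bound on dtw_window(q, c, w) for equal-length q and c."""
    if len(q) != len(c):
        raise ValueError("this sketch assumes equal-length sequences")
    total = 0.0
    for i, x in enumerate(c):
        # Envelope of q over the indices that c[i] is allowed to match.
        neighbourhood = q[max(0, i - w): i + w + 1]
        lower, upper = min(neighbourhood), max(neighbourhood)
        if x > upper:
            total += x - upper
        elif x < lower:
            total += lower - x
    return total

q, c = [1, 1, 1, 5], [1, 5, 5, 5]
print(lb_keogh(q, c, 1), dtw_window(q, c, 1))
```

In a nearest-neighbour search, a candidate whose lower bound already exceeds the best distance found so far can be discarded without running the full DTW computation.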

Average sequence

Averaging for dynamic time warping is the problem of finding an average sequence for a set of sequences. NLAAF[11] is an exact method to average two sequences using DTW. For more than two sequences, the problem is related to multiple alignment and requires heuristics. DBA[12] is currently a reference method to average a set of sequences consistently with DTW. COMASA[13] efficiently randomizes the search for the average sequence, using DBA as a local optimization process.
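
The general idea behind a DBA-style refinement can be sketched as follows: align every sequence to the current average with DTW, collect for each position of the average the values matched to it, and replace that position by their mean. The code below shows a single such update step. It is a simplified illustration rather than the published algorithm: the function names are hypothetical, and a squared-difference local cost is assumed so that the arithmetic mean is the natural update.

```python
import math

def dtw_path(a, b):
    """Warping path between non-empty a and b (squared-difference cost)."""
    n, m = len(a), len(b)
    D = [[math.inf] * (m + 1) for _ in range(n + 1)]
    D[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = (a[i - 1] - b[j - 1]) ** 2
            D[i][j] = cost + min(D[i - 1][j - 1], D[i - 1][j], D[i][j - 1])
    i, j, path = n, m, []
    while i > 0 and j > 0:
        path.append((i - 1, j - 1))
        candidates = [(D[i - 1][j - 1], i - 1, j - 1),
                      (D[i - 1][j], i - 1, j),
                      (D[i][j - 1], i, j - 1)]
        _, i, j = min(candidates, key=lambda c: c[0])
    return path[::-1]

def dba_update(average, sequences):
    """One refinement step: re-estimate each point of `average`."""
    buckets = [[] for _ in average]
    for seq in sequences:
        for i, j in dtw_path(average, seq):
            buckets[i].append(seq[j])   # seq[j] is aligned with average[i]
    # Every index of `average` is matched at least once per sequence,
    # so no bucket is empty as long as `sequences` is non-empty.
    return [sum(b) / len(b) for b in buckets]

print(dba_update([0, 0, 0], [[1, 2, 3], [1, 2, 3]]))   # [1.0, 2.0, 3.0]
```

In practice the update would be repeated, starting from some initial average, until the average stops changing appreciably.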

Supervised learning

A nearest-neighbour classifier can achieve state-of-the-art performance when using dynamic time warping as a distance measure.[14]
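
A one-nearest-neighbour classifier with DTW as the distance can be sketched in a few lines. The function names, the labels, and the tiny training set below are made up for illustration, and the absolute-difference cost is assumed.

```python
import math

def dtw_distance(s, t):
    """DTW distance with absolute-difference cost."""
    n, m = len(s), len(t)
    D = [[math.inf] * (m + 1) for _ in range(n + 1)]
    D[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = abs(s[i - 1] - t[j - 1])
            D[i][j] = cost + min(D[i - 1][j], D[i][j - 1], D[i - 1][j - 1])
    return D[n][m]

def nearest_neighbour_label(query, training_set):
    """training_set is a list of (sequence, label) pairs."""
    _, label = min(training_set, key=lambda pair: dtw_distance(query, pair[0]))
    return label

# Hypothetical training data: one "bump" shape and one "zigzag" shape.
training_set = [
    ([0, 0, 1, 2, 1, 0, 0], "bump"),
    ([0, 1, 0, 1, 0, 1, 0], "zigzag"),
]
# A shorter, differently paced bump is still closest to the "bump" example.
print(nearest_neighbour_label([0, 1, 2, 2, 1, 0], training_set))   # bump
```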

Alternative approaches

In functional data analysis, time series are regarded as discretizations of smooth (differentiable) functions of time. By viewing the observed samples as smooth functions, one can utilize continuous mathematics for analyzing data.[15] Smoothness and monotonicity of time warp functions may be obtained, for instance, by integrating a time-varying radial basis function, which makes the warp a one-dimensional diffeomorphism.[16] Optimal nonlinear time warping functions are computed by minimizing a measure of distance of the set of functions to their warped average. Roughness penalty terms for the warping functions may be added, e.g., by constraining the size of their curvature. The resultant warping functions are smooth, which facilitates further processing. This approach has been successfully applied to analyze patterns and variability of speech movements.[17][18]

Another related approach is the hidden Markov model (HMM): it has been shown that the Viterbi algorithm used to search for the most likely path through the HMM is equivalent to stochastic DTW.[19][20][21]

DTW and related warping methods are typically used as pre- or post-processing steps in data analyses. If the observed sequences contain both random variation in their values (shape of observed sequences) and (random) temporal misalignment, the warping may overfit to noise, leading to biased results. A simultaneous model formulation with random variation in both values (vertical) and time-parametrization (horizontal) is an example of a nonlinear mixed-effects model.[22] In human movement analysis, simultaneous nonlinear mixed-effects modeling has been shown to produce superior results compared to DTW.[23]

Open-source software

- The lbimproved C++ library implements Fast Nearest-Neighbor Retrieval algorithms under the GNU General Public License (GPL). It also provides a C++ implementation of dynamic time warping, as well as various lower bounds.

- The FastDTW library is a Java implementation of DTW and a FastDTW implementation that provides optimal or near-optimal alignments with an O(N) time and memory complexity, in contrast to the O(N^2) requirement for the standard DTW algorithm. FastDTW uses a multilevel approach that recursively projects a solution from a coarser resolution and refines the projected solution.

- FastDTW fork (Java) published to Maven Central.

- The DTW suite provides Python (dtw-python) and R packages (dtw) with a comprehensive coverage of the DTW algorithm family members, including a variety of recursion rules (also called step patterns), constraints, and substring matching.

- The mlpy Python library implements DTW.

- The pydtw Python library implements the Manhattan and Euclidean flavoured DTW measures including the LB_Keogh lower bounds.

- The cudadtw C++/CUDA library implements subsequence alignment of Euclidean-flavoured DTW and z-normalized Euclidean distance similar to the popular UCR-Suite on CUDA-enabled accelerators.

- The JavaML machine learning library implements DTW.

- The ndtw C# library implements DTW with various options.

- Sketch-a-Char uses Greedy DTW (implemented in JavaScript) as part of a LaTeX symbol classifier program.

- MatchBox implements DTW to match mel-frequency cepstral coefficients of audio signals.

- Sequence averaging: a GPL Java implementation of DBA.[12]

- The Gesture Recognition Toolkit (GRT), a C++ real-time gesture-recognition toolkit, implements DTW.

- The PyHubs software package implements DTW and nearest-neighbour classifiers, as well as their extensions (hubness-aware classifiers).

- The simpledtw Python library implements the classic O(NM) dynamic programming algorithm and is based on NumPy. It supports values of any dimension, as well as custom norm functions for the distances. It is licensed under the MIT license.

- The tslearn Python library implements DTW in the time-series context.

- The cuTWED CUDA Python library implements an improved Time Warp Edit Distance that uses only linear memory and is reported to give large speedups.

- DynamicAxisWarping.jl is a Julia implementation of DTW and related algorithms such as FastDTW, SoftDTW, GeneralDTW and DTW barycenters.

Applications

Spoken-word recognition

Due to different speaking rates, a non-linear fluctuation occurs in the speech pattern along the time axis, which needs to be eliminated.[24] DP matching is a pattern-matching algorithm based on dynamic programming (DP), which uses a time-normalization effect, where the fluctuations in the time axis are modeled using a non-linear time-warping function. Considering any two speech patterns, we can get rid of their timing differences by warping the time axis of one so that the maximal coincidence is attained with the other. However, if the warping function is allowed to take any possible value, very little distinction can be made between words belonging to different categories. So, to enhance the distinction between words belonging to different categories, restrictions were imposed on the slope of the warping function.

Correlation power analysis

Unstable clocks are used to defeat naive power analysis. Several techniques are used to counter this defense, one of which is dynamic time warping.

References

- Olsen, NL; Markussen, B; Raket, LL (2018), "Simultaneous inference for misaligned multivariate functional data", Journal of the Royal Statistical Society Series C, 67 (5): 1147–76, doi:10.1111/rssc.12276

- Gold, Omer; Sharir, Micha (2018). "Dynamic Time Warping and Geometric Edit Distance: Breaking the Quadratic Barrier". Association for Computing Machinery. 14 (4). doi:10.1145/3230734.

- Silva, D. F., Batista, G. E. A. P. A. (2015). Speeding Up All-Pairwise Dynamic Time Warping Matrix Calculation.

- Al-Naymat, G., Chawla, S., Taheri, J. (2012). SparseDTW: A Novel Approach to Speed up Dynamic Time Warping.

- Stan Salvador, Philip Chan, FastDTW: Toward Accurate Dynamic Time Warping in Linear Time and Space. KDD Workshop on Mining Temporal and Sequential Data, pp. 70–80, 2004.

- Meinard Müller, Henning Mattes, and Frank Kurth (2006). An Efficient Multiscale Approach to Audio Synchronization. Proceedings of the International Conference on Music Information Retrieval (ISMIR), pp. 192–197.

- Thomas Prätzlich, Jonathan Driedger, and Meinard Müller (2016). Memory-Restricted Multiscale Dynamic Time Warping. Proceedings of the IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), pp. 569–573.

- Keogh, E.; Ratanamahatana, C. A. (2005). "Exact indexing of dynamic time warping". Knowledge and Information Systems. 7 (3): 358–386. doi:10.1007/s10115-004-0154-9.

- Lemire, D. (2009). "Faster Retrieval with a Two-Pass Dynamic-Time-Warping Lower Bound". Pattern Recognition. 42 (9): 2169–2180. arXiv:0811.3301. doi:10.1016/j.patcog.2008.11.030.

- Wang, Xiaoyue; et al. "Experimental comparison of representation methods and distance measures for time series data". Data Mining and Knowledge Discovery. 2010: 1–35.

- Gupta, L.; Molfese, D. L.; Tammana, R.; Simos, P. G. (1996). "Nonlinear alignment and averaging for estimating the evoked potential". IEEE Transactions on Biomedical Engineering. 43 (4): 348–356. doi:10.1109/10.486255. PMID 8626184.

- Petitjean, F. O.; Ketterlin, A.; Gançarski, P. (2011). "A global averaging method for dynamic time warping, with applications to clustering". Pattern Recognition. 44 (3): 678. doi:10.1016/j.patcog.2010.09.013.

- Petitjean, F. O.; Gançarski, P. (2012). "Summarizing a set of time series by averaging: From Steiner sequence to compact multiple alignment". Theoretical Computer Science. 414: 76–91. doi:10.1016/j.tcs.2011.09.029.

- Ding, Hui; Trajcevski, Goce; Scheuermann, Peter; Wang, Xiaoyue; Keogh, Eamonn (2008). "Querying and mining of time series data: experimental comparison of representations and distance measures". Proc. VLDB Endow. 1 (2): 1542–1552. doi:10.14778/1454159.1454226.

- Lucero, J. C.; Munhall, K. G.; Gracco, V. G.; Ramsay, J. O. (1997). "On the Registration of Time and the Patterning of Speech Movements". Journal of Speech, Language, and Hearing Research. 40 (5): 1111–1117. doi:10.1044/jslhr.4005.1111.

- Durrleman, S; Pennec, X.; Trouvé, A.; Braga, J.; Gerig, G. & Ayache, N. (2013). "Toward a Comprehensive Framework for the Spatiotemporal Statistical Analysis of Longitudinal Shape Data". International Journal of Computer Vision. 103: 22–59. doi:10.1007/s11263-012-0592-x. PMC 3744347.

- Howell, P.; Anderson, A.; Lucero, J. C. (2010). "Speech motor timing and fluency". In Maassen, B.; van Lieshout, P. (eds.). Speech Motor Control: New Developments in Basic and Applied Research. Oxford University Press. pp. 215–225. ISBN 978-0199235797.

- Koenig, Laura L.; Lucero, Jorge C.; Perlman, Elizabeth (2008). "Speech production variability in fricatives of children and adults: Results of functional data analysis". The Journal of the Acoustical Society of America. 124 (5): 3158–3170. Bibcode:2008ASAJ..124.3158K. doi:10.1121/1.2981639. ISSN 0001-4966. PMC 2677351. PMID 19045800.

- Nakagawa, Seiichi; Nakanishi, Hirobumi (1988-01-01). "Speaker-Independent English Consonant and Japanese Word Recognition by a Stochastic Dynamic Time Warping Method". IETE Journal of Research. 34 (1): 87–95. doi:10.1080/03772063.1988.11436710. ISSN 0377-2063.

- Fang, Chunsheng. "From Dynamic Time Warping (DTW) to Hidden Markov Model (HMM)" (PDF).

- Juang, B. H. (September 1984). "On the hidden Markov model and dynamic time warping for speech recognition: A unified view". AT&T Bell Laboratories Technical Journal. 63 (7): 1213–1243. doi:10.1002/j.1538-7305.1984.tb00034.x. ISSN 0748-612X.

- Raket LL, Sommer S, Markussen B (2014). "A nonlinear mixed-effects model for simultaneous smoothing and registration of functional data". Pattern Recognition Letters. 38: 1–7. doi:10.1016/j.patrec.2013.10.018.

- Raket LL, Grimme B, Schöner G, Igel C, Markussen B (2016). "Separating timing, movement conditions and individual differences in the analysis of human movement". PLoS Computational Biology. 12 (9): e1005092. doi:10.1371/journal.pcbi.1005092.

- Sakoe, Hiroaki; Chiba, Seibi (1978). "Dynamic programming algorithm optimization for spoken word recognition". IEEE Transactions on Acoustics, Speech, and Signal Processing. 26 (1): 43–49. doi:10.1109/tassp.1978.1163055.

Further reading

- Vintsyuk, T. K. (1968). "Speech discrimination by dynamic programming". Kibernetika. 4: 81–88.

- Myers, C. S.; Rabiner, L. R. (1981). "A Comparative Study of Several Dynamic Time-Warping Algorithms for Connected-Word Recognition". Bell System Technical Journal. 60 (7): 1389–1409. doi:10.1002/j.1538-7305.1981.tb00272.x. ISSN 0005-8580.

- Rabiner, Lawrence; Juang, Biing-Hwang (1993). "Chapter 4: Pattern-Comparison Techniques". Fundamentals of speech recognition. Englewood Cliffs, N.J.: PTR Prentice Hall. ISBN 978-0-13-015157-5.

- Müller, Meinard (2007). Dynamic Time Warping. In Information Retrieval for Music and Motion, chapter 4, pages 69-84 (PDF). Springer. doi:10.1007/978-3-540-74048-3. ISBN 978-3-540-74047-6.

- Rakthanmanon, Thanawin (September 2013). "Addressing Big Data Time Series: Mining Trillions of Time Series Subsequences Under Dynamic Time Warping". ACM Transactions on Knowledge Discovery from Data. 7 (3): 10:1–10:31. doi:10.1145/2513092.2500489.