There is no objective, systematic and consistent notion of what a "round" is. Each algorithm specification defines it in its own way.

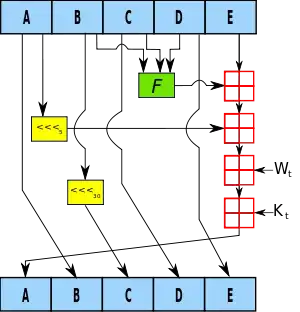

MD5 is described as first padding its input and then splitting it into 512-bit blocks. Then, as the RFC puts it, there are four rounds, each of which happens to be a sequence of 16 very similar operations. So we could say that MD5 uses "four rounds" (per block). However, cryptographers soon shunned this terminology: when they talk about the potential weaknesses of MD5, they concentrate on the internal operations, which they call "rounds". In that view, MD5 has 64 rounds.
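To make the two views concrete, here is a minimal Python sketch of MD5 written from the RFC 1321 description (the function names `md5` and `rotl` are my own, and it favors readability over speed). The inner loop runs 64 steps; `i // 16` recovers the RFC's "round" number, so the same code shows both the "4 rounds of 16 operations" and the "64 rounds" views.

```python
import math
import struct

# Per-step left-rotation amounts: 4 RFC "rounds", each cycling through 4 values.
S = ([7, 12, 17, 22] * 4 + [5, 9, 14, 20] * 4 +
     [4, 11, 16, 23] * 4 + [6, 10, 15, 21] * 4)
# Additive constants: integer part of 2^32 * abs(sin(i)) for i = 1..64.
K = [int(abs(math.sin(i + 1)) * 2**32) & 0xFFFFFFFF for i in range(64)]


def rotl(x: int, c: int) -> int:
    """Rotate a 32-bit word left by c bits."""
    x &= 0xFFFFFFFF
    return ((x << c) | (x >> (32 - c))) & 0xFFFFFFFF


def md5(message: bytes) -> str:
    # Padding: a 0x80 byte, zeros up to 56 mod 64, then the bit length (64-bit LE).
    bit_len = (8 * len(message)) & 0xFFFFFFFFFFFFFFFF
    message += b"\x80"
    message += b"\x00" * ((56 - len(message) % 64) % 64)
    message += struct.pack("<Q", bit_len)

    h = [0x67452301, 0xEFCDAB89, 0x98BADCFE, 0x10325476]
    for off in range(0, len(message), 64):          # one 512-bit block at a time
        m = struct.unpack("<16I", message[off:off + 64])
        a, b, c, d = h
        for i in range(64):                         # 64 steps ("rounds" for cryptanalysts)
            rfc_round = i // 16                     # 0..3: the RFC's four rounds
            if rfc_round == 0:
                f, g = (b & c) | (~b & d), i
            elif rfc_round == 1:
                f, g = (d & b) | (~d & c), (5 * i + 1) % 16
            elif rfc_round == 2:
                f, g = b ^ c ^ d, (3 * i + 5) % 16
            else:
                f, g = c ^ (b | ~d), (7 * i) % 16
            f = (f + a + K[i] + m[g]) & 0xFFFFFFFF
            a, d, c = d, c, b                       # rotate the working variables
            b = (b + rotl(f, S[i])) & 0xFFFFFFFF
        h = [(x + y) & 0xFFFFFFFF for x, y in zip(h, (a, b, c, d))]
    return struct.pack("<4I", *h).hex()
```

For example, `md5(b"abc")` gives `"900150983cd24fb0d6963f7d28e17f72"`, the test value listed in RFC 1321.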

SHA-512 is specified similarly: the input data is padded and broken into 1024-bit blocks, and each block is processed with a loop which repeats a sequence of 4 steps 80 times. So we could say that SHA-512 uses "320 steps" (per block). There again, cryptographers agree, more or less implicitly, on talking about "80 rounds". Note that each of these rounds involves roughly twice as many operations as an MD5 "round" (in the view where MD5 has 64 rounds), so it could be argued that SHA-512 actually has 160 rounds. Or not.
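For comparison, here is a similar sketch of SHA-512 in Python, following the FIPS 180-4 description. As a shortcut (my own choice, not how the standard presents them), the round constants and initial hash value are computed from their definitions, the fractional parts of the cube roots of the first 80 primes and of the square roots of the first 8 primes, using exact integer arithmetic instead of copying the tables; all helper names are hypothetical. The `for t in range(80)` loop is what people call the "80 rounds"; each iteration computes two sums, `t1` and `t2`, which is where the "twice as much work as an MD5 round" impression comes from.

```python
import math
import struct

MASK = (1 << 64) - 1


def first_primes(n: int) -> list[int]:
    primes: list[int] = []
    candidate = 2
    while len(primes) < n:
        if all(candidate % p for p in primes if p * p <= candidate):
            primes.append(candidate)
        candidate += 1
    return primes


def icbrt(n: int) -> int:
    """Floor of the cube root of n > 0, by integer Newton iteration from above."""
    x = 1 << ((n.bit_length() + 2) // 3)
    while True:
        y = (2 * x + n // (x * x)) // 3
        if y >= x:
            return x
        x = y


PRIMES = first_primes(80)
K = [icbrt(p << 192) & MASK for p in PRIMES]           # 64 fractional bits of cbrt(p)
H0 = [math.isqrt(p << 128) & MASK for p in PRIMES[:8]]  # 64 fractional bits of sqrt(p)


def rotr(x: int, n: int) -> int:
    return ((x >> n) | (x << (64 - n))) & MASK


def sha512(message: bytes) -> str:
    # Padding: 0x80, zeros up to 112 mod 128, then the 128-bit big-endian bit length.
    bit_len = 8 * len(message)
    message += b"\x80"
    message += b"\x00" * ((112 - len(message) % 128) % 128)
    message += bit_len.to_bytes(16, "big")

    h = list(H0)
    for off in range(0, len(message), 128):             # one 1024-bit block at a time
        w = list(struct.unpack(">16Q", message[off:off + 128]))
        for t in range(16, 80):                         # message schedule
            s0 = rotr(w[t - 15], 1) ^ rotr(w[t - 15], 8) ^ (w[t - 15] >> 7)
            s1 = rotr(w[t - 2], 19) ^ rotr(w[t - 2], 61) ^ (w[t - 2] >> 6)
            w.append((w[t - 16] + s0 + w[t - 7] + s1) & MASK)
        a, b, c, d, e, f, g, hh = h
        for t in range(80):                             # the "80 rounds"
            sigma1 = rotr(e, 14) ^ rotr(e, 18) ^ rotr(e, 41)
            ch = (e & f) ^ (~e & g)
            t1 = (hh + sigma1 + ch + K[t] + w[t]) & MASK
            sigma0 = rotr(a, 28) ^ rotr(a, 34) ^ rotr(a, 39)
            maj = (a & b) ^ (a & c) ^ (b & c)
            t2 = (sigma0 + maj) & MASK
            hh, g, f, e = g, f, e, (d + t1) & MASK
            d, c, b, a = c, b, a, (t1 + t2) & MASK
        h = [(x + y) & MASK for x, y in zip(h, (a, b, c, d, e, f, g, hh))]
    return b"".join(x.to_bytes(8, "big") for x in h).hex()
```

Output can be checked against `hashlib.sha512(data).hexdigest()` from the Python standard library.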

These terminology issues are not important as long as everybody agrees on what they are talking about. The important point is that there is no absolute notion of "round" that is more correct than any other, or that would allow comparing different algorithms based on "how many rounds they have".

As for what happens within the rounds, the simplest way to find out is to read the specification and implement it in some language. This is not hard (begin with MD5), and it is a great pedagogical exercise.