It is as if the CMD has no effect.

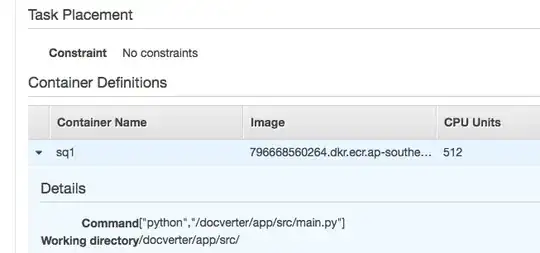

In my ECS task definition, I have defined the command as shown in the screenshot below.

The Python process is supposed to be a blocking process: it is supposed to wait for data on an SQS queue.

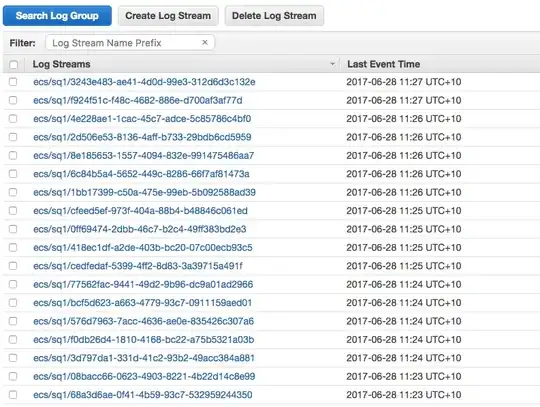

However, judging from the CloudWatch log, the task seems to be spawned continuously.

In effect, it behaves as if I had run this:

```
docker run -t simplequeue python /docverter/app/src/main.py
```

The container is started and then terminated right away.

I have defined the ENTRYPOINT in my Dockerfile:

```dockerfile
FROM ubuntu:16.04

RUN apt-get update -y --fix-missing
RUN apt-get install -y git python python-pip cron ntp

ENV APP_HOME /docverter
RUN mkdir -p ${APP_HOME}
COPY . ${APP_HOME}

#
# Log configuration
RUN mkdir -p /root/.aws
RUN mkdir -p /var/awslogs/state
COPY ./credentials /root/.aws/credentials
COPY docker-entrypoint.sh /docker-entrypoint.sh
RUN apt-get install -y curl
RUN curl https://s3.amazonaws.com/aws-cloudwatch/downloads/latest/awslogs-agent-setup.py -O
RUN python awslogs-agent-setup.py --non-interactive -c ${APP_HOME}/aws-log.cfg --region ap-southeast-2

# Entry point
# To ensure some background services are started when the container is started
ENTRYPOINT /docker-entrypoint.sh
```

And `docker-entrypoint.sh` is as follows:

```bash
#!/bin/bash
set -eo pipefail
service awslogs start
service ntp start
```
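A common way to make an entrypoint script run the command it is given is to end it with `exec "$@"`, so that the image CMD (or the ECS task `command`) replaces the script as the container's main process. This only helps if the arguments actually reach the script, which with Docker requires the exec form `ENTRYPOINT ["/docker-entrypoint.sh"]`. The sketch below is a self-contained demo rather than the real script: it writes a stand-in entrypoint to a temporary file, with a hypothetical placeholder `echo` in place of the two `service ... start` calls, and runs it with a command the way Docker starts an exec-form ENTRYPOINT.

```shell
#!/bin/bash
# Demo of the "start services, then exec the command" entrypoint pattern.
# Assumption: a placeholder echo stands in for `service awslogs start` and
# `service ntp start`, so the demo runs on machines without those services.
entry=$(mktemp)
cat > "$entry" <<'EOF'
#!/bin/bash
set -eo pipefail
echo "starting background services" >&2   # placeholder for the service calls
# Replace this shell with the command passed as arguments (the image CMD or
# the ECS task "command"); it then becomes the container's main process.
exec "$@"
EOF
chmod +x "$entry"

# Exec-form ENTRYPOINT: Docker starts the script with the command as its arguments.
output=$("$entry" echo "worker is running" 2>/dev/null)
echo "$output"
rm -f "$entry"
```

With no arguments, `exec "$@"` does nothing in bash and the script simply exits, which matches the start-then-stop behaviour described above.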

How can I make the Docker container execute the command defined in the task definition?

EDIT: As per Andrey's answer, I have modified the entrypoint script, but it does not solve the issue. I added an extra `echo "$@"` for debugging, and it prints a blank line. To reproduce:

```
docker build -t broken .
docker run -it broken python  # expect to have python started
```
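The blank `echo "$@"` is consistent with how Docker handles the shell form `ENTRYPOINT /docker-entrypoint.sh`: it runs `/bin/sh -c /docker-entrypoint.sh` and appends the CMD words after that, where `sh -c` treats them as its own `$0`, `$1`, and so on instead of passing them to the script. The sketch below simulates both forms locally, without Docker, using a hypothetical stand-in entrypoint that only prints the arguments it receives.

```shell
#!/bin/bash
# Simulate how Docker combines ENTRYPOINT and CMD, without Docker.
# Hypothetical stand-in entrypoint that only prints the arguments it receives.
entry=$(mktemp)
printf '#!/bin/sh\necho "args: [$*]"\n' > "$entry"
chmod +x "$entry"

# Shell form, ENTRYPOINT /docker-entrypoint.sh:
#   /bin/sh -c "/docker-entrypoint.sh" <CMD words...>
# The CMD words become $0, $1, ... of `sh -c` and never reach the script.
shell_form=$(/bin/sh -c "$entry" python /docverter/app/src/main.py)

# Exec form, ENTRYPOINT ["/docker-entrypoint.sh"]:
#   /docker-entrypoint.sh <CMD words...>
exec_form=$("$entry" python /docverter/app/src/main.py)

echo "shell form: $shell_form"
echo "exec form:  $exec_form"
rm -f "$entry"
```

It prints `args: []` for the shell form and `args: [python /docverter/app/src/main.py]` for the exec form.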

The test code can be cloned from here:

```
git clone -b broken-entry-point-and-cmd https://github.com/kongakong/aws-ecs
```