We have several redis instances running on a server. There are also multiple web tier servers connecting to those instance that experience a stall at the same time.

We had packet captures going at the time, that identified that there wall a stall in both TX and RX traffic, as per the following wireshark IO graphs:

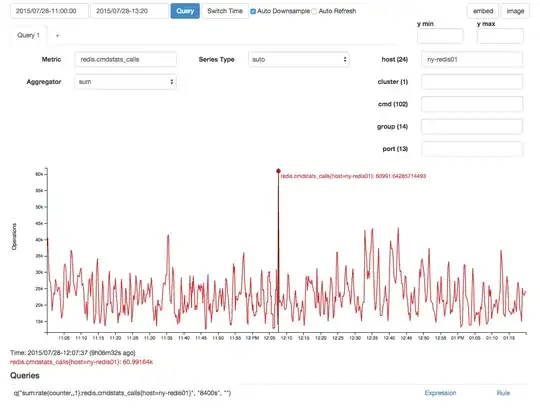

There was a correlating spike in redis calls, but I suspect that was an effect and not a cause due to the time lag:

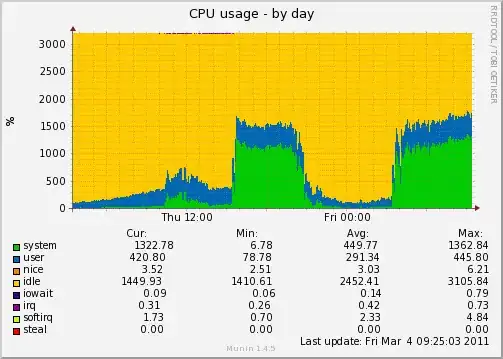

With a 15/s sampling interval (this is collected as a counter) there was an average of 136 memory allocation stalls:

There also seemed to be an out of the ordinary number of NUMA pages migrated at that same time:

Although the above looks normal, there were two consecutive data points for this which make it abnormal compared to other above 300 spikes seen in the graph.

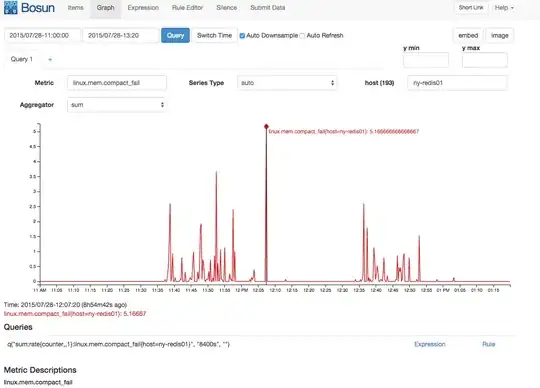

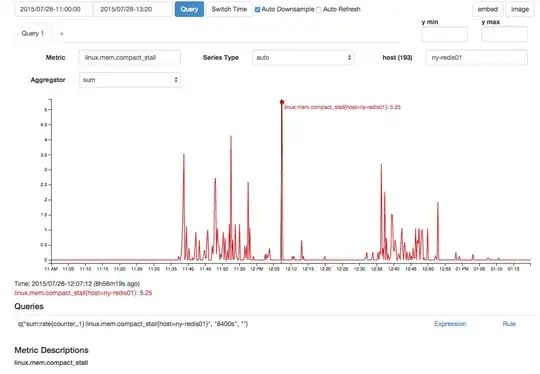

There was also correlated spike in memory compaction failures and compaction stalls:

Although I have a wealth of memory information here, my Linux memory knowledge is not deep enough to really hypothesize a good story that brings all this information together to explain the stall. Can anyone with deep Linux memory knowledge (and perhaps deep redis memory knowledge) tie some of this information together?

We collect all stats from /proc/vmstat at 15 second intervals, so if there is any data there that you think might add to this please do request it. I just picked the things that seemed to have interesting activity, in particular the alloc stall, the numa migration, and the compaction stall/failures. The totals follow, and cover 20 days of uptime:

[kbrandt@ny-redis01: ~] uptime

21:11:49 up 20 days, 20:05, 8 users, load average: 1.05, 0.74, 0.69

[kbrandt@ny-redis01: ~] cat /proc/vmstat

nr_free_pages 105382

nr_alloc_batch 5632

nr_inactive_anon 983455

nr_active_anon 15870487

nr_inactive_file 12904618

nr_active_file 2266184

nr_unevictable 0

nr_mlock 0

nr_anon_pages 16361259

nr_mapped 26329

nr_file_pages 15667318

nr_dirty 48588

nr_writeback 0

nr_slab_reclaimable 473720

nr_slab_unreclaimable 37147

nr_page_table_pages 38701

nr_kernel_stack 987

nr_unstable 0

nr_bounce 0

nr_vmscan_write 356302

nr_vmscan_immediate_reclaim 174305

nr_writeback_temp 0

nr_isolated_anon 0

nr_isolated_file 32

nr_shmem 423906

nr_dirtied 3071978326

nr_written 3069010459

numa_hit 1825289996

numa_miss 3360625955

numa_foreign 3360626253

numa_interleave 64798

numa_local 1856473774

numa_other 3329442177

workingset_refault 297175

workingset_activate 24923

workingset_nodereclaim 0

nr_anon_transparent_hugepages 41

nr_free_cma 0

nr_dirty_threshold 3030688

nr_dirty_background_threshold 1515344

pgpgin 25709012

pgpgout 12284206511

pswpin 143954

pswpout 341570

pgalloc_dma 430

pgalloc_dma32 498407404

pgalloc_normal 8131576449

pgalloc_movable 0

pgfree 8639210186

pgactivate 12022290

pgdeactivate 14512106

pgfault 61444049878

pgmajfault 23740

pgrefill_dma 0

pgrefill_dma32 1084722

pgrefill_normal 13419119

pgrefill_movable 0

pgsteal_kswapd_dma 0

pgsteal_kswapd_dma32 11991303

pgsteal_kswapd_normal 1051781383

pgsteal_kswapd_movable 0

pgsteal_direct_dma 0

pgsteal_direct_dma32 58737

pgsteal_direct_normal 36277968

pgsteal_direct_movable 0

pgscan_kswapd_dma 0

pgscan_kswapd_dma32 13416911

pgscan_kswapd_normal 1053143529

pgscan_kswapd_movable 0

pgscan_direct_dma 0

pgscan_direct_dma32 58926

pgscan_direct_normal 36291030

pgscan_direct_movable 0

pgscan_direct_throttle 0

zone_reclaim_failed 0

pginodesteal 0

slabs_scanned 1812992

kswapd_inodesteal 5096998

kswapd_low_wmark_hit_quickly 8600243

kswapd_high_wmark_hit_quickly 5068337

pageoutrun 14095945

allocstall 567491

pgrotated 971171

drop_pagecache 8

drop_slab 0

numa_pte_updates 58218081649

numa_huge_pte_updates 416664

numa_hint_faults 57988385456

numa_hint_faults_local 57286615202

numa_pages_migrated 39923112

pgmigrate_success 48662606

pgmigrate_fail 2670596

compact_migrate_scanned 29140124

compact_free_scanned 28320190101

compact_isolated 21473591

compact_stall 57784

compact_fail 37819

compact_success 19965

htlb_buddy_alloc_success 0

htlb_buddy_alloc_fail 0

unevictable_pgs_culled 5528

unevictable_pgs_scanned 0

unevictable_pgs_rescued 18567

unevictable_pgs_mlocked 20909

unevictable_pgs_munlocked 20909

unevictable_pgs_cleared 0

unevictable_pgs_stranded 0

thp_fault_alloc 11613

thp_fault_fallback 53

thp_collapse_alloc 3

thp_collapse_alloc_failed 0

thp_split 9804

thp_zero_page_alloc 1

thp_zero_page_alloc_failed 0

Also all /proc/sys/vm/* settings if that helps:

***/proc/sys/vm/admin_reserve_kbytes***

8192

***/proc/sys/vm/block_dump***

0

***/proc/sys/vm/dirty_background_bytes***

0

***/proc/sys/vm/dirty_background_ratio***

10

***/proc/sys/vm/dirty_bytes***

0

***/proc/sys/vm/dirty_expire_centisecs***

3000

***/proc/sys/vm/dirty_ratio***

20

***/proc/sys/vm/dirty_writeback_centisecs***

500

***/proc/sys/vm/drop_caches***

1

***/proc/sys/vm/extfrag_threshold***

500

***/proc/sys/vm/hugepages_treat_as_movable***

0

***/proc/sys/vm/hugetlb_shm_group***

0

***/proc/sys/vm/laptop_mode***

0

***/proc/sys/vm/legacy_va_layout***

0

***/proc/sys/vm/lowmem_reserve_ratio***

256 256 32

***/proc/sys/vm/max_map_count***

65530

***/proc/sys/vm/memory_failure_early_kill***

0

***/proc/sys/vm/memory_failure_recovery***

1

***/proc/sys/vm/min_free_kbytes***

90112

***/proc/sys/vm/min_slab_ratio***

5

***/proc/sys/vm/min_unmapped_ratio***

1

***/proc/sys/vm/mmap_min_addr***

4096

***/proc/sys/vm/nr_hugepages***

0

***/proc/sys/vm/nr_hugepages_mempolicy***

0

***/proc/sys/vm/nr_overcommit_hugepages***

0

***/proc/sys/vm/nr_pdflush_threads***

0

***/proc/sys/vm/numa_zonelist_order***

default

***/proc/sys/vm/oom_dump_tasks***

1

***/proc/sys/vm/oom_kill_allocating_task***

0

***/proc/sys/vm/overcommit_kbytes***

0

***/proc/sys/vm/overcommit_memory***

1

***/proc/sys/vm/overcommit_ratio***

50

***/proc/sys/vm/page-cluster***

3

***/proc/sys/vm/panic_on_oom***

0

***/proc/sys/vm/percpu_pagelist_fraction***

0

***/proc/sys/vm/scan_unevictable_pages***

0

***/proc/sys/vm/stat_interval***

1

***/proc/sys/vm/swappiness***

60

***/proc/sys/vm/user_reserve_kbytes***

131072

***/proc/sys/vm/vfs_cache_pressure***

100

***/proc/sys/vm/zone_reclaim_mode***

0

Update:

There is a thp_split that is close in time: