I started by creating 16 zero-filled files of exactly 1 billion bytes each:

for i in {1..16}; do dd if=/dev/zero of=/mnt/temp/block$i bs=1000000 count=1000 &> /dev/null; done

Then I created larger and larger RAIDZ2 pools over the files, forcing ashift=12 to simulate 4K-sector drives, e.g.

zpool create -o ashift=12 tank raidz2 /mnt/temp/block1 /mnt/temp/block2...

and then checked the actual size of each pool with df -B1:

Filesystem    1B-blocks
tank        12787777536
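For anyone wanting to repeat this, the pool-creation step can be scripted roughly as follows. This is only a sketch: the function name `raidz2_create_cmd` is made up for illustration, it assumes the backing files are named as in the dd loop above, and it only prints the commands (a dry run) instead of running them. It puts `-o ashift=12` before the pool name, as in the documented `zpool create` synopsis.

```shell
# Hypothetical helper: print the zpool command for a RAIDZ2 pool made of
# the first COUNT backing files (/mnt/temp/block1 .. /mnt/temp/blockCOUNT).
# Nothing is executed; actually creating a pool needs root.
raidz2_create_cmd() {
    count=$1
    files=""
    i=1
    while [ "$i" -le "$count" ]; do
        files="$files /mnt/temp/block$i"
        i=$((i + 1))
    done
    echo "zpool create -o ashift=12 tank raidz2$files"
}

# Dry run: show the command for every pool width from 3 to 16 files.
disks=3
while [ "$disks" -le 16 ]; do
    raidz2_create_cmd "$disks"
    disks=$((disks + 1))
done
```

Between runs the previous pool has to be destroyed before the next one is created, and the size read with df -B1 as shown above.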

My results:

+-------+--------------+-------------+--------------+----------------+
| disks | expected (B) |  actual (B) | overhead (B) | efficiency (%) |
+-------+--------------+-------------+--------------+----------------+
|     3 |   1000000000 |   951975936 |     48024064 |           95.2 |
|     4 |   2000000000 |  1883766784 |    116233216 |           94.2 |
|     5 |   3000000000 |  2892234752 |    107765248 |           96.4 |
|     6 |   4000000000 |  3892969472 |    107030528 |           97.3 |
|     7 |   5000000000 |  4530896896 |    469103104 |           90.6 |
|     8 |   6000000000 |  5541068800 |    458931200 |           92.4 |
|     9 |   7000000000 |  6691618816 |    308381184 |           95.6 |
|    10 |   8000000000 |  7446331392 |    553668608 |           93.1 |
|    11 |   9000000000 |  8201175040 |    798824960 |           91.1 |
|    12 |  10000000000 |  8905555968 |   1094444032 |           89.1 |
|    13 |  11000000000 | 10403577856 |    596422144 |           94.6 |
|    14 |  12000000000 | 11162222592 |    837777408 |           93.0 |
|    15 |  13000000000 | 12029263872 |    970736128 |           92.5 |
|    16 |  14000000000 | 12787908608 |   1212091392 |           91.3 |
+-------+--------------+-------------+--------------+----------------+
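The derived columns are plain arithmetic on the df figure. Here is a small sketch of that calculation, assuming (as the numbers in the table imply) that expected = (disks - 2) × 1,000,000,000 bytes, overhead = expected - actual, and efficiency = actual / expected. The function name `raidz2_efficiency` is just something I made up for illustration.

```shell
# Hypothetical helper: given the number of files in the RAIDZ2 pool and the
# size reported by df -B1, print "expected overhead efficiency%".
# usage: raidz2_efficiency DISKS ACTUAL_BYTES [BYTES_PER_DISK]
raidz2_efficiency() {
    awk -v n="$1" -v actual="$2" -v size="${3:-1000000000}" 'BEGIN {
        expected = (n - 2) * size      # RAIDZ2: two disks worth of parity
        overhead = expected - actual   # space unaccounted for
        # %.0f instead of %d so large byte counts print correctly in any awk
        printf "%.0f %.0f %.1f\n", expected, overhead, 100 * actual / expected
    }'
}

raidz2_efficiency 3 951975936      # prints: 1000000000 48024064 95.2
raidz2_efficiency 16 12787908608   # prints: 14000000000 1212091392 91.3
```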

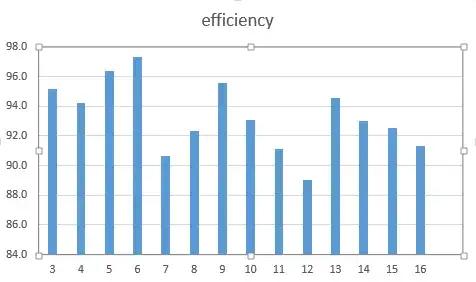

As a chart:

- Are my results correct, or have I left something out?

- If they're correct, why? Where is the space going?

- Can I do anything to improve efficiency?

- Is there a formula to calculate efficiency?