I have several TBs of very valuable personal data in a zpool which I can not access due to data corruption. The pool was originally set up back in 2009 or so on a FreeBSD 7.2 system running inside a VMWare virtual machine on top of a Ubuntu 8.04 system. The FreeBSD VM is still available and running fine, only the host OS has now changed to Debian 6. The hard drives are made accessible to the guest VM by means of VMWare generic SCSI devices, 12 in total.

There are 2 pools:

- zpool01: 2x 4x 500GB

- zpool02: 1x 4x 160GB

The one that works is empty, the broken one holds all the important data:

[user@host~]$ uname -a

FreeBSD host.domain 7.2-RELEASE FreeBSD 7.2-RELEASE #0: \

Fri May 1 07:18:07 UTC 2009 \

root@driscoll.cse.buffalo.edu:/usr/obj/usr/src/sys/GENERIC amd64

[user@host ~]$ dmesg | grep ZFS

WARNING: ZFS is considered to be an experimental feature in FreeBSD.

ZFS filesystem version 6

ZFS storage pool version 6

[user@host ~]$ sudo zpool status

pool: zpool01

state: UNAVAIL

scrub: none requested

config:

NAME STATE READ WRITE CKSUM

zpool01 UNAVAIL 0 0 0 insufficient replicas

raidz1 UNAVAIL 0 0 0 corrupted data

da5 ONLINE 0 0 0

da6 ONLINE 0 0 0

da7 ONLINE 0 0 0

da8 ONLINE 0 0 0

raidz1 ONLINE 0 0 0

da1 ONLINE 0 0 0

da2 ONLINE 0 0 0

da3 ONLINE 0 0 0

da4 ONLINE 0 0 0

pool: zpool02

state: ONLINE

scrub: none requested

config:

NAME STATE READ WRITE CKSUM

zpool02 ONLINE 0 0 0

raidz1 ONLINE 0 0 0

da9 ONLINE 0 0 0

da10 ONLINE 0 0 0

da11 ONLINE 0 0 0

da12 ONLINE 0 0 0

errors: No known data errors

I was able to access the pool a couple of weeks ago. Since then, I had to replace pretty much all of the hardware of the host machine and install several host operating systems.

My suspicion is that one of these OS installations wrote a bootloader (or whatever) to one (the first ?) of the 500GB drives and destroyed some zpool metadata (or whatever) - 'or whatever' meaning that this is just a very vague idea and that subject is not exactly my strong side...

There is plenty of websites, blogs, mailing lists, etc. about ZFS. I post this question here in the hope that it helps me gather enough information for a sane, structured, controlled, informed, knowledgeable approach to get my data back - and hopefully help someone else out there in the same situation.

The first search result when googling for 'zfs recover' is the ZFS Troubleshooting and Data Recovery chapter from Solaris ZFS Administration Guide. In the first ZFS Failure Modes section, it says in the 'Corrupted ZFS Data' paragraph:

Data corruption is always permanent and requires special consideration during repair. Even if the underlying devices are repaired or replaced, the original data is lost forever.

Somewhat disheartening.

However, the second google search result is Max Bruning's weblog and in there, I read

Recently, I was sent an email from someone who had 15 years of video and music stored in a 10TB ZFS pool that, after a power failure, became defective. He unfortunately did not have a backup. He was using ZFS version 6 on FreeBSD 7 [...] After spending about 1 week examining the data on the disk, I was able to restore basically all of it.

and

As for ZFS losing your data, I doubt it. I suspect your data is there, but you need to find the right way to get at it.

(that sounds so much more like something I wanna hear...)

First step: What exactly is the problem ?

How can I diagnose why exactly the zpool is reported as corrupted ? I see there is zdb which doesn't seem to be officially documented by Sun or Oracle anywhere on the web. From its man page:

NAME

zdb - ZFS debugger

SYNOPSIS

zdb pool

DESCRIPTION

The zdb command is used by support engineers to diagnose failures and

gather statistics. Since the ZFS file system is always consistent on

disk and is self-repairing, zdb should only be run under the direction

by a support engineer.

If no arguments are specified, zdb, performs basic consistency checks

on the pool and associated datasets, and report any problems detected.

Any options supported by this command are internal to Sun and subject

to change at any time.

Further, Ben Rockwood has posted a detailed article and there is a video of Max Bruning talking about it (and mdb) at the Open Solaris Developer Conference in Prague on June 28, 2008.

Running zdb as root on the broken zpool gives the following output:

[user@host ~]$ sudo zdb zpool01

version=6

name='zpool01'

state=0

txg=83216

pool_guid=16471197341102820829

hostid=3885370542

hostname='host.domain'

vdev_tree

type='root'

id=0

guid=16471197341102820829

children[0]

type='raidz'

id=0

guid=48739167677596410

nparity=1

metaslab_array=14

metaslab_shift=34

ashift=9

asize=2000412475392

children[0]

type='disk'

id=0

guid=4795262086800816238

path='/dev/da5'

whole_disk=0

DTL=202

children[1]

type='disk'

id=1

guid=16218262712375173260

path='/dev/da6'

whole_disk=0

DTL=201

children[2]

type='disk'

id=2

guid=15597847700365748450

path='/dev/da7'

whole_disk=0

DTL=200

children[3]

type='disk'

id=3

guid=9839399967725049819

path='/dev/da8'

whole_disk=0

DTL=199

children[1]

type='raidz'

id=1

guid=8910308849729789724

nparity=1

metaslab_array=119

metaslab_shift=34

ashift=9

asize=2000412475392

children[0]

type='disk'

id=0

guid=5438331695267373463

path='/dev/da1'

whole_disk=0

DTL=198

children[1]

type='disk'

id=1

guid=2722163893739409369

path='/dev/da2'

whole_disk=0

DTL=197

children[2]

type='disk'

id=2

guid=11729319950433483953

path='/dev/da3'

whole_disk=0

DTL=196

children[3]

type='disk'

id=3

guid=7885201945644860203

path='/dev/da4'

whole_disk=0

DTL=195

zdb: can't open zpool01: Invalid argument

I suppose the 'invalid argument' error at the end occurs because the zpool01 does not actually exist: It doesn't occur on the working zpool02, but there doesn't seem to be any further output either...

OK, at this stage, it is probably better to post this before the article gets too long.

Maybe someone can give me some advice on how to move forward from here and while I'm waiting for a response, I'll watch the video, go through the details of the zdb output above, read Bens article and try to figure out what's what...

20110806-1600+1000

Update 01:

I think I have found the root cause: Max Bruning was kind enough to respond to an email of mine very quickly, asking for the output of zdb -lll. On any of the 4 hard drives in the 'good' raidz1 half of the pool, the output is similar to what I posted above. However, on the first 3 of the 4 drives in the 'broken' half, zdb reports failed to unpack label for label 2 and 3. The fourth drive in the pool seems OK, zdb shows all labels.

Googling that error message brings up this post. From the first response to that post:

With ZFS, that are 4 identical labels on each physical vdev, in this case a single hard drive. L0/L1 at the start of the vdev, and L2/L3 at the end of the vdev.

All 8 drives in the pool are of the same model, Seagate Barracuda 500GB. However, I do remember I started the pool with 4 drives, then one of them died and was replaced under warranty by Seagate. Later on, I added another 4 drives. For that reason, the drive and firmware identifiers are different:

[user@host ~]$ dmesg | egrep '^da.*?: <'

da0: <VMware, VMware Virtual S 1.0> Fixed Direct Access SCSI-2 device

da1: <ATA ST3500418AS CC37> Fixed Direct Access SCSI-5 device

da2: <ATA ST3500418AS CC37> Fixed Direct Access SCSI-5 device

da3: <ATA ST3500418AS CC37> Fixed Direct Access SCSI-5 device

da4: <ATA ST3500418AS CC37> Fixed Direct Access SCSI-5 device

da5: <ATA ST3500320AS SD15> Fixed Direct Access SCSI-5 device

da6: <ATA ST3500320AS SD15> Fixed Direct Access SCSI-5 device

da7: <ATA ST3500320AS SD15> Fixed Direct Access SCSI-5 device

da8: <ATA ST3500418AS CC35> Fixed Direct Access SCSI-5 device

da9: <ATA SAMSUNG HM160JC AP10> Fixed Direct Access SCSI-5 device

da10: <ATA SAMSUNG HM160JC AP10> Fixed Direct Access SCSI-5 device

da11: <ATA SAMSUNG HM160JC AP10> Fixed Direct Access SCSI-5 device

da12: <ATA SAMSUNG HM160JC AP10> Fixed Direct Access SCSI-5 device

I do remember though that all drives had the same size. Looking at the drives now, it shows that the size has changed for three of them, they have shrunk by 2 MB:

[user@host ~]$ dmesg | egrep '^da.*?: .*?MB '

da0: 10240MB (20971520 512 byte sectors: 255H 63S/T 1305C)

da1: 476940MB (976773168 512 byte sectors: 255H 63S/T 60801C)

da2: 476940MB (976773168 512 byte sectors: 255H 63S/T 60801C)

da3: 476940MB (976773168 512 byte sectors: 255H 63S/T 60801C)

da4: 476940MB (976773168 512 byte sectors: 255H 63S/T 60801C)

da5: 476938MB (976771055 512 byte sectors: 255H 63S/T 60801C) <--

da6: 476938MB (976771055 512 byte sectors: 255H 63S/T 60801C) <--

da7: 476938MB (976771055 512 byte sectors: 255H 63S/T 60801C) <--

da8: 476940MB (976773168 512 byte sectors: 255H 63S/T 60801C)

da9: 152627MB (312581808 512 byte sectors: 255H 63S/T 19457C)

da10: 152627MB (312581808 512 byte sectors: 255H 63S/T 19457C)

da11: 152627MB (312581808 512 byte sectors: 255H 63S/T 19457C)

da12: 152627MB (312581808 512 byte sectors: 255H 63S/T 19457C)

So by the looks of it, it was not one of the OS installations that 'wrote a bootloader to one the drives' (as I had assumed before), it was actually the new motherboard (an ASUS P8P67 LE) creating a 2 MB host protected area at the end of three of the drives which messed up my ZFS metadata.

Why did it not create an HPA on all drives ? I believe this is because the HPA creation is only done on older drives with a bug that was fixed later on by a Seagate hard drive BIOS update: When this entire incident began a couple of weeks ago, I ran Seagate's SeaTools to check if there is anything physically wrong with the drives (still on the old hardware) and I got a message telling me that some of my drives need a BIOS update. As I am now trying to reproduce the exact details of that message and the link to the firmware update download, it seems that since the motherboard created the HPA, both SeaTools DOS versions to fail to detect the harddrives in question - a quick invalid partition or something similar flashes by when they start, that's it. Ironically, they do find a set of Samsung drives, though.

(I've skipped on the painful, time-consuming and ultimately fruitless details of screwing around in a FreeDOS shell on a non-networked system.) In the end, I installed Windows 7 on a separate machine in order to run the SeaTools Windows version 1.2.0.5. Just a last remark about DOS SeaTools: Don't bother trying to boot them standalone - instead, invest a couple of minutes and make a bootable USB stick with the awesome Ultimate Boot CD - which apart from DOS SeaTools also gets you many many other really useful tools.

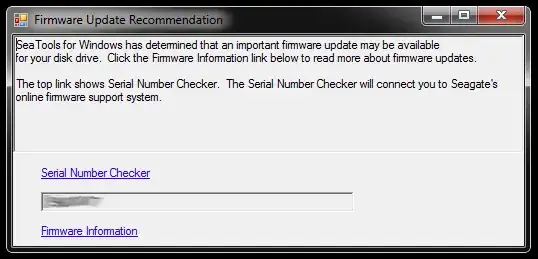

When started, SeaTools for Windows bring up this dialog:

The links lead to the Serial Number Checker (which for some reason is protected by a captcha - mine was 'Invasive users') and a knowledge base article about the firmware update. There's probably further links specific to the hard drive model and some downloads and what not, but I won't follow that path for the moment:

I won't rush into updating the firmware of three drives at a time that have truncated partitions and are part of a broken storage pool. That's asking for trouble. For starters, the firmware update most likely can not be undone - and that might irrevocably ruin my chances to get my data back.

Therefore, the very first thing I'm going to do next is image the drives and work with the copies, so there's an original to go back to if anything goes wrong. This might introduce an additional complexity, as ZFS will probably notice that drives were swapped (by means of the drive serial number or yet another UUID or whatever), even though it's bit-exact dd copies onto the same hard drive model. Moreover, the zpool is not even live. Boy, this might get tricky.

The other option however would be to work with the originals and keep the mirrored drives as backup, but then I'll probably run into above complexity when something went wrong with the originals. Naa, not good.

In order to clear out the three hard drives that will serve as imaged replacements for the three drives with the buggy BIOS in the broken pool, I need to create some storage space for the stuff that's on there now, so I'll dig deep in the hardware box and assemble a temporary zpool from some old drives - which I can also use to test how ZFS deals with swapping dd'd drives.

This might take a while...

20111213-1930+1100

Update 02:

This did take a while indeed. I've spent months with several open computer cases on my desk with various amounts of harddrive stacks hanging out and also slept a few nights with earplugs, because I could not shut down the machine before going to bed as it was running some lengthy critical operation. However, I prevailed at last! :-) I've also learned a lot in the process and I would like to share that knowledge here for anyone in a similar situation.

This article is already much longer than anyone with a ZFS file server out of action has the time to read, so I will go into details here and create an answer with the essential findings further below.

I dug deep in the obsolete hardware box to assemble enough storage space to move the stuff off the single 500GB drives to which the defective drives were mirrored. I also had to rip out a few hard drives out of their USB cases, so I could connect them over SATA directly. There was some more, unrelated issues involved and some of the old drives started to fail when I put them back into action requiring a zpool replace, but I'll skip on that.

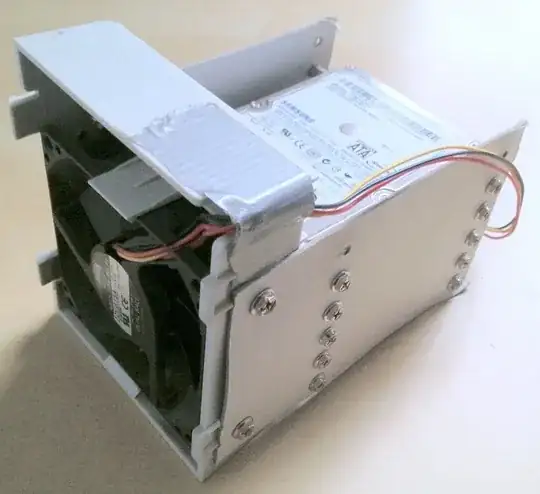

Tip: At some stage, there was a total of about 30 hard drives involved in this. With that much hardware, it is an enormous help to have them stacked properly; cables coming loose or hard drive falling off your desk surely won't help in the process and might cause further damage to your data integrity.

I spent a couple of minutes creating some make-shift cardboard hard drive fixtures which really helped to keep things sorted:

Ironically, when I connected the old drives the first time, I realized there's an old zpool on there I must have created for testing with an older version of some, but not all of the personal data that's gone missing, so while the data loss was somewhat reduced, this meant additional shifting back and forth of files.

Finally, I mirrored the problematic drives to backup drives, used those for the zpool and left the original ones disconnected. The backup drives have a newer firmware, at least SeaTools does not report any required firmware updates. I did the mirroring with a simple dd from one device to the other, e.g.

sudo dd if=/dev/sda of=/dev/sde

I believe ZFS does notice the hardware change (by some hard drive UUID or whatever), but doesn't seem to care.

The zpool however was still in the same state, insufficient replicas / corrupted data.

As mentioned in the HPA Wikipedia article mentioned earlier, the presence of a host protected area is reported when Linux boots and can be investigated using hdparm. As far as I know, there is no hdparm tool available on FreeBSD, but by this time, I anyway had FreeBSD 8.2 and Debian 6.0 installed as dual-boot system, so I booted into Linux:

user@host:~$ for i in {a..l}; do sudo hdparm -N /dev/sd$i; done

...

/dev/sdd:

max sectors = 976773168/976773168, HPA is disabled

/dev/sde:

max sectors = 976771055/976773168, HPA is enabled

/dev/sdf:

max sectors = 976771055/976773168, HPA is enabled

/dev/sdg:

max sectors = 976771055/976773168, HPA is enabled

/dev/sdh:

max sectors = 976773168/976773168, HPA is disabled

...

So the problem obviously was that the new motherboard created a HPA of a couple of megabytes at the end of the drive which 'hid' the upper two ZFS labels, i.e. prevented ZFS from seeing them.

Dabbling with the HPA seems a dangerous business. From the hdparm man page, parameter -N:

Get/set max visible number of sectors, also known as the Host Protected Area setting.

...

To change the current max (VERY DANGEROUS, DATA LOSS IS EXTREMELY LIKELY), a new value

should be provided (in base10) immediately following the -N option.

This value is specified as a count of sectors, rather than the "max sector address"

of the drive. Drives have the concept of a temporary (volatile) setting which is lost on

the next hardware reset, as well as a more permanent (non-volatile) value which survives

resets and power cycles. By default, -N affects only the temporary (volatile) setting.

To change the permanent (non-volatile) value, prepend a leading p character immediately

before the first digit of the value. Drives are supposed to allow only a single permanent

change per session. A hardware reset (or power cycle) is required before another

permanent -N operation can succeed.

...

In my case, the HPA is removed like this:

user@host:~$ sudo hdparm -Np976773168 /dev/sde

/dev/sde:

setting max visible sectors to 976773168 (permanent)

max sectors = 976773168/976773168, HPA is disabled

and in the same way for the other drives with an HPA. If you get the wrong drive or something about the size parameter you specify is not plausible, hdparm is smart enough to figure:

user@host:~$ sudo hdparm -Np976773168 /dev/sdx

/dev/sdx:

setting max visible sectors to 976773168 (permanent)

Use of -Nnnnnn is VERY DANGEROUS.

You have requested reducing the apparent size of the drive.

This is a BAD idea, and can easily destroy all of the drive's contents.

Please supply the --yes-i-know-what-i-am-doing flag if you really want this.

Program aborted.

After that, I restarted the FreeBSD 7.2 virtual machine on which the zpool had been originally created and zpool status reported a working pool again. YAY! :-)

I exported the pool on the virtual system and re-imported it on the host FreeBSD 8.2 system.

Some more major hardware upgrades, another motherboard swap, a ZFS pool update to ZFS 4 / 15, a thorough scrubbing and now my zpool consists of 8x1TB plus 8x500GB raidz2 parts:

[user@host ~]$ sudo zpool status

pool: zpool

state: ONLINE

scrub: none requested

config:

NAME STATE READ WRITE CKSUM

zpool ONLINE 0 0 0

raidz2 ONLINE 0 0 0

ad0 ONLINE 0 0 0

ad1 ONLINE 0 0 0

ad2 ONLINE 0 0 0

ad3 ONLINE 0 0 0

ad8 ONLINE 0 0 0

ad10 ONLINE 0 0 0

ad14 ONLINE 0 0 0

ad16 ONLINE 0 0 0

raidz2 ONLINE 0 0 0

da0 ONLINE 0 0 0

da1 ONLINE 0 0 0

da2 ONLINE 0 0 0

da3 ONLINE 0 0 0

da4 ONLINE 0 0 0

da5 ONLINE 0 0 0

da6 ONLINE 0 0 0

da7 ONLINE 0 0 0

errors: No known data errors

[user@host ~]$ df -h

Filesystem Size Used Avail Capacity Mounted on

/dev/label/root 29G 13G 14G 49% /

devfs 1.0K 1.0K 0B 100% /dev

zpool 8.0T 3.6T 4.5T 44% /mnt/zpool

As a last word, it seems to me ZFS pools are very, very hard to kill. The guys from Sun from who created that system have all the reason the call it the last word in filesystems. Respect!