Dependent and independent variables

Dependent and independent variables are variables in mathematical modeling, statistical modeling and experimental sciences. Dependent variables receive this name because, in an experiment, their values are studied under the supposition or hypothesis that they depend, by some law or rule (e.g., by a mathematical function), on the values of other variables. Independent variables, in turn, are not seen as depending on any other variable in the scope of the experiment in question; thus, even if the existing dependency is invertible (e.g., by finding the inverse function when it exists), the nomenclature is kept if the inverse dependency is not the object of study in the experiment. In this sense, some common independent variables are time, space, density, mass, fluid flow rate[1][2], and previous values of some observed value of interest (e.g. human population size) to predict future values (the dependent variable)[3].

Of the two, it is always the dependent variable whose variation is being studied, by altering inputs, also known as regressors in a statistical context. In an experiment, any variable that the experimenter manipulates can be called an independent variable. Models and experiments test the effects that the independent variables have on the dependent variables. Sometimes, even if their influence is not of direct interest, independent variables may be included for other reasons, such as to account for their potential confounding effect.

Mathematics

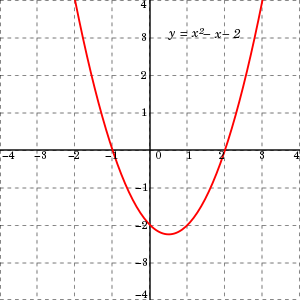

In mathematics, a function is a rule for taking an input (in the simplest case, a number or set of numbers)[5] and providing an output (which may also be a number).[5] A symbol that stands for an arbitrary input is called an independent variable, while a symbol that stands for an arbitrary output is called a dependent variable.[6] The most common symbol for the input is $x$, and the most common symbol for the output is $y$; the function itself is commonly written $y = f(x)$.[6][7]

It is possible to have multiple independent variables or multiple dependent variables. For instance, in multivariable calculus, one often encounters functions of the form $z = f(x, y)$, where $z$ is a dependent variable and $x$ and $y$ are independent variables.[8] Functions with multiple outputs are often referred to as vector-valued functions.
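As a small illustration of these two cases, the sketch below defines one function with two independent variables and one dependent variable, and one vector-valued function with a single independent variable and two dependent variables. The function names and the physical setting (a cylinder, a falling object, the constant `G`) are assumptions chosen for the example, not taken from the cited texts.

```python
import math
from typing import Tuple

G = 9.81  # assumed gravitational acceleration in m/s^2


def cylinder_volume(radius: float, height: float) -> float:
    """Two independent variables (radius, height), one dependent variable (volume)."""
    return math.pi * radius ** 2 * height


def free_fall_state(t: float) -> Tuple[float, float]:
    """Vector-valued function: one independent variable (time t),
    two dependent variables (distance fallen, speed)."""
    distance = 0.5 * G * t ** 2
    speed = G * t
    return distance, speed


volume = cylinder_volume(1.0, 2.0)
distance, speed = free_fall_state(2.0)
```

In both cases the arguments play the role of independent variables and the returned values the role of dependent variables.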

Statistics

In an experiment, the variable manipulated by an experimenter is called an independent variable.[9] The dependent variable is the event expected to change when the independent variable is manipulated.[10]

In data mining tools (for multivariate statistics and machine learning), the dependent variable is assigned a role as target variable (or in some tools as label attribute), while an independent variable may be assigned a role as regular variable.[11] Known values for the target variable are provided for the training data set and test data set, but should be predicted for other data. The target variable is used in supervised learning algorithms but not in unsupervised learning.
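The role assignment described above can be sketched in plain Python, without relying on any particular data mining tool. The records, the column names, and the helper `split_features_target` are hypothetical and serve only to show the target column being separated from the regular (independent) variables.

```python
# Hypothetical records: two independent variables and one dependent variable per row.
rows = [
    {"hours_studied": 2.0, "hours_slept": 7.0, "exam_score": 61.0},
    {"hours_studied": 4.0, "hours_slept": 6.5, "exam_score": 72.0},
    {"hours_studied": 6.0, "hours_slept": 8.0, "exam_score": 85.0},
]

TARGET = "exam_score"  # the column given the "target" (label) role


def split_features_target(records, target):
    """Separate the regular variables (features) from the target variable."""
    features = [
        {name: value for name, value in record.items() if name != target}
        for record in records
    ]
    targets = [record[target] for record in records]
    return features, targets


features, targets = split_features_target(rows, TARGET)
```

A supervised learning algorithm would be given both `features` and `targets` for training, whereas an unsupervised one would use `features` alone.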

Modeling

In mathematical modeling, the dependent variable is studied to see if and how much it varies as the independent variables vary. In the simple stochastic linear model $y_i = a + b x_i + e_i$, the term $y_i$ is the $i$th value of the dependent variable and $x_i$ is the $i$th value of the independent variable. The term $e_i$ is known as the "error" and contains the variability of the dependent variable not explained by the independent variable.

With multiple independent variables, the model is $y_i = a + b_1 x_{i,1} + b_2 x_{i,2} + \cdots + b_n x_{i,n} + e_i$, where $n$ is the number of independent variables.

Consider next the linear regression model. To use linear regression, a scatter plot of data is generated with $X$ as the independent variable and $Y$ as the dependent variable. The data form a bivariate dataset, $(x_1, y_1), (x_2, y_2), \ldots, (x_n, y_n)$. The simple linear regression model takes the form $Y_i = \alpha + \beta x_i + U_i$, for $i = 1, 2, \ldots, n$. In this case, $U_1, \ldots, U_n$ are independent random variables. This occurs when the measurements do not influence each other. Through propagation of independence, the independence of the $U_i$ implies independence of the $Y_i$, even though each $Y_i$ has a different expectation value. Each $U_i$ has an expectation value of 0 and a variance of $\sigma^2$.[12]

Expectation of $Y_i$, proof:

$$E[Y_i] = E[\alpha + \beta x_i + U_i] = \alpha + \beta x_i + E[U_i] = \alpha + \beta x_i$$

The line of best fit for the bivariate dataset takes the form $y = \alpha + \beta x$ and is called the regression line. $\alpha$ and $\beta$ correspond to the intercept and slope, respectively.[14]
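The intercept and slope of a regression line can be estimated from a bivariate dataset in several ways. The sketch below assumes the ordinary least squares criterion, which the text above does not name explicitly, and uses the standard closed-form estimates. The function name `fit_line` and the sample data are hypothetical.

```python
def fit_line(xs, ys):
    """Least-squares estimates (intercept, slope) for the line y = alpha + beta * x."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    # Sum of squared deviations of x, and sum of cross-deviations of x and y.
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    beta = sxy / sxx                  # slope
    alpha = y_mean - beta * x_mean    # intercept
    return alpha, beta


# Hypothetical bivariate dataset: xs is the independent variable, ys the dependent one.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]

alpha, beta = fit_line(xs, ys)
print(f"intercept = {alpha:.2f}, slope = {beta:.2f}")
```

For this sample the estimated slope is close to 2 and the intercept close to 0, which matches the roughly doubling pattern in the data.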

Simulation

In simulation, the dependent variable is changed in response to changes in the independent variables.
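A minimal sketch of this idea follows, with a closed-form toy model standing in for a full simulation. The launch angle is the independent variable that is swept, and the horizontal range is the dependent variable that responds. The model (an idealized projectile without air resistance), the function name `projectile_range`, and the default parameter values are assumptions made for the example.

```python
import math


def projectile_range(angle_deg, speed=20.0, g=9.81):
    """Horizontal range of an idealized projectile launched at angle_deg degrees."""
    angle = math.radians(angle_deg)
    return speed ** 2 * math.sin(2 * angle) / g


# Sweep the independent variable and record the dependent variable for each value.
angles = [15, 30, 45, 60, 75]
results = {angle: projectile_range(angle) for angle in angles}

for angle, distance in results.items():
    print(f"angle = {angle:2d} deg -> range = {distance:.2f} m")
```

Launch speed and gravitational acceleration are held fixed during the sweep, so they act as controlled variables in this run.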

Statistics synonyms

Depending on the context, an independent variable is sometimes called a "predictor variable", regressor, covariate, "manipulated variable", "explanatory variable", exposure variable (see reliability theory), "risk factor" (see medical statistics), "feature" (in machine learning and pattern recognition) or "input variable".[15][16] In econometrics, the term "control variable" is usually used instead of "covariate".[17][18][19][20][21]

In economics, independent variables are also called exogenous variables.

Depending on the context, a dependent variable is sometimes called a "response variable", "regressand", "criterion", "predicted variable", "measured variable", "explained variable", "experimental variable", "responding variable", "outcome variable", "output variable", "target" or "label".[16] In economics, the dependent variable is usually referred to as an endogenous variable.

"Explanatory variable" is preferred by some authors over "independent variable" when the quantities treated as independent variables may not be statistically independent or independently manipulable by the researcher.[22][23] If the independent variable is referred to as an "explanatory variable" then the term "response variable" is preferred by some authors for the dependent variable.[16][22][23]

"Explained variable" is preferred by some authors over "dependent variable" when the quantities treated as "dependent variables" may not be statistically dependent.[24] If the dependent variable is referred to as an "explained variable" then the term "predictor variable" is preferred by some authors for the independent variable.[24]

Variables may also be referred to by their form: continuous or categorical, which in turn may be binary/dichotomous, nominal categorical, and ordinal categorical, among others.

An example is provided by the analysis of trend in sea level by Woodworth (1987). Here the dependent variable (and variable of most interest) was the annual mean sea level at a given location for which a series of yearly values were available. The primary independent variable was time. Use was made of a covariate consisting of yearly values of annual mean atmospheric pressure at sea level. The results showed that inclusion of the covariate allowed improved estimates of the trend against time to be obtained, compared to analyses which omitted the covariate.

Other variables

A variable may be thought to alter the dependent or independent variables, but may not actually be the focus of the experiment. In that case, the variable is kept constant or monitored to try to minimize its effect on the experiment. Such variables may be designated as a "controlled variable", "control variable", or "fixed variable".

Extraneous variables, if included in a regression analysis as independent variables, may aid a researcher with accurate response parameter estimation, prediction, and goodness of fit, but are not of substantive interest to the hypothesis under examination. For example, in a study examining the effect of post-secondary education on lifetime earnings, some extraneous variables might be gender, ethnicity, social class, genetics, intelligence, age, and so forth. A variable is extraneous only when it can be assumed (or shown) to influence the dependent variable. If included in a regression, it can improve the fit of the model. If it is excluded from the regression and if it has a non-zero covariance with one or more of the independent variables of interest, its omission will bias the regression's result for the effect of that independent variable of interest. This effect is called confounding or omitted variable bias; in these situations, design changes and/or statistical control for the variable are necessary.
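The bias from omitting a correlated extraneous variable can be illustrated with a small noise-free example. The data, the variable names (`education`, `ability`, `earnings`), and the true coefficients 2 and 3 are hypothetical. The helper functions implement the standard least-squares slope formulas for one and two regressors.

```python
def mean(values):
    return sum(values) / len(values)


def cov(u, v):
    """Population covariance of two equally long sequences."""
    mu, mv = mean(u), mean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / len(u)


def slope_one(x, y):
    """Least-squares slope when y is regressed on x alone."""
    return cov(x, y) / cov(x, x)


def slopes_two(x1, x2, y):
    """Least-squares slopes when y is regressed on x1 and x2 together."""
    s11, s22, s12 = cov(x1, x1), cov(x2, x2), cov(x1, x2)
    s1y, s2y = cov(x1, y), cov(x2, y)
    det = s11 * s22 - s12 ** 2
    b1 = (s22 * s1y - s12 * s2y) / det
    b2 = (s11 * s2y - s12 * s1y) / det
    return b1, b2


# Hypothetical data: ability is correlated with education and also affects earnings.
education = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
ability = [2.0, 1.0, 4.0, 3.0, 6.0, 5.0]
earnings = [1.0 + 2.0 * e + 3.0 * a for e, a in zip(education, ability)]

biased = slope_one(education, earnings)            # ability omitted
b_edu, b_abl = slopes_two(education, ability, earnings)  # ability included

print(f"education effect, ability omitted:  {biased:.3f}")
print(f"education effect, ability included: {b_edu:.3f}")
```

With the extraneous variable included, the estimated education effect recovers the true value of 2. With it omitted, the estimate absorbs part of the ability effect and is inflated by 3 times the ratio of the covariance of the two regressors to the variance of education.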

Extraneous variables are often classified into three types:

- Subject variables, which are the characteristics of the individuals being studied that might affect their actions. These variables include age, gender, health status, mood, background, etc.

- Blocking variables or experimental variables are characteristics of the persons conducting the experiment which might influence how a person behaves. Gender, the presence of racial discrimination, language, or other factors may qualify as such variables.

- Situational variables are features of the environment in which the study or research was conducted, which have a bearing on the outcome of the experiment in a negative way. Included are the air temperature, level of activity, lighting, and the time of day.

In modelling, variability that is not covered by the independent variable is designated by $e_i$ and is known as the "residual", "side effect", "error", "unexplained share", "residual variable", "disturbance", or "tolerance".

Examples

- Effect of fertilizer on plant growth:

- In a study measuring the influence of different quantities of fertilizer on plant growth, the independent variable would be the amount of fertilizer used. The dependent variable would be the growth in height or mass of the plant. The controlled variables would be the type of plant, the type of fertilizer, the amount of sunlight the plant gets, the size of the pots, etc.

- Effect of drug dosage on symptom severity:

- In a study of how different doses of a drug affect the severity of symptoms, a researcher could compare the frequency and intensity of symptoms when different doses are administered. Here the independent variable is the dose and the dependent variable is the frequency/intensity of symptoms.

- Effect of temperature on pigmentation:

- In measuring the amount of color removed from beetroot samples at different temperatures, temperature is the independent variable and amount of pigment removed is the dependent variable.

- Effect of sugar added to coffee:

- The taste varies with the amount of sugar added to the coffee. Here, the amount of sugar is the independent variable, while the taste is the dependent variable.

References

- Aris, Rutherford (1994). Mathematical modelling techniques. Courier Corporation.

- Boyce, William E.; Richard C. DiPrima (2012). Elementary differential equations. John Wiley & Sons.

- Alligood, Kathleen T.; Sauer, Tim D.; Yorke, James A. (1996). Chaos an introduction to dynamical systems. Springer New York.

- Hastings, Nancy Baxter. Workshop calculus: guided exploration with review. Vol. 2. Springer Science & Business Media, 1998. p. 31

- Carlson, Robert. A concrete introduction to real analysis. CRC Press, 2006. p.183

- Stewart, James. Calculus. Cengage Learning, 2011. Section 1.1

- Anton, Howard, Irl C. Bivens, and Stephen Davis. Calculus Single Variable. John Wiley & Sons, 2012. Section 0.1

- Larson, Ron, and Bruce Edwards. Calculus. Cengage Learning, 2009. Section 13.1

- http://onlinestatbook.com/2/introduction/variables.html

- Random House Webster's Unabridged Dictionary. Random House, Inc. 2001. Page 534, 971. ISBN 0-375-42566-7.

- English Manual version 1.0 Archived 2014-02-10 at the Wayback Machine for RapidMiner 5.0, October 2013.

- Dekking, F. M. (2005). A modern introduction to probability and statistics: understanding why and how. Springer. ISBN 1-85233-896-2. OCLC 783259968.

- Dekking, F. M. (2005). A modern introduction to probability and statistics: understanding why and how. Springer. ISBN 1-85233-896-2. OCLC 783259968.

- Dekking, F. M. (2005). A modern introduction to probability and statistics: understanding why and how. Springer. ISBN 1-85233-896-2. OCLC 783259968.

- Dodge, Y. (2003) The Oxford Dictionary of Statistical Terms, OUP. ISBN 0-19-920613-9 (entry for "independent variable")

- Dodge, Y. (2003) The Oxford Dictionary of Statistical Terms, OUP. ISBN 0-19-920613-9 (entry for "regression")

- Gujarati, Damodar N.; Porter, Dawn C. (2009). "Terminology and Notation". Basic Econometrics (Fifth international ed.). New York: McGraw-Hill. p. 21. ISBN 978-007-127625-2.

- Wooldridge, Jeffrey (2012). Introductory Econometrics: A Modern Approach (Fifth ed.). Mason, OH: South-Western Cengage Learning. pp. 22–23. ISBN 978-1-111-53104-1.

- Last, John M., ed. (2001). A Dictionary of Epidemiology (Fourth ed.). Oxford UP. ISBN 0-19-514168-7.

- Everitt, B. S. (2002). The Cambridge Dictionary of Statistics (2nd ed.). Cambridge UP. ISBN 0-521-81099-X.

- Woodworth, P. L. (1987). "Trends in U.K. mean sea level". Marine Geodesy. 11 (1): 57–87. doi:10.1080/15210608709379549.

- Everitt, B.S. (2002) Cambridge Dictionary of Statistics, CUP. ISBN 0-521-81099-X

- Dodge, Y. (2003) The Oxford Dictionary of Statistical Terms, OUP. ISBN 0-19-920613-9

- Ash Narayan Sah (2009) Data Analysis Using Microsoft Excel, New Delhi. ISBN 978-81-7446-716-4
