IMO "sort of". It's definitely better and sharper, but native 4K content is pretty rare.

I'm looking at a 1080p and a 2160p display right now. I do use some upsampling in my video player for 720p video, and it looks fine. (Would screenshots help here? I don't know.)

There's a lot of disagreement over whether 4K is worth it. Wirecutter doesn't explicitly recommend 4K displays, because they feel there's little benefit.

That said, I've worked in the VFX industry and no one worked in 4K. I can't remember what our 'standard' displays were, but they were pretty crap: fairly standard Dells, typically older ones that were lying around. Good monitors would be color-calibrated 1080p or 1440p. There's more concern over color calibration and general screen real estate, and most work is done at 2K anyway. I'm not going to suggest exact models, since I don't know whether my NDA at my last job covers that. At least as of this year, 4K doesn't feel like an essential tool for a professional visual effects or video rendering artist.

Depending on the sort of work you're doing, it's going to range from 'it doesn't matter' (you don't need 4K for modelling, or even texturing), to 'just get a TV to see what it looks like' (if you only want to see what 4K looks like; note that most TVs have lower pixel density at 4K, since they are bigger), to "OH MY GOD IT IS AWESOME" (if you're a coder, or want a good general-purpose high-resolution display).

That said, native 4K content is often gloriously lush, but that might be because it's shot on really good cameras and meant to showcase 4K. I've not managed to get my paws on a movie or TV show that's native 4K. I'm eagerly looking forward to Netflix coming here, for science.

The next question is "can I tell the difference?"

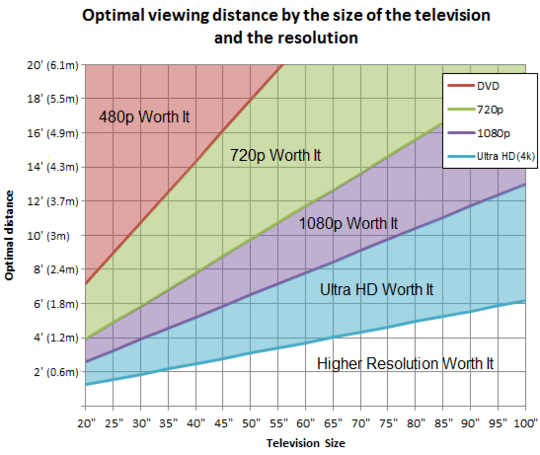

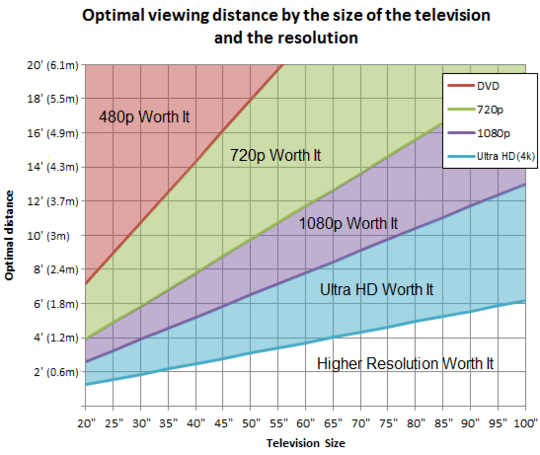

It depends on your distance from the screen. rtings has a great interactive site for working these things out, and they also have a handy visual chart.

And well, if you're sitting somewhere between roughly 1.25 m and 2.25 m away (my estimate from the chart), UHD may make sense at 24 inches.

Puget Systems actually does the math, and considers things like visual acuity, if you'd like more science. TL;DR/THDM (too hard, didn't math): yes. They also have a lovely spreadsheet on Google Docs if you don't want to do the math yourself.
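As a rough sketch of the kind of calculation behind these charts (not necessarily the exact model rtings or Puget Systems use), the Python below assumes 20/20 vision resolves about 1 arcminute and that the panel is 16:9, then computes the distance inside which individual pixels can still be picked out. The function names are hypothetical.

```python
import math

# Assumptions: 20/20 vision resolves about 1 arcminute; panels are 16:9.


def screen_width_mm(diagonal_in: float, aspect_w: int = 16, aspect_h: int = 9) -> float:
    """Physical width of a screen, from its diagonal in inches and its aspect ratio."""
    return diagonal_in * 25.4 * aspect_w / math.hypot(aspect_w, aspect_h)


def max_resolving_distance_m(diagonal_in: float, horizontal_px: int,
                             acuity_arcmin: float = 1.0) -> float:
    """Distance in metres beyond which one pixel subtends less than the
    assumed acuity angle, i.e. individual pixels can no longer be picked out."""
    pitch_mm = screen_width_mm(diagonal_in) / horizontal_px
    return pitch_mm / math.tan(math.radians(acuity_arcmin / 60)) / 1000


for label, px in [("1080p", 1920), ("UHD", 3840)]:
    print(f'24" {label}: pixels resolvable closer than '
          f'{max_resolving_distance_m(24, px):.2f} m')
```

With these assumptions, a 24-inch 1080p panel's pixels stop being resolvable at about 0.95 m and a UHD panel's at about 0.48 m, so closer than roughly 0.95 m a 20/20 viewer should in principle see a difference. Sharper-than-average eyes push both figures further out, so treat them as ballpark numbers.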

Now, there's a few other factors to consider.

My 27-inch UHD screen beats the pants off the 24-inch 1080p screen I have in every respect. Pixel density (about 163 ppi on a 27" UHD display) is high enough that you essentially can't 'see' the pixels. Side by side with the 24-inch (admittedly inferior, TN) screen, the pixels are visible on the 24-inch but not on the 27-inch, with the 27-inch display at 1.25x UI scaling and the 24-inch at 100%/native.
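The pixel density figure is just the diagonal resolution in pixels divided by the diagonal size in inches. A minimal Python sketch for the two screens compared above (the `ppi` helper is a hypothetical name):

```python
import math


def ppi(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixels per inch: diagonal length in pixels divided by diagonal in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


print(f'27" UHD:   {ppi(3840, 2160, 27):.0f} ppi')  # prints 163
print(f'24" 1080p: {ppi(1920, 1080, 24):.0f} ppi')  # prints 92
```

By this measure the 27-inch UHD panel packs in nearly 1.8 times the linear pixel density of the 24-inch 1080p one.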

It isn't far off the pixel density of the legendary IBM T220 (about 204 ppi), which was essentially designed to offer print-like resolution.

However, that's not the only reason it's better, or the only thing to look at. My screen is IPS, which is a significantly better panel technology, and it's colour-calibrated at the factory.

If it's in your budget, and you're willing to spend the extra scratch on a machine that can do what you want in 4K, it's definitely worth it. That said, when you can get a reasonably good setup for a few thousand less, it depends. Unless you are actually working at 4K, though, I don't think it's going to help much with rendering. Were I designing a setup for a 3D artist (built around a fairly powerful workstation), I might pair a 4K display with a good (or mediocre) 2K display and get the best of both worlds, since you can review work at both resolutions.

We're also at the point where 4K is common enough that mainstream monitors don't cut too many features: the useful ones (SST, i.e. single-stream transport, and mainstream outputs) are common on current displays, and with some decent comparison shopping you could probably buy one with a great feature set for not too much money. Jeff Atwood has three, and the excellent Dell P2715Q that I have is a hair under 500 USD.

That said, the real question is "should I buy one?"

My usual answer would be: not yet. The content isn't there, and video cards struggle with rendering at 4K (I have a GTX 980 Ti, and it tends to redline with most games; the frame rate at 4K tends to be decent, not spectacular). In your case, though, it may make sense, especially if you intend to work with 4K or code a fair bit. The extra real estate and sharpness are awesome enough that I'm looking at a second 4K display when I can afford it.
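Some back-of-the-envelope arithmetic behind both points: UHD has four times as many pixels per frame as 1080p, and even at 1.25x UI scaling it gives a desktop the size of a 3072×1728 display. This sketch assumes the OS divides the logical desktop size by the scale factor (roughly how display scaling behaves on Windows), and real GPU frame rates don't scale perfectly linearly with pixel count; the helper names are hypothetical.

```python
from typing import Tuple


def pixel_count(width: int, height: int) -> int:
    """Total pixels the GPU has to render for each frame."""
    return width * height


def effective_workspace(width: int, height: int, scale: float) -> Tuple[int, int]:
    """Logical desktop size after UI scaling (assumes logical = physical / scale)."""
    return round(width / scale), round(height / scale)


ratio = pixel_count(3840, 2160) / pixel_count(1920, 1080)
print(f"UHD: {ratio:.0f}x the pixels of 1080p per frame")  # prints 4x
print(effective_workspace(3840, 2160, 1.25))  # prints (3072, 1728)
```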

Can't be answered, as it depends on your eyesight and how far from the screen you are sitting. – Ben Voigt – 2015-10-23T13:48:51.747

My eyesight is 100%. I'm sitting about half a metre from the screen. – Igor Tatarnikov – 2015-10-23T16:18:55.160

I agree it cannot really be answered well, but: a 27-inch 16:9 diagonal is going to be approx 23.5 inches wide. So for a 2560 px wide (2K) monitor, each pixel must be approx 230 microns: 1 inch / (2560/23.5) ≈ 0.0092 inch ≈ 230 microns. In ideal conditions, an adult can discern about 0.5 arc minutes; at arm's length (3 feet), that is about 130 microns. So I'd expect that an individual pixel could be resolved. – Yorik – 2015-10-23T16:20:59.967
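This estimate can be reproduced in a few lines of Python. The sketch assumes a 16:9 panel, a viewing distance of 3 feet (914.4 mm), and a best-case acuity of 0.5 arcminute, with 1 arcminute (roughly 20/20 vision) shown for comparison; the helper names are hypothetical.

```python
import math


def width_in(diagonal_in: float, aspect_w: int = 16, aspect_h: int = 9) -> float:
    """Screen width in inches from the diagonal and aspect ratio."""
    return diagonal_in * aspect_w / math.hypot(aspect_w, aspect_h)


def pitch_um(diagonal_in: float, horizontal_px: int) -> float:
    """Width of one pixel in microns (1 inch = 25,400 microns)."""
    return width_in(diagonal_in) * 25_400 / horizontal_px


def resolvable_um(distance_mm: float, acuity_arcmin: float) -> float:
    """Smallest feature, in microns, that subtends the acuity angle at this distance."""
    return distance_mm * math.tan(math.radians(acuity_arcmin / 60)) * 1000


arm_length_mm = 914.4  # 3 feet
print(f"27-inch, 2560 px wide: pitch {pitch_um(27, 2560):.0f} um")
for acuity in (0.5, 1.0):
    print(f"{acuity} arcmin at 3 ft: {resolvable_um(arm_length_mm, acuity):.0f} um")
```

Under these assumptions the pixel pitch (about 233 microns) is larger than the 0.5-arcminute limit (about 133 microns) but smaller than the 1-arcminute limit (about 266 microns), so at arm's length the pixels on a 27-inch 1440p display are resolvable only by sharper-than-average eyes.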

@Yorik: is 2K considered 1920 or 2560? (I based my answer on 1920.) – fixer1234 – 2015-10-23T16:24:53.053

I only did a quick search; some consider 1920 as 2K, and some don't. My example would be about 100 ppi. My rough calculations would not be particularly different from yours in any way that really matters. – Yorik – 2015-10-23T16:27:13.570

There is more to it than just 4K vs 2K, by the way. If you are doing video production, filming in 4K may be superior to 2K: at certain distances, screen doors, brick walls, and other fine repeating patterns will have a period shorter than two pixels, past the Nyquist-Shannon sampling limit, and you get moiré. A higher pixel count lets those details get smaller before this happens. Once sampled, downsampling to 2K might avoid aliasing. – Yorik – 2015-10-23T16:32:37.997
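A one-dimensional sketch of the aliasing point: a pattern whose frequency is above half the sampling rate (the Nyquist limit) is recorded as a lower, false frequency, which in two dimensions shows up as moiré. The numbers are hypothetical, chosen only to show that doubling the linear sampling density (as going from 2K to 4K roughly does) pushes the limit out far enough to capture the same pattern faithfully; `alias_frequency` is a hypothetical helper name.

```python
import math


def alias_frequency(f: float, fs: float) -> float:
    """Apparent frequency of a pattern of true frequency f sampled at rate fs.
    Anything above the Nyquist limit fs / 2 folds back into [0, fs / 2]."""
    return abs(f - fs * round(f / fs))


# Hypothetical numbers: a repeating pattern at 3 cycles/mm on the sensor,
# sampled by a coarse sensor and by one with twice the linear pixel density.
pattern = 3.0
for label, fs in [("coarse", 4.0), ("2x density", 8.0)]:
    print(f"{label}: recorded as {alias_frequency(pattern, fs)} cycles/mm")

# On the coarse sensor the samples are literally identical to those of the
# lower alias frequency, so the false pattern cannot be told apart afterwards.
fs = 4.0
alias = alias_frequency(pattern, fs)
for n in range(8):
    true_sample = math.cos(2 * math.pi * pattern * n / fs)
    alias_sample = math.cos(2 * math.pi * alias * n / fs)
    assert math.isclose(true_sample, alias_sample, abs_tol=1e-9)
```

The coarse sensor records the 3 cycles/mm pattern as 1 cycle/mm, while the denser one records it correctly.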

Your eyesight is 100% of what? – qasdfdsaq – 2015-10-23T20:38:32.240

@qasdfdsaq: I guess he means 20/20 vision, "normal", neither exceptionally good nor poor. – Ben Voigt – 2015-10-23T22:48:47.683

I wonder if screenshots would reflect this accurately. – Journeyman Geek – 2015-10-26T03:11:47.970