
I recently had two hard drives crash in a RAID 5 array. I hadn't configured any monitoring, so I didn't notice that one of them had already been dead for a while. I decided to scrap everything and start from scratch.

All the hardware is the same as before, except that the array now has fewer drives: 3 bigger ones instead of 8. I've also installed Arch Linux in UEFI mode instead of using the legacy boot option; I'm not sure whether that affects anything.

I've re-installed Arch Linux, with proper mdadm monitoring/notifications and daily short SMART tests (and weekly long tests).

However, since re-installing Arch Linux, I've been seeing random kernel panics, usually after more than 48 hours uptime.

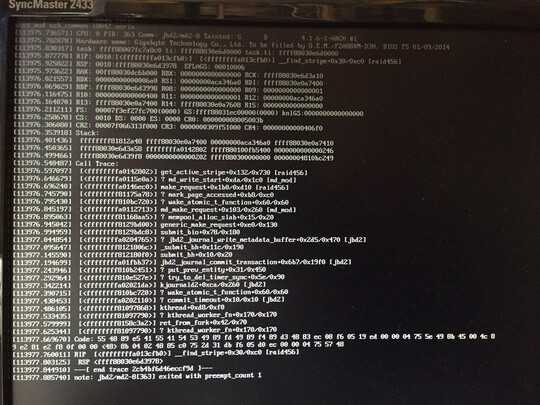

I've managed to snap a picture of the kernel panic:

From what I can see in there, it seems to be related to mdadm.

Here's my mdadm configuration:

    Personalities : [raid1] [raid6] [raid5] [raid4]
    md0 : active raid1 sda1[0] sdb1[1]
          524224 blocks super 1.0 [2/2] [UU]

    md1 : active raid1 sda3[0] sdb3[1]
          1950761024 blocks super 1.2 [2/2] [UU]
          bitmap: 5/15 pages [20KB], 65536KB chunk

    md2 : active raid5 sde1[3] sdc1[0] sdd1[1]
          5796265984 blocks super 1.2 level 5, 512k chunk, algorithm 2 [3/3] [UUU]
          bitmap: 0/22 pages [0KB], 65536KB chunk

    unused devices: <none>
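Since my earlier failure went unnoticed, here is roughly how the `[3/3] [UUU]` fields in the output above can be read to spot a degraded array. This is only a minimal sketch in Python, assuming the usual `/proc/mdstat` layout; the function name `degraded_arrays` and the sample text are made up for illustration, and it is not how mdadm's own monitor mode works.

```python
import re

# Status field on an array's "blocks" line, e.g. "[3/3] [UUU]":
# [expected/active] member counts, then one flag per member (U = up, _ = missing).
STATUS_RE = re.compile(r"\[(\d+)/(\d+)\]\s+\[([U_]+)\]")
HEADER_RE = re.compile(r"^(md\d+)\s*:")

def degraded_arrays(mdstat_text):
    """Return the names of md arrays whose status shows a missing member."""
    degraded = []
    current = None  # array whose status line we are still looking for
    for line in mdstat_text.splitlines():
        header = HEADER_RE.match(line)
        if header:
            current = header.group(1)
            continue
        status = STATUS_RE.search(line)
        if status and current:
            expected, active, flags = status.groups()
            if int(active) < int(expected) or "_" in flags:
                degraded.append(current)
            current = None  # ignore bitmap and other trailing lines
    return degraded

# Hypothetical sample: md0 is healthy, md2 has lost one of three members.
SAMPLE = """\
Personalities : [raid1] [raid6] [raid5] [raid4]
md0 : active raid1 sda1[0] sdb1[1]
      524224 blocks super 1.0 [2/2] [UU]

md2 : active raid5 sdc1[0] sdd1[1]
      5796265984 blocks super 1.2 level 5, 512k chunk, algorithm 2 [3/2] [UU_]
      bitmap: 0/22 pages [0KB], 65536KB chunk

unused devices: <none>
"""

print(degraded_arrays(SAMPLE))
```

On a real machine the same function could be fed the contents of `/proc/mdstat` instead of the sample string. For actual alerting I rely on mdadm's monitoring rather than a script like this.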

Relevant line in mkinitcpio.conf:

    HOOKS="base udev autodetect modconf block mdadm_udev filesystems keyboard fsck"

I'm currently on `Linux akatosh 4.1.6-1-ARCH #1 SMP PREEMPT Mon Aug 17 08:52:28 CEST 2015 x86_64 GNU/Linux`.

I've tried re-seating my RAM, but I doubt it's a RAM issue, as it was not happening before I re-installed Arch Linux.

Most of the mdadm-related kernel panic issues I've found in my research occurred at boot. Does anyone have a clue what the issue could be?

EDIT: Looks like this is a known bug introduced in 4.1.4 or 4.1.5: https://bugzilla.redhat.com/show_bug.cgi?id=1255509

I'll try updating to 4.2.0 from the testing repository and will update this post with more information.