Download Text Crawler (It is freeware) and install it. Launch it after it is finished installing. In the Filename/Filter box type in "*.htm *.html *.php" or whatever the extensions of the HTML files that you are parsing are. In the Start Location box browse to the directory where the files are. By default it also scans subdirectories, if you don't want this functionality then you can click on Options then unselect "Scan Subfolders". In the Find box type in:

<a.*?href\s*=\s*["'](.*?)['"].*?>(.*?)</a>

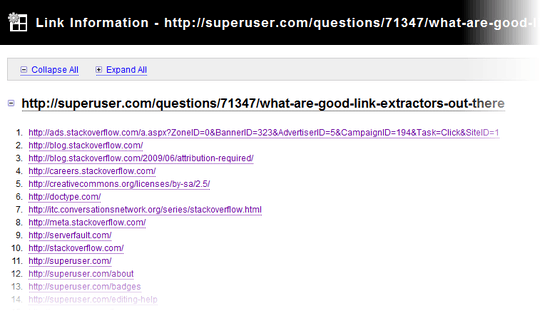

Make sure "Use Regular Expressions" has a checkmark next to it. Then click Find. It will show you all the links grouped by the files they are in. You can also click on Extract which will pop up a window with all the links from all the files. Since you stated that you want the links I figured you want the whole

<a href="something.php">Something</a>

so that you can see where the link points to and what the description is. If you only want the link without the whole tag, change the RegEx to

href=[\"\'](http:\/\/|\.\/|\/)?\w+(\.\w+)*(\/\w+(\.\w+)?)*(\/|\?\w*=\w*(&\w*=\w*)*)?[\"\']

which will return

href="something.php"

Let me know if this answers your question. TextCrawler is an awesome application and since it is free its worth a try.

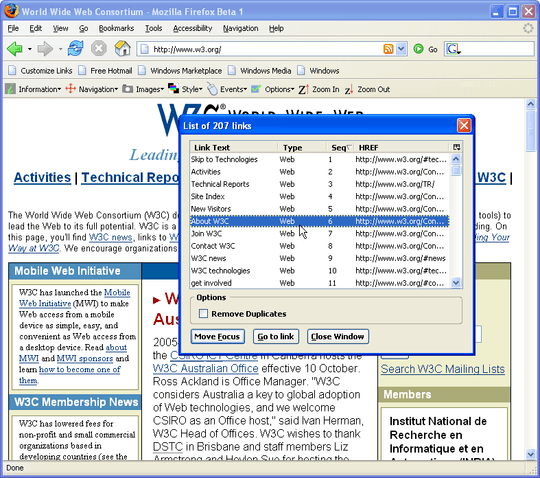

Yeah, know what you mean. Unfortuantelly, that's pretty much alike to what I was doing until now. Only now, I have about cca. 200 htm files, from which I have to get links, to compare some references ... long story short, I was hoping for some batch utility which can take all of them, and give me all links in one text file for me to rip apart. – Rook – 2009-11-16T19:49:11.440

Also, the links are not only html, but ftp, telnet and mail. The worse thing is I had a thing like that before, but now I can't find it anymore. – Rook – 2009-11-16T19:50:28.270

A quick Google turned up several options, including some free ones. I tend to prefer open source over "freeware", so I would probably search SourceForge.net for "URL extractor" as well. – JMD – 2009-11-16T19:57:19.007