Note: This answer is written with the assumption that the CPUs being compared are commercially available Intel and AMD CPUs and ARM-based SoCs from approximately 2006 to 2015. Any set of comparison measurements will be invalid given a wide enough scope; I wanted to provide a very specific and "tangible" answer here while also covering the two most widely used types of processor, so I made a bunch of assumptions that may not be valid in the absolutely general case of CPU design. If you have nitpicks, please keep this in mind before you share them. Thanks!

Let's get one thing straight: MHz / GHz and number of cores are no longer a reliable indicator of the relative performance of any two arbitrary processors.

They were dubious numbers at best even in the past, but now that we have mobile devices, they are absolutely terrible indicators. I'll explain where they can be used later in my answer, but for now, let's talk about other factors.

Today, the best numbers to consider when comparing processors are Thermal Design Power (TDP) and feature fabrication size, aka "fab size" (measured in nanometers, nm).

Basically: as the Thermal Design Power increases, the "scale" of the CPU increases. Think of the difference in scale between a bicycle, a car, a truck, a train, and a C-17 cargo airplane. Higher TDP means larger scale. The MHz may or may not be higher, but other factors like the complexity of the microarchitecture, the number of cores, the branch predictor's performance, the amount of cache, the number of execution pipelines, etc. all tend to be higher on larger-scale processors.

Now, as the fab size decreases, the "efficiency" of the CPU increases. So, if we assume two processors which are designed exactly the same except that one of them is scaled down to 14nm while the other is at 28nm, the 14nm processor will be able to:

- Perform at least as fast as the higher fab size CPU;

- Do so using less power;

- Do so while dissipating less heat;

- Do so using a smaller volume in terms of the physical size of the chip.
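The efficiency gain from a shrink is commonly explained with the textbook approximation for CMOS switching (dynamic) power, P ≈ α·C·V²·f, where α is the activity factor, C the switched capacitance, V the supply voltage and f the clock frequency. The sketch below is an illustration under assumptions that are not from this answer: the capacitance and voltage values are invented round numbers, leakage power is ignored, and real shrinks never scale this cleanly.

```python
def dynamic_power_watts(activity: float, capacitance_farads: float,
                        voltage_volts: float, frequency_hz: float) -> float:
    """Approximate CMOS switching power: P = alpha * C * V^2 * f."""
    return activity * capacitance_farads * voltage_volts ** 2 * frequency_hz


# Hypothetical chip on the older node (all values are illustrative only).
old = dynamic_power_watts(activity=0.2, capacitance_farads=50e-9,
                          voltage_volts=1.1, frequency_hz=2.0e9)

# The same design after a hypothetical shrink: assume switched capacitance
# falls to 60% and supply voltage falls from 1.1 V to 0.9 V, same clock.
new = dynamic_power_watts(activity=0.2, capacitance_farads=30e-9,
                          voltage_volts=0.9, frequency_hz=2.0e9)

print(f"old: {old:.1f} W, new: {new:.1f} W, ratio: {new / old:.2f}")
```

With these assumed values the shrunk design draws roughly 40% of the original power at the same clock. Because voltage enters squared, even a modest voltage drop does a lot of the work.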

Generally, when companies like Intel and the ARM-based chip manufacturers (Samsung, Qualcomm, etc.) decrease fab size, they also tend to ramp up performance a bit. This limits how much power efficiency they can gain, but everyone likes their stuff to run faster, so they design their chips in a "balanced" way: you get some power efficiency gains and some performance gains. At the extremes, they could keep the processor exactly as power-hungry as the previous generation but ramp up performance a lot, or keep it at exactly the same speed as the previous generation but cut power consumption a lot.

The main point to consider is that the current generation of tablet and smartphone CPUs has a TDP of around 2 to 4 Watts and a fab size of 28 nm. A low-end desktop processor from 2012 has a TDP of at least 45 Watts and a fab size of 22 nm. Even if the tablet's System on Chip (SoC) were connected to AC mains power so it didn't have to worry about sipping power to save battery, a quad-core tablet SoC would completely lose every single CPU benchmark to a 2012 low-end dual-core "Core i3" processor, possibly one running at a lower clock speed.
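To put that power gap in numbers, here is the ratio of the two thermal budgets quoted above. This is only arithmetic on the answer's own TDP figures; TDP is a cooling rating, not a performance measurement, so the ratio indicates difference in "scale" rather than a benchmark result.

```python
# TDP figures quoted in the paragraph above, in watts.
TABLET_TDP_RANGE_W = (2.0, 4.0)
DESKTOP_MIN_TDP_W = 45.0

# How many times larger the low-end desktop chip's thermal budget is,
# computed against each end of the tablet range.
ratios = [DESKTOP_MIN_TDP_W / tablet_w for tablet_w in TABLET_TDP_RANGE_W]

print(f"{min(ratios):g}x to {max(ratios):g}x the tablet's power budget")
```

Even the most generous pairing leaves the desktop chip with more than ten times the power to spend on cores, cache and clock speed.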

The reasons:

- The Core i3/i5/i7 chips are MUCH larger (in terms of number of transistors, physical die area, power consumption, etc.) than a tablet chip;

- Chips that go into desktops care MUCH less about power savings. On mobile SoCs, software, hardware and firmware combine to severely cut down performance in order to give you long battery life. On desktops, these power-saving features are only implemented when they do not significantly impact top-end performance, and when an application requests top-end performance, it can be delivered consistently. Mobile platforms often implement many little "tricks" (in games, for example, dropping frames here and there) that are mostly imperceptible to the eye but save battery life.

One neat analogy I just thought of: you could think of a processor's "MHz" like the RPM gauge on a vehicle's internal combustion engine. If I rev up my motorcycle's engine to 6000 RPM, does that mean it can pull more load than a train's 16-cylinder prime mover at 1000 RPM? No, of course not. A prime mover has around 2000 to 4000 horsepower, while a motorcycle engine has around 100 to 200 horsepower (even the highest-horsepower motorcycle engine ever only just tops 200 hp).
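One way to make the analogy concrete (this framing is an addition, not part of the original answer): an engine's power depends on torque as well as RPM, and a CPU core's throughput depends on how much work it does per clock cycle (instructions per cycle, IPC) as well as on its clock speed. The IPC values below are hypothetical, chosen only to show that a lower clock can still win.

```python
from dataclasses import dataclass


@dataclass
class Core:
    name: str
    clock_ghz: float
    ipc: float  # average instructions completed per clock cycle (hypothetical)

    def giga_instructions_per_second(self) -> float:
        # Single-threaded throughput: cycles per second times work per cycle,
        # the CPU equivalent of "RPM times torque".
        return self.clock_ghz * self.ipc


# Illustrative cores, not measurements of any real product.
phone = Core("small mobile core", clock_ghz=2.7, ipc=1.0)
desktop = Core("wide desktop core", clock_ghz=2.2, ipc=2.5)

for core in (phone, desktop):
    print(f"{core.name}: {core.giga_instructions_per_second():.2f} G instr/s")
```

The "desktop" core runs at the lower clock yet completes about twice as many instructions per second, which is exactly why MHz alone is a poor guide.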

TDP is closer to horsepower than MHz, but not exactly.

A counterexample is a comparison between something like a 2014-model "Haswell" (4th Generation) Intel Core i5 processor and a high-end AMD processor. These two CPUs will be close in performance, but the Intel processor will use 50% less energy! Indeed, a 55 Watt Core i5 can often outperform a 105 Watt AMD "Piledriver" CPU. The primary reason is that Intel has a much more advanced microarchitecture, which has pulled away from AMD in performance since the "Core" brand started. Intel has also been shrinking its fab size much faster than AMD, leaving AMD in the dust.
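The "50% less energy" figure follows from the two TDPs if both chips finish the same work in the same time, because energy is power multiplied by time. The sketch treats TDP as actual power draw, which is a simplifying assumption: real consumption varies with load.

```python
def energy_saving(efficient_w: float, hungry_w: float) -> float:
    """Fraction of energy saved when both chips do the same work in the same time.

    Energy = power * time; with equal time, the energy ratio equals the
    power ratio, so the saving is 1 - (efficient / hungry).
    """
    return 1.0 - efficient_w / hungry_w


# TDP figures from the paragraph above, used as stand-ins for power draw.
saving = energy_saving(efficient_w=55.0, hungry_w=105.0)
print(f"Energy saved: {saving:.0%}")
```

That works out to about 48%. If the Core i5 also finishes sooner, as the paragraph suggests it often does, the saving is larger still, since it draws less power for less time.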

Desktop and laptop processors are fairly similar to one another in performance, until you get down to tiny Intel tablet chips, which perform about as well as ARM mobile SoCs because of power constraints. But as long as desktop and "full scale" laptop processors keep improving year over year, which seems likely, tablet processors will not overtake them.

I'll conclude by saying that MHz and number of cores are not completely useless metrics. You can use these metrics when you are comparing CPUs which:

- Are in the same market segment (smartphone/tablet/laptop/desktop);

- Are in the same CPU generation (i.e. the numbers are only meaningful if the CPUs are based on the same architecture, which usually means they'd be released around the same time);

- Have the same fab size and similar or identical TDP;

- Differ primarily or solely in MHz (clock speed) or number of cores when all of their specs are compared.
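The checklist above can be sketched as a small function. Everything here is hypothetical: the `CpuSpec` fields are just the properties the list mentions, the 15% TDP tolerance is an arbitrary stand-in for "similar", and the check cannot capture specs it doesn't model (cache, graphics, etc.), so the last condition is only approximated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CpuSpec:
    # Hypothetical record; fill the fields in from vendor spec sheets.
    segment: str            # "smartphone", "tablet", "laptop" or "desktop"
    microarchitecture: str  # stands in for "same CPU generation"
    fab_nm: int
    tdp_w: float
    clock_mhz: int
    cores: int


def clock_and_cores_comparable(a: CpuSpec, b: CpuSpec,
                               tdp_tolerance: float = 0.15) -> bool:
    """True if clock speed and core count are a fair basis for comparison.

    Requires the same segment, same microarchitecture, same fab size, and
    TDPs within `tdp_tolerance` (an assumed threshold) of each other.
    """
    similar_tdp = abs(a.tdp_w - b.tdp_w) <= tdp_tolerance * max(a.tdp_w, b.tdp_w)
    return (a.segment == b.segment
            and a.microarchitecture == b.microarchitecture
            and a.fab_nm == b.fab_nm
            and similar_tdp)


# Illustrative values only, not real product specifications.
chip_a = CpuSpec("desktop", "arch-X", 22, 84.0, 3400, 4)
chip_b = CpuSpec("desktop", "arch-X", 22, 84.0, 3500, 4)
tablet = CpuSpec("tablet", "arch-Y", 28, 4.0, 2700, 4)

print(clock_and_cores_comparable(chip_a, chip_b))
print(clock_and_cores_comparable(chip_a, tablet))
```

Only the first pair passes; the tablet chip fails on segment, generation, fab size and TDP at once, so its higher core count relative to its clock says nothing useful here.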

If all of these conditions hold for two CPUs (for instance, the Intel Xeon E3-1270v3 vs. the Intel Xeon E3-1275v3), then comparing them simply by MHz and/or number of cores can give you a clue about the difference in performance, but the difference will be much smaller than you expect on most workloads.
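One reason the difference is usually smaller than expected can be sketched with Amdahl's law, which the original answer doesn't name: extra cores only speed up the fraction of a program that actually runs in parallel. (The same idea is why per-core clock speeds can't simply be added up, as the comments below point out.) The 60% parallel fraction is an assumed value for illustration.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Amdahl's law: overall speedup when only part of the work parallelizes.

    The serial part takes the same time regardless of core count; the
    parallel part is divided evenly across `cores`.
    """
    serial = 1.0 - parallel_fraction
    return 1.0 / (serial + parallel_fraction / cores)


# Assumed workload: 60% of the single-core runtime can use extra cores.
for cores in (1, 2, 4, 8):
    print(f"{cores} cores -> {amdahl_speedup(0.6, cores):.2f}x")
```

Under that assumption, doubling from 4 to 8 cores lifts the overall speedup only from about 1.82x to about 2.11x, far short of the 2x jump that counting cores would suggest.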

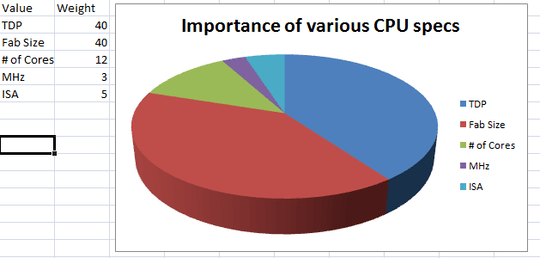

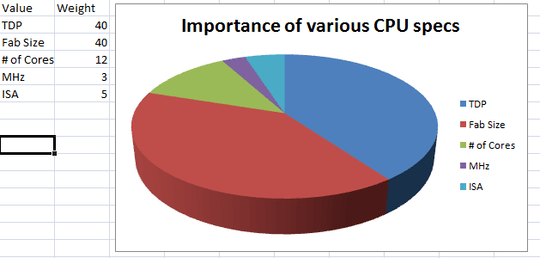

Here's a little chart I did up in Excel to demonstrate the relative importance of some of the common CPU specs. (Note: "MHz" actually refers to "clock speed", but I was in a hurry; "ISA" stands for "Instruction Set Architecture", which I'm using loosely here to mean the actual design of the CPU.)

Note: These numbers are approximate/ballpark figures based on my experience, not any scientific research.

Come on, people! That's not 5.4 GHz in total. That's not how it works! – Little Helper – 2014-09-08T14:23:46.730

You don't indicate what type of CPU the Edge has. If it's not an Intel/AMD x86 CPU, then you cannot compare it to your HP or iMac for about a dozen different reasons. Why don't you just run a number of performance tests on the 3 machines to understand the differences between the systems? – Ramhound – 2014-09-08T14:40:48.003

@Ramhound the Galaxy Note Edge is basically an ARM phablet (smartphone/tablet). Its CPU perf is very likely to exceed any smartphone's perf to date. However, it is still a much smaller class CPU than desktop or laptop CPUs, and will thus not come close to matching them in performance. – allquixotic – 2014-09-08T14:43:32.877

To elaborate on Little Helper's comment: You can't just add up clockspeeds on each core/die/chip and expect a cumulative level of performance. Most computer workloads are not adjusted for multi-processing. Analogy: One racecar going at 300MPH vs 10 cars going at 30MPH. Driving 10 cars at once won't make you go as fast as a racecar; you can only match the racecar if you have 10 places to drive to. The analogy breaks down due to locality and shared routes in physical space, so don't try to read too deep into it, but the basic idea is there. – joe – 2014-09-08T20:34:07.757

https://en.wikipedia.org/wiki/Megahertz_myth – gronostaj – 2014-09-09T18:15:41.607

@LittleHelper You'd be surprised, you'd be damn well surprised about what kind of people have shocking misconceptions like that: Accredited professors, college graduates, experienced senior developers... – Traubenfuchs – 2014-09-10T07:15:09.503

@joe your racecar analogy is wrong. If you have to drive 10 miles in total and you have 1 car that does 60mph, it will take 10 minutes. If you have 10 cars, it will take 1 minute to accumulate 10 miles. Now add the time it took to assign the drivers to the cars and now the analogy works. – Gusdor – 2014-09-10T12:19:43.537

It's probably best to understand the difference between any two processors first. What makes an AMD Opteron and an Intel Xeon and an Intel Celeron different? They have slightly different ways of achieving their goals even though they're mostly compatible processors, so people benchmark them to see how they really perform. The same is true of mobile processors. – mikebabcock – 2014-09-12T14:04:21.010