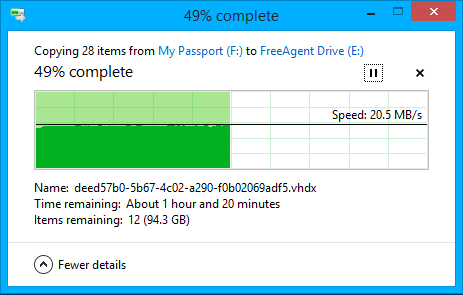

I was copying a few very large files to my computer: 28 files totaling roughly 200 GB (meaning each one was about 7 GB on average). I noticed that the transfer ran at more or less the same speed the entire time:

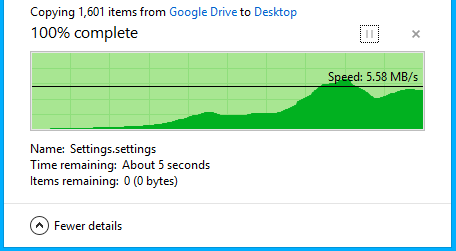

However, transferring many small files shows much larger fluctuations in speed:

(Yes, this is from Google Drive, but the files are all downloaded locally to my computer.)

It has to do with the per-file overhead of a transfer. Small files may require random disk access, and the transfer speed also depends on disk fragmentation. More info here – Vinayak – 2014-09-07T02:17:13.400

@Vinayak Why would you need random disk access? What would it be used for? – Jon – 2014-09-07T02:29:51.540

Files aren't always stored sequentially. This video explains it quite well. – Vinayak – 2014-09-07T02:36:28.590

I understand how defrag works, but why would small files require random disk accesses? – Jon – 2014-09-07T02:44:01.433

Because files are written wherever free space is still available on the HDD. For instance, there might be 10 MB of free space somewhere between two large files. That 10 MB isn't enough to store a large 5 GB movie, but it can accommodate hundreds of small 1-100 KB files (text files, INI configuration files, documents, GIFs, etc.). When that block fills up, the file system will look for other small blocks of free space to fill with data, so a batch of small files can end up scattered across the disk. – Vinayak – 2014-09-07T02:49:43.423

A better example here – Vinayak – 2014-09-07T03:23:30.243
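
To put rough numbers on the overhead argument in the comments above, here is a minimal sketch of a toy model in which every file costs a fixed overhead (seek, metadata update, open/close) on top of its sequential transfer time. The function names, the 100 MB/s throughput, and the 10 ms per-file overhead are illustrative assumptions, not measurements from the question.

```python
def transfer_time(file_sizes_mb, throughput_mb_s=100.0, per_file_overhead_s=0.01):
    """Estimated seconds to copy the files under a simple overhead model.

    Assumption: each file pays a fixed overhead plus size / throughput.
    Real disks are messier (caching, fragmentation, queueing).
    """
    return sum(per_file_overhead_s + size / throughput_mb_s
               for size in file_sizes_mb)


def effective_speed(file_sizes_mb, **kwargs):
    """Average MB/s actually observed for the whole batch."""
    return sum(file_sizes_mb) / transfer_time(file_sizes_mb, **kwargs)


# 28 files of about 7 GB each, as in the question.
large_files = [7000.0] * 28
# Hypothetical batch: 20,000 files of 50 KB each (about 1 GB in total).
small_files = [0.05] * 20000

print(f"large files: {effective_speed(large_files):.1f} MB/s")
print(f"small files: {effective_speed(small_files):.1f} MB/s")
```

Under these assumptions the large files run at almost the full 100 MB/s, because 10 ms is negligible next to 70 seconds of copying per file. The small files average under 5 MB/s, because the overhead is about twenty times longer than the data transfer itself. When a batch mixes file sizes, the displayed speed swings between those two extremes, which is consistent with the fluctuations in the question.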