11

4

[Latest update: benchmark program and preliminary resuls available, see below]

So I want to test the speed/complexity tradeoff with a classic application: sorting.

Write an ANSI C function that sorts an array of floating point numbers in increasing order.

You can't use any libraries, system calls, multithreading or inline ASM.

Entries judged on two components: code length and performance. Scoring as follows: entries will be sorted by length (log of #characters without whitespace, so you can keep some formatting) and by performance (log of #seconds over a benchmark), and each interval [best,worst] linearly normalised to [0,1]. The total score of a program will be the average of the two normalised scores. Lowest score wins. One entry per user.

Sorting will have to (eventually) be in place (i.e. the input array will have to contain sorted values at return time), and you must use the following signature, including names:

void sort(float* v, int n) {

}

Characters to be counted: those in the sort function, signature included, plus additional functions called by it (but not including testing code).

The program must handle any numeric value of float and arrays of length >=0, up to 2^20.

I'll plug sort and its dependencies into a testing program and compile on GCC (no fancy options). I'll feed a bunch of arrays into it, verify correctness of results and total run time. Tests will be run on an Intel Core i7 740QM (Clarksfield) under Ubuntu 13.

Array lengths will span the whole allowed range, with a higher density of short arrays. Values will be random, with a fat-tail distribution (both in the positive and negative ranges). Duplicated elements will be included in some tests.

The test program is available here: https://gist.github.com/anonymous/82386fa028f6534af263

It imports the submission as user.c. The number of test cases (TEST_COUNT) in the actual benchmark will be 3000. Please provide any feedback in the question comments.

Deadline: 3 weeks (7 April 2014, 16:00 GMT). I will post the benchmark in 2 weeks.

It may be advisable to post close to the deadline to avoid giving away your code to competitors.

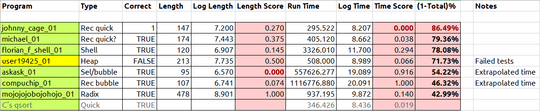

Preliminary results, as of benchmark publication:

Here are some results. The last column shows the score as a percentage, the higher the better, putting Johnny Cage in first place. Algorithms that were orders of magnitude slower than the rest were run on a subset of tests, and time extrapolated. C's own qsort is included for comparison (Johnny's is faster!). I'll perform a final comparison at closing time.

3Can you provide the benchmark? Different sorting functions perform differently based on the nature of the data. E.g. bubble sort is faster than the stdlib quicksort for tiny arrays. We might like to optimize for your benchmark. – Claudiu – 2014-03-14T17:20:57.793

@Claudiu I once saw a lovely short version of quicksort, that ran just as well as any other on data where every element was different. But if some elements were the same, it ran at an absolute snail's pace. I'm not talking about the known issue of bad choice of pivot in sorted / partially sorted arrays. My test data was completely randomly shuffled. This particular version just didn't like duplicates. Weird, but true. – Level River St – 2014-03-14T17:37:14.583

I haven't written it yet :-) See edit though. – Mau – 2014-03-14T17:37:54.617

Ok if you want to have a metric that considers both length and runtime, but it's not clear how much weight you're putting on each. length * runtime might be clearer than xlength + yruntime, where x and y are unknown to us. – Level River St – 2014-03-14T17:42:17.937

@steveverrill: x and y are 1 (same weight). length and runtime are normalised based on best and worst entries in each category. Is there any unfairness in this? – Mau – 2014-03-14T17:45:37.147

3Welcome to PPCG! While we don't prohibit language-specific challenges, we do strongly encourage formulating questions in a language-agnostic manner whenever possible. Consider it for your next question, and have fun with this one! – Jonathan Van Matre – 2014-03-14T17:55:35.113

X in characters? ok. Y in milliseconds? seconds? hours? weeks? On your machine or on mine? A short slow program will do well if you measure in hours, and a long fast one if you measure in milliseconds. Hence my suggested metric of length*time which if you log it becomes log(length)+log(time) so your units of time and the speed of your machine become irrelevant. – Level River St – 2014-03-14T17:55:53.837

@JonathanVanMatre: thank you! steveverrill: normalisation makes it machine independent already, but you have a point about the possibility of a large range of different values. Changing the scoring. – Mau – 2014-03-14T18:02:55.927

@Mau It doesn't make it machine independent. It just makes it a little less dependent on processor speed. It does not take into account different amounts of registers, caches, out-of-order execution, ... – Howard – 2014-03-14T18:45:39.280

1@steveverrill: I don't follow. It doesn't matter what your unit is because you scale it from 0 to 1 anyway. If min is 1 hour and max is 3 hours, something that takes 1.5 hours will be 0.25 regardless of whether min is 60 minutes, max is 180 minutes, and it takes 90 minutes – Claudiu – 2014-03-14T19:13:33.900

@Howard: fair enough, but all programs will be run on the same machine. – Mau – 2014-03-14T22:10:50.807

Can we use SSE instructions ? – Michael M. – 2014-03-14T23:57:33.530

@Michael I'm sure OP will compile with

-march=native, but, as stated in the rules, you cannot use inline assembly – mniip – 2014-03-15T05:12:09.860No inline assembly :) – Mau – 2014-03-15T11:52:34.637

@Mau Can I use

void sort(float values[],int n)? I feel more comfortable in that and I guess that both are same BTW. – Mukul Kumar – 2014-03-16T03:01:14.4401OP only said no inline assembly - he didn't say anything about intrinsics. – Paul R – 2014-03-16T09:11:10.220

Any minimum size? Can it fail if n=0? – Florian F – 2014-03-16T18:11:41.667

Well I'd say n=0 should be covered, like in any well-behaved algorithm :) – Mau – 2014-03-16T22:06:57.910

You should clarify whether the mandatory function signature is included in the count of "those in the sort function". There are entries counting each way. – Jonathan Van Matre – 2014-03-19T11:51:17.820

@JonathanVanMatre: thanks, edited. – Mau – 2014-03-19T13:48:34.610

It's probably not a big deal, but which Ubuntu 13? – Kendall Frey – 2014-03-20T13:09:00.477

13.10. Stock. With the yellow salamander as background. – Mau – 2014-03-20T13:57:20.660

Really looking forward to seeing how these perform! – Claudiu – 2014-03-26T22:03:33.253

So any Whitespace program will have a score of −∞, because the logarithm of zero tends to negative infinity? – wchargin – 2014-03-27T23:23:54.090

@WChargin any Whitespace program would not make it to the test bench :) – Mau – 2014-03-27T23:26:55.843

Your benchmark should not allow this: void sort(float*v,int n){ while(n--) *v++=0; } – Florian F – 2014-04-05T19:29:36.717

O(n) performance would stand out too much :) – Mau – 2014-04-05T19:59:26.127

So? No final results? – Florian F – 2014-05-11T12:08:54.947

Sorry guys, Linux box died :( One on its way soon. I took a snapshot of submission as of deadline. Will be up soon! – Mau – 2014-05-11T13:26:34.513